libigl tutorial

Libigl is an open source C++ library for geometry processing research and development. Dropping the heavy data structures of tradition geometry libraries, libigl is a simple header-only library of encapsulated functions. This combines the rapid prototyping familiar to Matlab or Python programmers with the performance and versatility of C++. The tutorial is a self-contained, hands-on introduction to libigl. Via interactive, step-by-step examples, we demonstrate how to accomplish common geometry processing tasks such as computation of differential quantities and operators, real-time deformation, parametrization, numerical optimization and remeshing. Each section of the lecture notes links to a cross-platform example application.

Chapter 1¶

We introduce libigl with a series of self-contained examples. The purpose of each example is to showcase a feature of libigl while applying to a practical problem in geometry processing. In this chapter, we will present the basic concepts of libigl and introduce a simple mesh viewer that allows to visualize a surface mesh and its attributes. All the tutorial examples are cross-platform and can be compiled on MacOSX, Linux and Windows.

Libigl Design Principles¶

Before getting into the examples, we summarize the main design principles in libigl:

-

No complex data types. We mostly use matrices and vectors. This greatly favors code reusability and forces the function authors to expose all the parameters used by the algorithm.

-

Minimal dependencies. We use external libraries only when necessary and we wrap them in a small set of functions.

-

Header-only. It is straight forward to use our library since it is only one additional include directory in your project. (if you are worried about compilation speed, it is also possible to build the library as a static library)

-

Function encapsulation. Every function (including its full implementation) is contained in a pair of .h/.cpp files with the same name of the function.

Downloading Libigl¶

libigl can be downloaded from our github repository or cloned with git:

git clone https://github.com/libigl/libigl.git

The core libigl functionality only depends on the C++ Standard Library and

Eigen. Optional dependencies will be downloaded upon issuing cmake, below.

To build all the examples in the tutorial (and tests), you can use the CMakeLists.txt in the root folder:

cd libigl/

mkdir build

cd build

cmake ../

make

Note about CGAL

The optional dependency CGAL has been notoriously difficult to setup (as it also depends on boost/gmp/mpfr). By default, it will only be enabled on Linux/macOS if GMP and MPFR are installed system-wide. On Windows, all its dependencies will be downloaded by CMake, thus requiring no setup on your part.

The examples can also be built independently using the CMakeLists.txt inside each example folder.

Note for linux users

Many linux distributions do not include gcc and the basic development tools in their default installation. On Ubuntu, you need to install the following packages:

sudo apt-get install \

git \

build-essential \

cmake \

libx11-dev \

mesa-common-dev libgl1-mesa-dev libglu1-mesa-dev \

libxrandr-dev \

libxi-dev \

libxmu-dev \

libblas-dev \

libxinerama-dev \

libxcursor-dev

Note for Windows users

libigl only supports the Microsoft Visual Studio 2015 compiler and later, in 64bit mode. It will not work with a 32bit build and it will not work with older versions of visual studio.

A few examples in Chapter 5 requires the CoMiSo solver. We provide a mirror of CoMISo that works out of the box with libigl. A copy will be downloaded automatically by CMake the first time you build the libigl root project. You can build the tutorials as usual and libigl will automatically find and compile CoMISo.

Note 1: CoMISo is distributed under the GPL3 license, it does impose restrictions on commercial usage.

Note 2: CoMISo requires a blas implementation. We use the built-in blas in macosx and linux, and we bundle a precompiled binary for VS2015 64 bit. Do NOT compile the tutorials in 32 bit on windows.

Libigl Example Project

We provide a blank project example showing how to use libigl and CMake. This is the recommended way of using libigl in your project. Feel free and encouraged to use this repository as a template to start a new personal project using libigl.

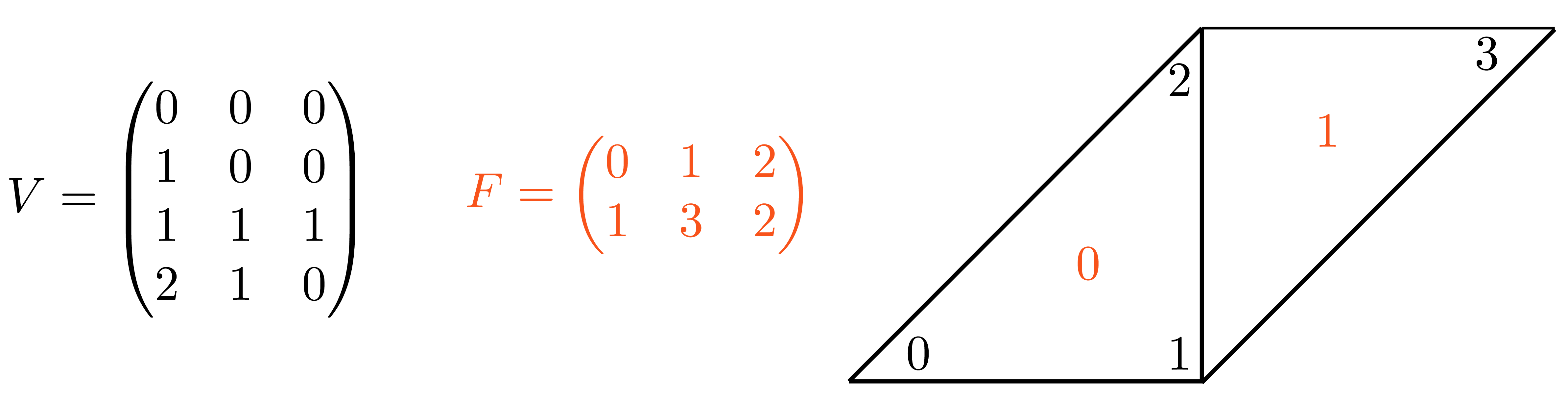

Mesh Representation¶

libigl uses the Eigen library to encode vector and matrices. We suggest that you keep the dense and sparse quick reference guides at hand while you read the examples in this tutorial.

A triangular mesh is encoded as a pair of matrices:

Eigen::MatrixXd V;

Eigen::MatrixXi F;

V is a #N by 3 matrix which stores the coordinates of the vertices. Each

row stores the coordinate of a vertex, with its x,y and z coordinates in the first,

second and third column, respectively. The matrix F stores the triangle

connectivity: each line of F denotes a triangle whose 3 vertices are

represented as indices pointing to rows of V.

Note that the order of the vertex indices in F determines the orientation of

the triangles and it should thus be consistent for the entire surface.

This simple representation has many advantages:

- it is memory efficient and cache friendly

- the use of indices instead of pointers greatly simplifies debugging

- the data can be trivially copied and serialized

libigl provides input [output] functions to read [write] many common mesh formats. The IO functions are contained in the files read*.h and write*.h. As a general rule each libigl function is contained in a pair of .h/.cpp files with the same name. By default, the .h files include the corresponding cpp files, making the library header-only.

Reading a mesh from a file requires a single libigl function call:

igl::readOFF(TUTORIAL_SHARED_PATH "/cube.off", V, F);

The function reads the mesh cube.off and it fills the provided V and F matrices.

Similarly, a mesh can be written in an OBJ file using:

igl::writeOBJ("cube.obj",V,F);

Example 101 contains a simple mesh converter from OFF to OBJ format.

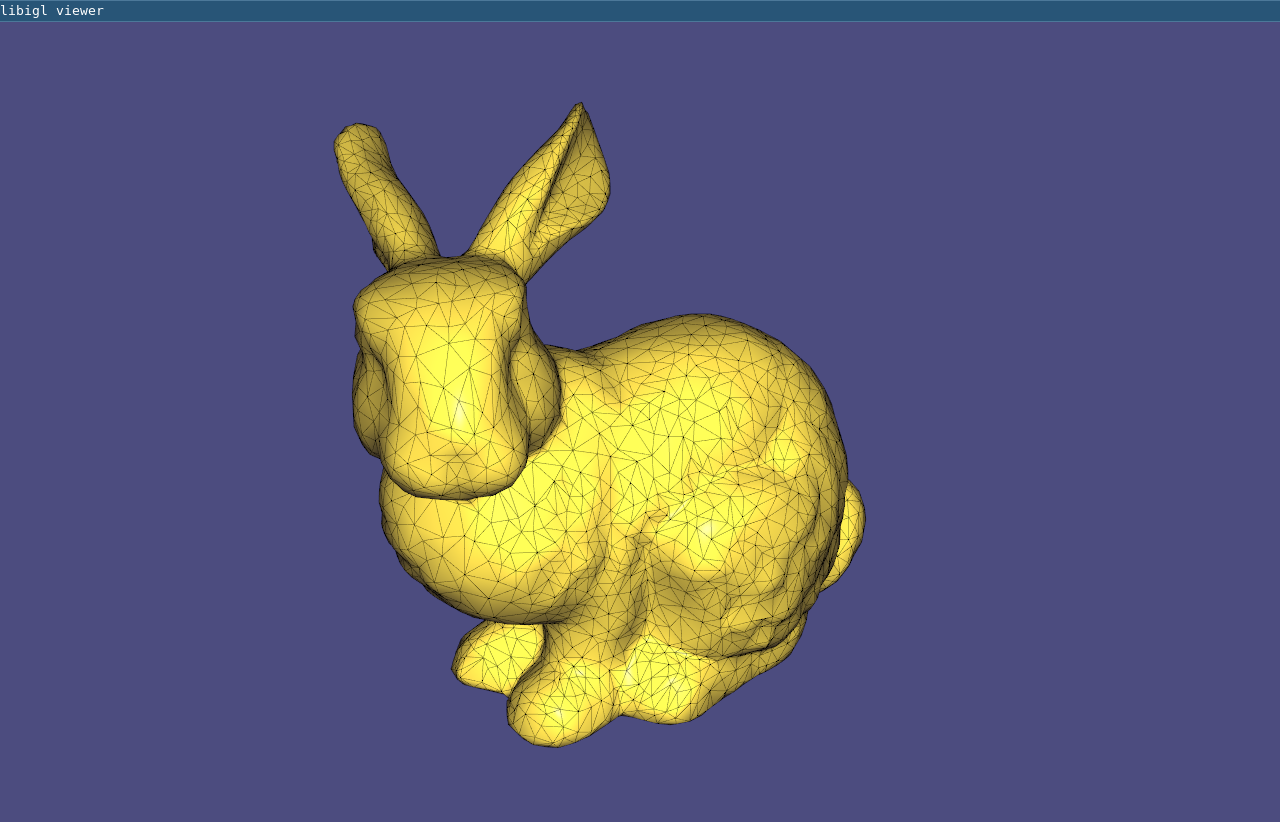

Visualizing Surfaces¶

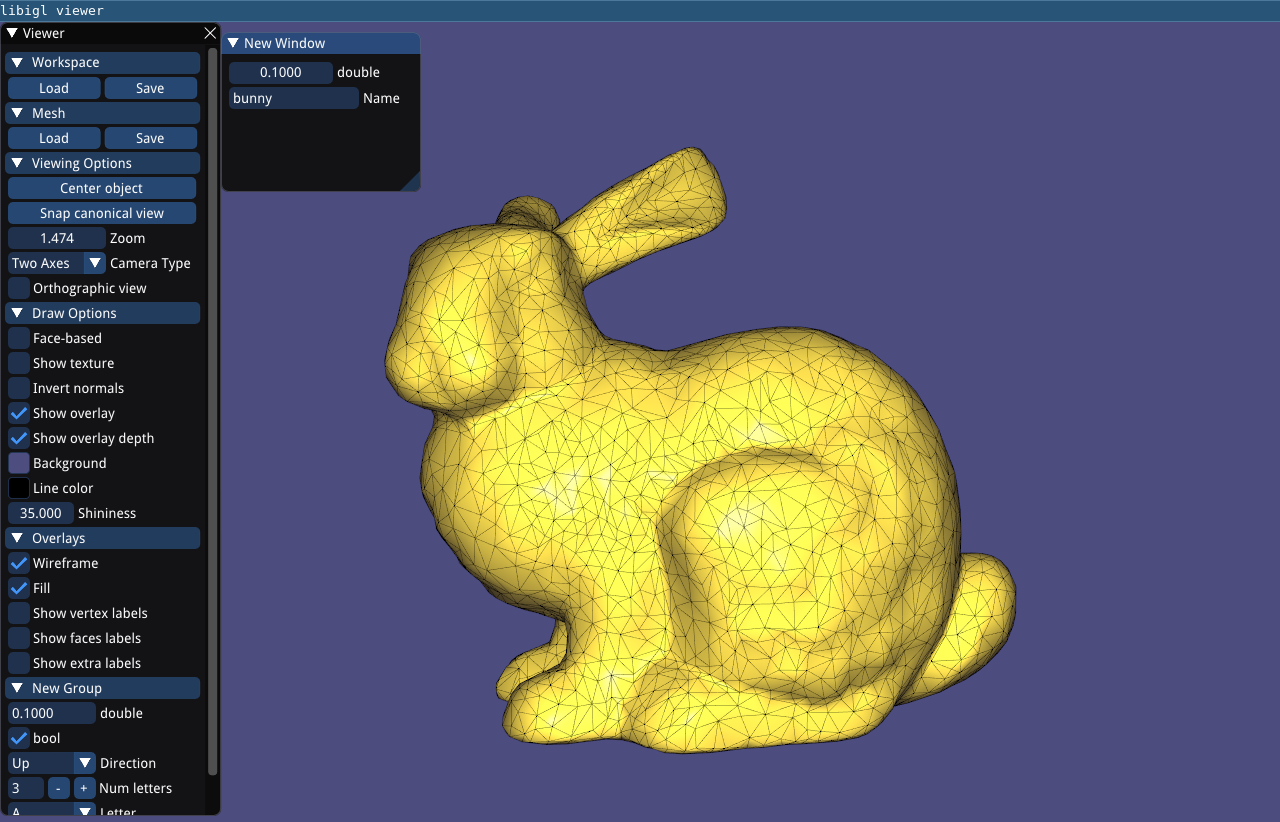

Libigl provides an glfw-based OpenGL 3.2 viewer to visualize surfaces, their properties and additional debugging information.

The following code (Example 102) is a basic skeleton for all the examples that will be used in the tutorial. It is a standalone application that loads a mesh and uses the viewer to render it.

#include <igl/readOFF.h>

#include <igl/opengl/glfw/Viewer.h>

Eigen::MatrixXd V;

Eigen::MatrixXi F;

int main(int argc, char *argv[])

{

// Load a mesh in OFF format

igl::readOFF(TUTORIAL_SHARED_PATH "/bunny.off", V, F);

// Plot the mesh

igl::opengl::glfw::Viewer viewer;

viewer.data().set_mesh(V, F);

viewer.launch();

}

The function set_mesh copies the mesh into the viewer.

Viewer.launch() creates a window, an OpenGL context and it starts the draw loop.

The default camera motion mode is 2-axis (ROTATION_TYPE_TWO_AXIS_VALUATOR_FIXED_UP),

which can be changed to 3-axis trackball style by adding this line:

viewer.core().set_rotation_type(igl::opengl::ViewerCore::ROTATION_TYPE_TRACKBALL);

Interaction With Keyboard And Mouse¶

Keyboard and mouse events triggers callbacks that can be registered in the viewer. The viewer supports the following callbacks:

bool (*callback_pre_draw)(Viewer& viewer);

bool (*callback_post_draw)(Viewer& viewer);

bool (*callback_mouse_down)(Viewer& viewer, int button, int modifier);

bool (*callback_mouse_up)(Viewer& viewer, int button, int modifier);

bool (*callback_mouse_move)(Viewer& viewer, int mouse_x, int mouse_y);

bool (*callback_mouse_scroll)(Viewer& viewer, float delta_y);

bool (*callback_key_down)(Viewer& viewer, unsigned char key, int modifiers);

bool (*callback_key_up)(Viewer& viewer, unsigned char key, int modifiers);

A keyboard callback can be used to visualize multiple meshes or different stages of an algorithm, as demonstrated in Example 103, where the keyboard callback changes the visualized mesh depending on the key pressed:

bool key_down(igl::opengl::glfw::Viewer& viewer, unsigned char key, int modifier)

{

if (key == '1')

{

viewer.data().clear();

viewer.data().set_mesh(V1, F1);

viewer.core.align_camera_center(V1,F1);

}

else if (key == '2')

{

viewer.data().clear();

viewer.data().set_mesh(V2, F2);

viewer.core.align_camera_center(V2,F2);

}

return false;

}

The callback is registered in the viewer as follows:

viewer.callback_key_down = &key_down;

Note that the mesh is cleared before using set_mesh. This has to be called every time the number of vertices or faces of the plotted mesh changes. Every callback returns a boolean value that tells the viewer if the event has been handled by the plugin, or if the viewer should process it normally. This is useful, for example, to disable the default mouse event handling if you want to control the camera directly in your code.

The viewer can be extended using plugins, which are classes that implements all the viewer’s callbacks. See the Viewer_plugin for more details.

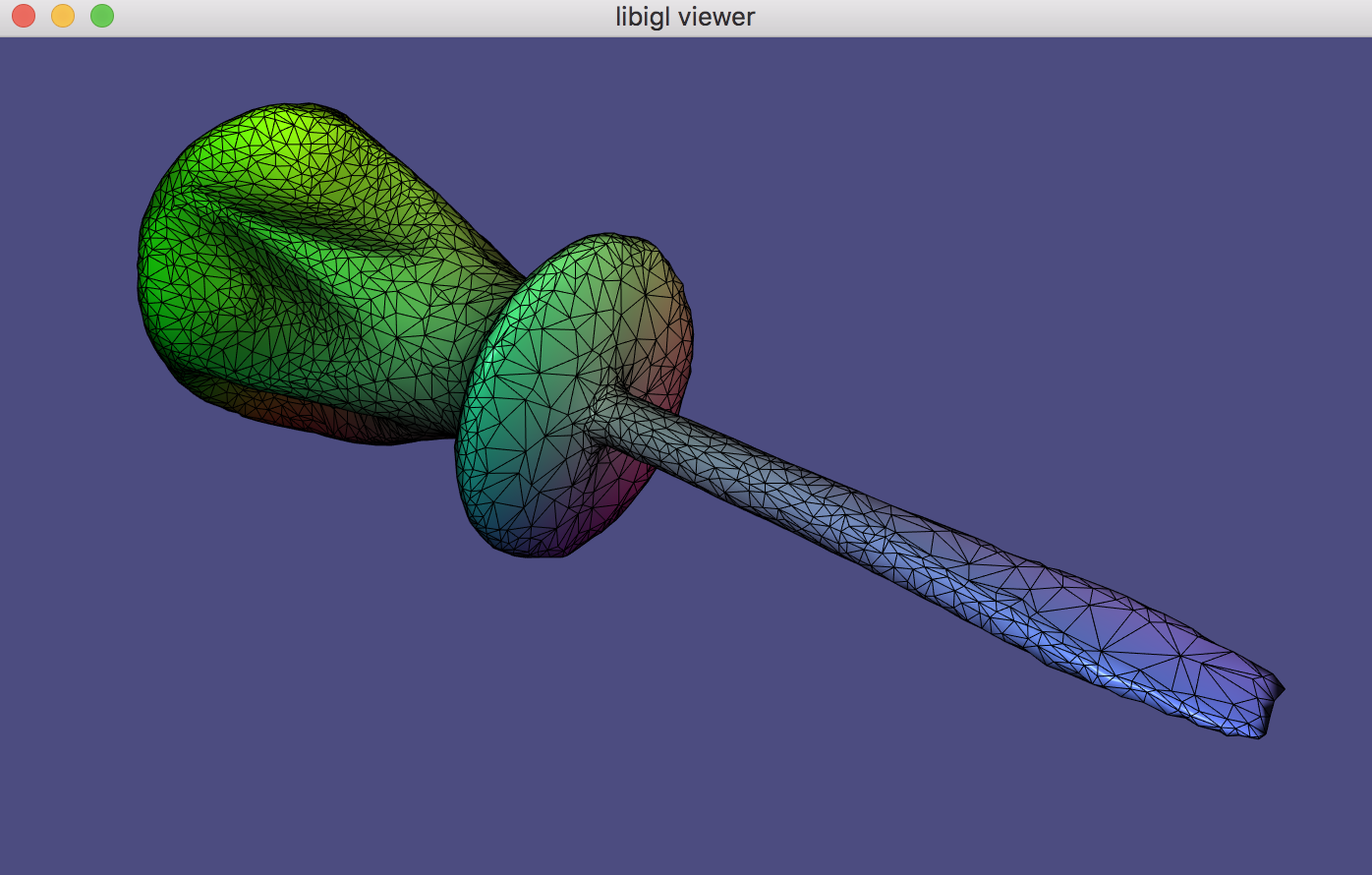

Scalar Field Visualization¶

Colors can be associated to faces or vertices using the

set_colors function:

viewer.data().set_colors(C);

C is a #C by 3 matrix with one RGB color per row. C must have as many rows

as the number of faces or the number of vertices of the mesh. Depending on

the size of C, the viewer applies the color to the faces or to the vertices.

In Example 104, the colors of mesh vertices are set according to their Cartesian

coordinates.

Per-Vertex scalar fields can be directly visualized using set_data function:

viewer.data().set_data(D);

D is a #V by 1 vector with one value corresponding to each vertex. set_data

will color according to linearly interpolating the data within a triangle (in

the fragment shader) and use this

interpolated data to look up a color in a colormap (stored as a texture). The

colormap defaults to igl::COLOR_MAP_TYPE_VIRIDIS with 21 discrete intervals.

A custom colormap may be set with set_colormap.

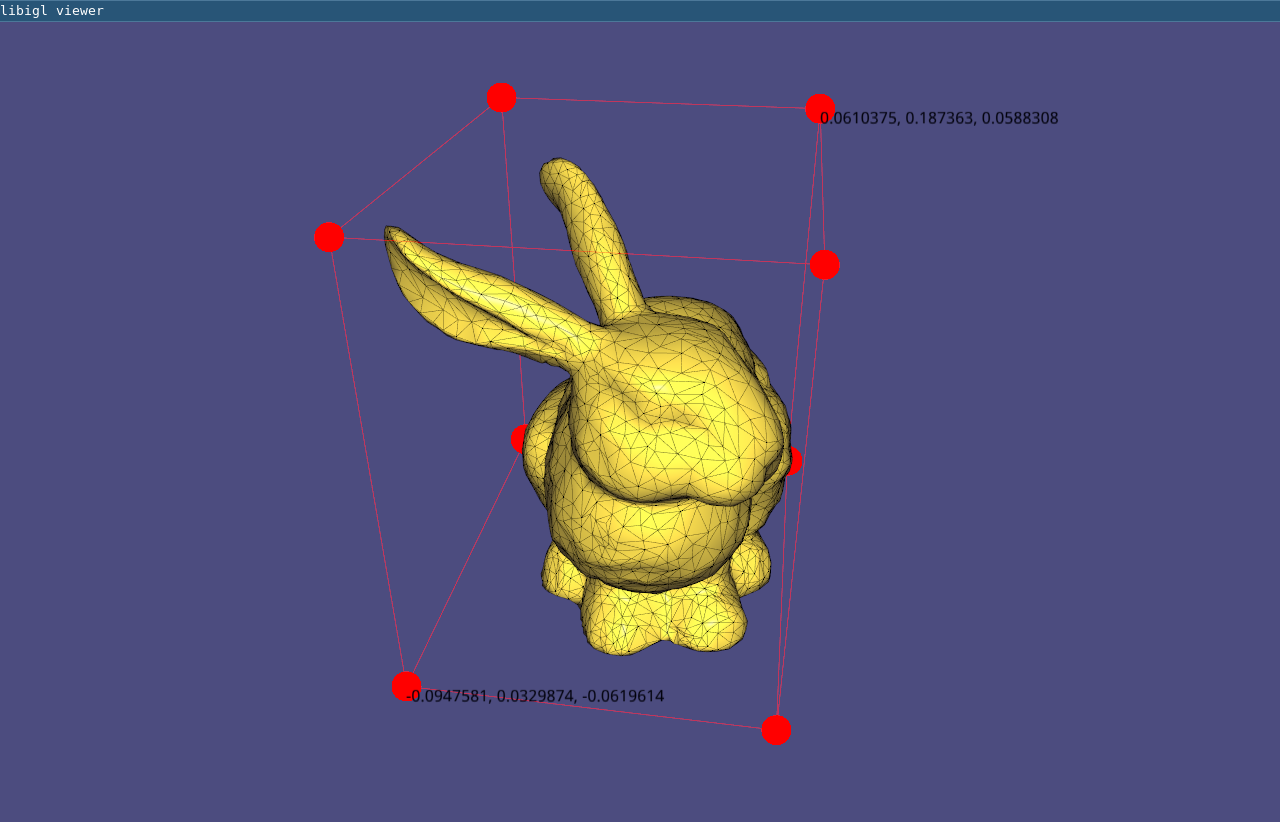

Overlays¶

In addition to plotting the surface, the viewer supports the visualization of points, lines and text labels: these overlays can be very helpful while developing geometric processing algorithms to plot debug information.

viewer.data().add_points(P,Eigen::RowVector3d(r,g,b));

Draws a point of color r,g,b for each row of P. The point is placed at the coordinates specified in each row of P, which is a #P by 3 matrix. Size of the points (in pixels) can be changed globally by setting viewer.data().point_size.

viewer.data().add_edges(P1,P2,Eigen::RowVector3d(r,g,b));

Draws a line of color r,g,b for each row of P1 and P2, which connects the 3D point in to the point in P2. Both P1 and P2 are of size #P by 3.

viewer.data().add_label(p,str);

Draws a label containing the string str at the position p, which is a vector of length 3.

These functions are demonstrate in Example 105 where

the bounding box of a mesh is plotted using lines and points.

Using matrices to encode the mesh and its attributes allows to write short and

efficient code for many operations, avoiding to write for loops. For example,

the bounding box of a mesh can be found by taking the colwise maximum and minimum of V:

Eigen::Vector3d m = V.colwise().minCoeff();

Eigen::Vector3d M = V.colwise().maxCoeff();

Viewer Menu¶

As of latest version, the viewer uses a new menu and completely replaces AntTweakBar and nanogui with Dear ImGui. To extend the default menu of the viewer and to expose more user defined variables you have to implement a custom interface, as in Example 106:

// Add content to the default menu window

menu.callback_draw_viewer_menu = [&]()

{

// Draw parent menu content

menu.draw_viewer_menu();

// Add new group

if (ImGui::CollapsingHeader("New Group", ImGuiTreeNodeFlags_DefaultOpen))

{

// Expose variable directly ...

ImGui::InputFloat("float", &floatVariable, 0, 0, 3);

// ... or using a custom callback

static bool boolVariable = true;

if (ImGui::Checkbox("bool", &boolVariable))

{

// do something

std::cout << "boolVariable: " << std::boolalpha << boolVariable << std::endl;

}

// Expose an enumeration type

enum Orientation { Up=0, Down, Left, Right };

static Orientation dir = Up;

ImGui::Combo("Direction", (int *)(&dir), "Up\0Down\0Left\0Right\0\0");

// We can also use a std::vector<std::string> defined dynamically

static int num_choices = 3;

static std::vector<std::string> choices;

static int idx_choice = 0;

if (ImGui::InputInt("Num letters", &num_choices))

{

num_choices = std::max(1, std::min(26, num_choices));

}

if (num_choices != (int) choices.size())

{

choices.resize(num_choices);

for (int i = 0; i < num_choices; ++i)

choices[i] = std::string(1, 'A' + i);

if (idx_choice >= num_choices)

idx_choice = num_choices - 1;

}

ImGui::Combo("Letter", &idx_choice, choices);

// Add a button

if (ImGui::Button("Print Hello", ImVec2(-1,0)))

{

std::cout << "Hello\n";

}

}

};

If you need a separate new menu window implement:

// Draw additional windows

menu.callback_draw_custom_window = [&]()

{

// Define next window position + size

ImGui::SetNextWindowPos(ImVec2(180.f * menu.menu_scaling(), 10), ImGuiSetCond_FirstUseEver);

ImGui::SetNextWindowSize(ImVec2(200, 160), ImGuiSetCond_FirstUseEver);

ImGui::Begin(

"New Window", nullptr,

ImGuiWindowFlags_NoSavedSettings

);

// Expose the same variable directly ...

ImGui::PushItemWidth(-80);

ImGui::DragFloat("float", &floatVariable, 0.0, 0.0, 3.0);

ImGui::PopItemWidth();

static std::string str = "bunny";

ImGui::InputText("Name", str);

ImGui::End();

};

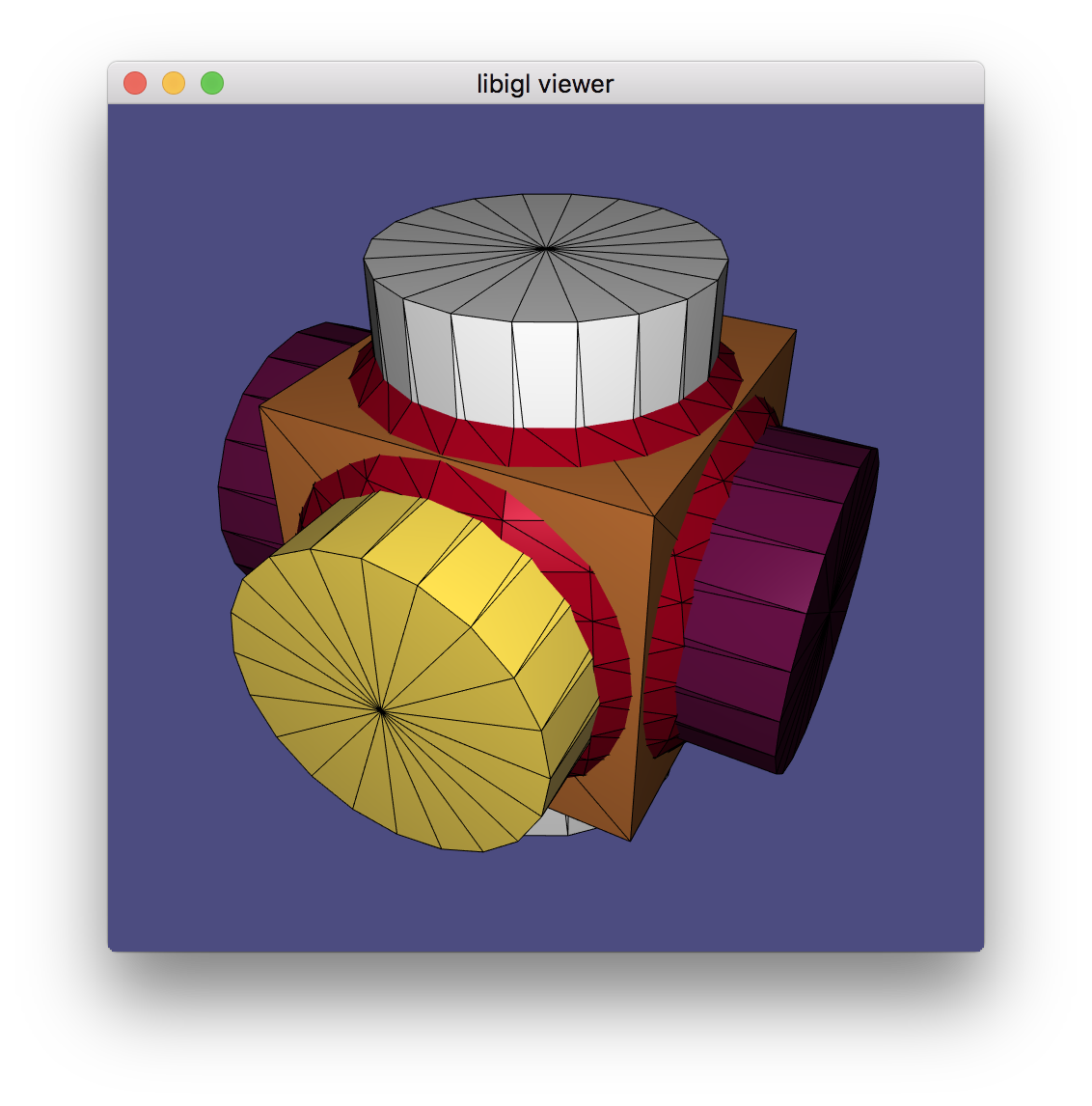

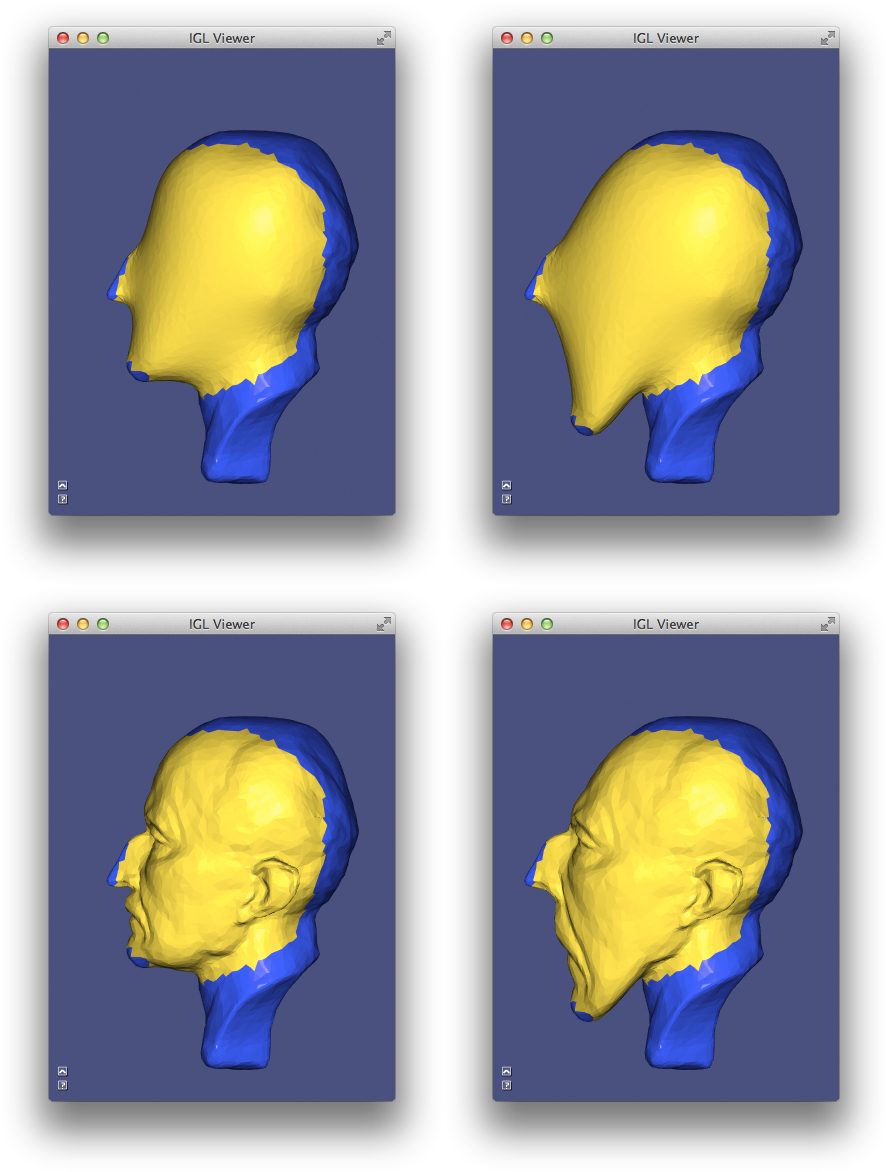

Multiple Meshes¶

Libigl’s igl::opengl::glfw::Viewer provides basic support for rendering

multiple meshes.

Which mesh is selected is controlled via the viewer.selected_data_index

field. By default the index is set to 0, so in the typical case of a single mesh

viewer.data() returns the igl::ViewerData corresponding to the one

and only mesh.

igl::opengl::glfw::Viewer can render multiple meshes, each with its own attributes like colors.

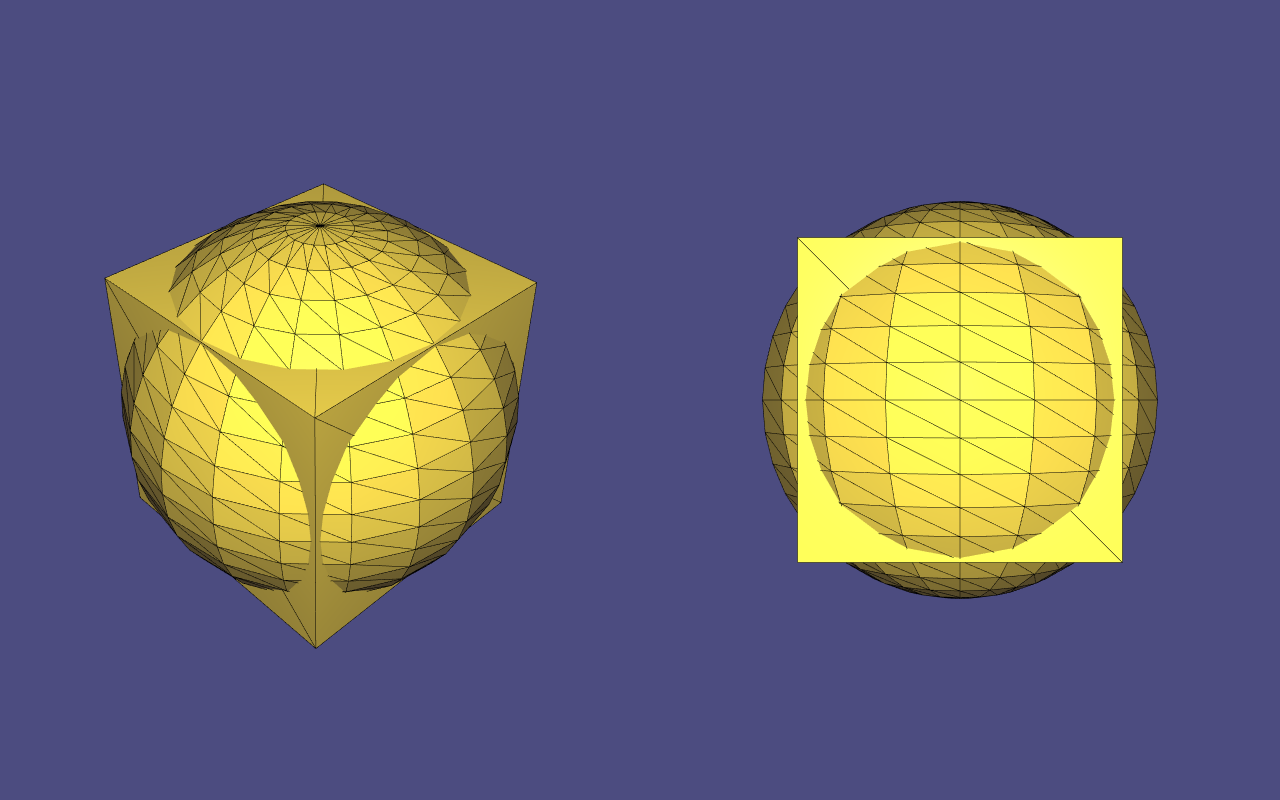

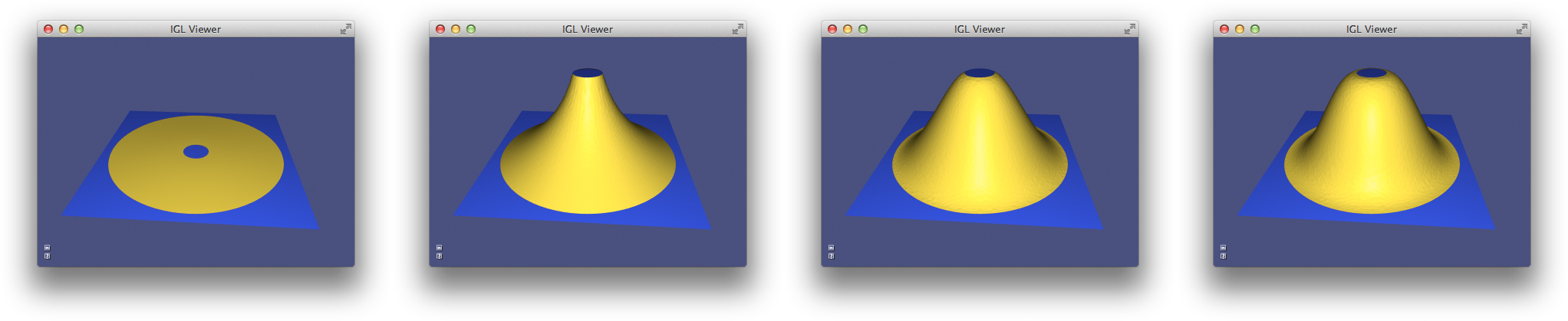

Multiple Views¶

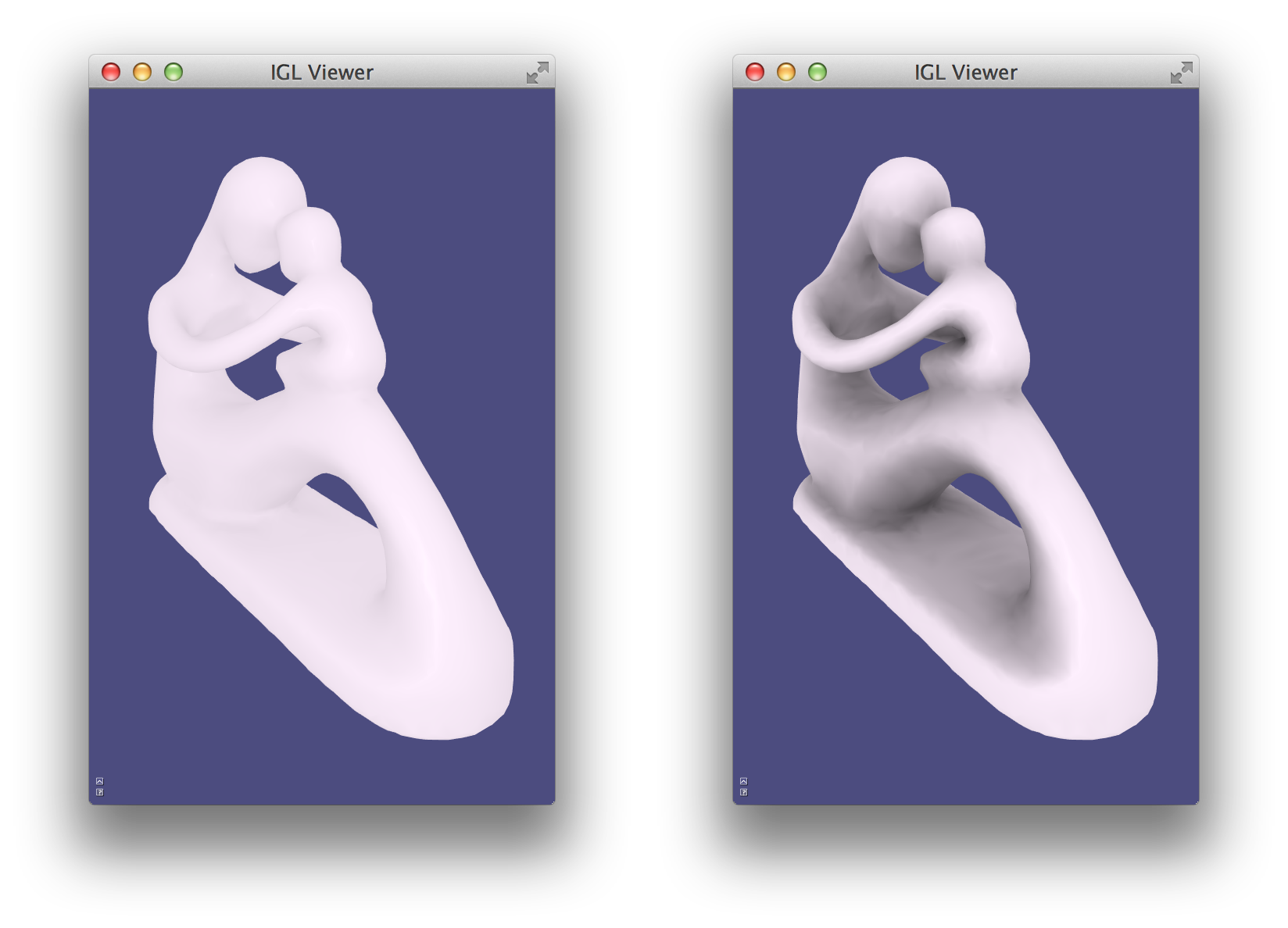

Libigl’s igl::opengl::glfw::Viewer provides basic support for rendering meshes with multiple views.

A new view core can be added to the viewer using the Viewer::append_core() method.

There can be a maximum of 31 cores created through the life of any viewer.

Each core is assigned an unsigned int id that is guaranteed to be unique.

A core can be accessed by its id calling the Viewer::core(id) method.

When there are more than one view core, the user is responsible for specifying each

viewport’s size and position by setting their viewport attribute. The user must also

indicates how to resize each viewport when the size of the window changes. For example:

viewer.callback_post_resize = [&](igl::opengl::glfw::Viewer &v, int w, int h) {

v.core( left_view).viewport = Eigen::Vector4f(0, 0, w / 2, h);

v.core(right_view).viewport = Eigen::Vector4f(w / 2, 0, w - (w / 2), h);

return true;

};

Note that the viewport currently hovered by the mouse can be selected using the

Viewer::selected_core_index() method, and the selected view core can then be

accessed by calling viewer.core_list[viewer.selected_core_index].

Finally, the visibility of a mesh on a given view core is controlled by a bitmask flag per mesh. This property can be easily controlled by calling the method

viewer.data(mesh_id).set_visible(false, view_id);

When appending a new mesh or a new view core, an optional argument controls the visibility

of the existing objects with respect to the new mesh/view. Please refer to the documentation

of Viewer::append_mesh() and Viewer::append_core() for more details.

igl::opengl::glfw::Viewer can render the same scene using multiple views, each with its own attributes like colors, and individual mesh visibility.

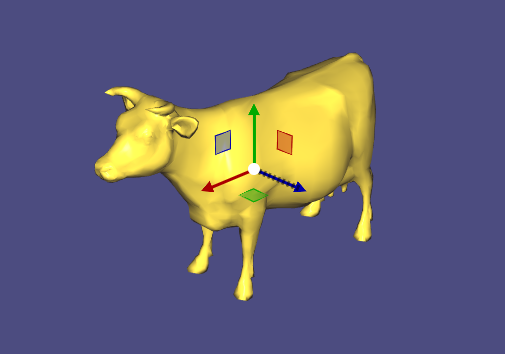

Viewer Guizmos¶

Bug

It is currently not possible to have more than one ImGui-related viewer plugin active at the same time (that includes ImGuiMenu, ImGuizmoPlugin and SelectionPlugin). Please follow #1656 for more information.

The viewer integrates with ImGuizmo to provide

widgets for manipulating a mesh. Mesh manipulations consist of translations, rotations,

and scaling, where W,w, E,e, and R,r can be used to toggle between them, respectively.

First, register the ImGuizmoPlugin plugin with the Viewer:

#include <igl/opengl/glfw/imgui/ImGuizmoPlugin.h>

// ImGuizmoPlugin replaces the ImGuiMenu plugin entirely

igl::opengl::glfw::imgui::ImGuizmoPlugin plugin;

vr.plugins.push_back(&plugin);

On initialization, ImGuizmo must be provided with the mesh centroid, as shown in Example 109:

// Initialize ImGuizmo at mesh centroid

plugin.T.block(0,3,3,1) =

0.5*(V.colwise().maxCoeff() + V.colwise().minCoeff()).transpose().cast<float>();

// Update can be applied relative to this remembered initial transform

const Eigen::Matrix4f T0 = plugin.T;

// Attach callback to apply imguizmo's transform to mesh

plugin.callback = [&](const Eigen::Matrix4f & T)

{

const Eigen::Matrix4d TT = (T*T0.inverse()).cast<double>().transpose();

vr.data().set_vertices(

(V.rowwise().homogeneous()*TT).rowwise().hnormalized());

vr.data().compute_normals();

};

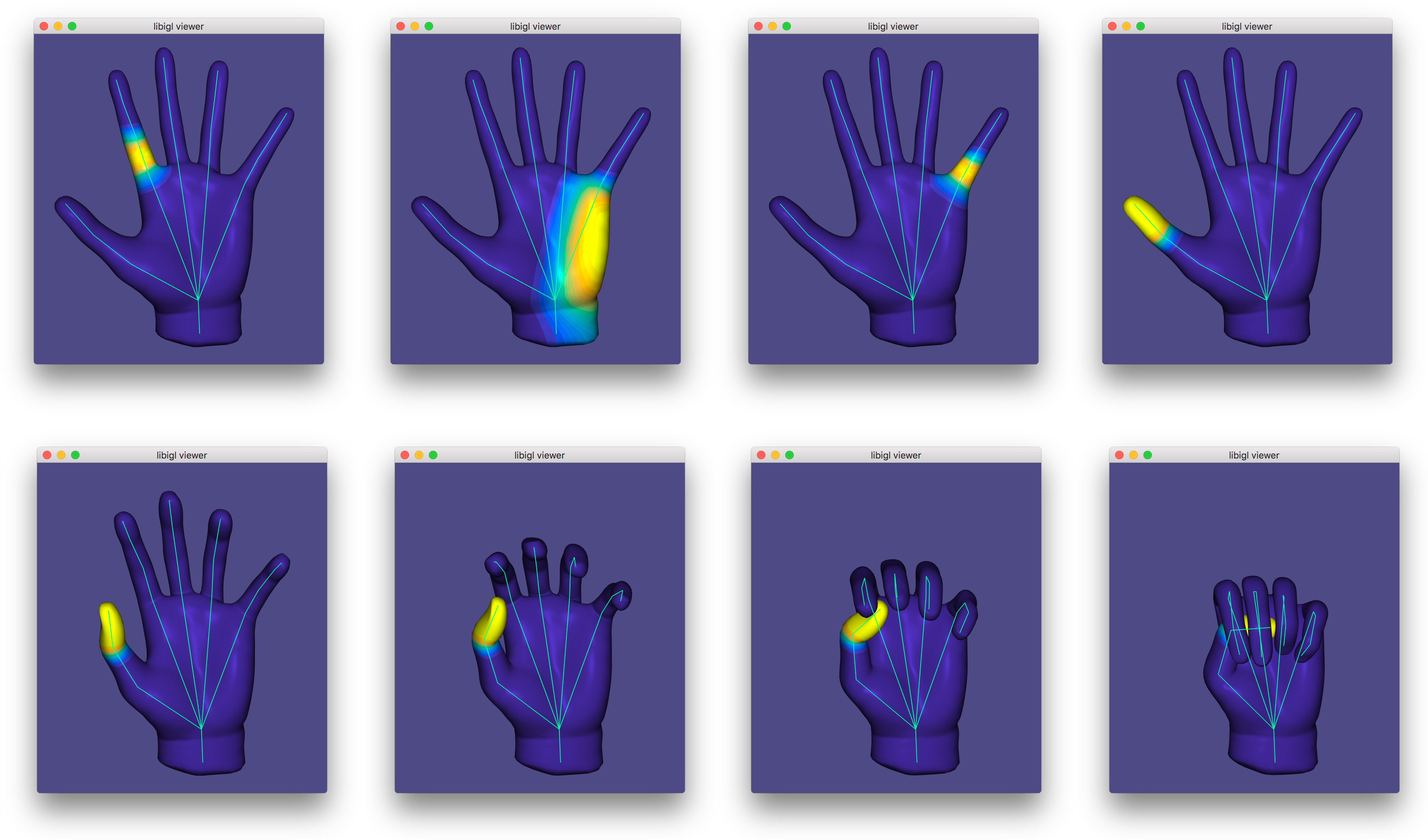

Msh Viewer¶

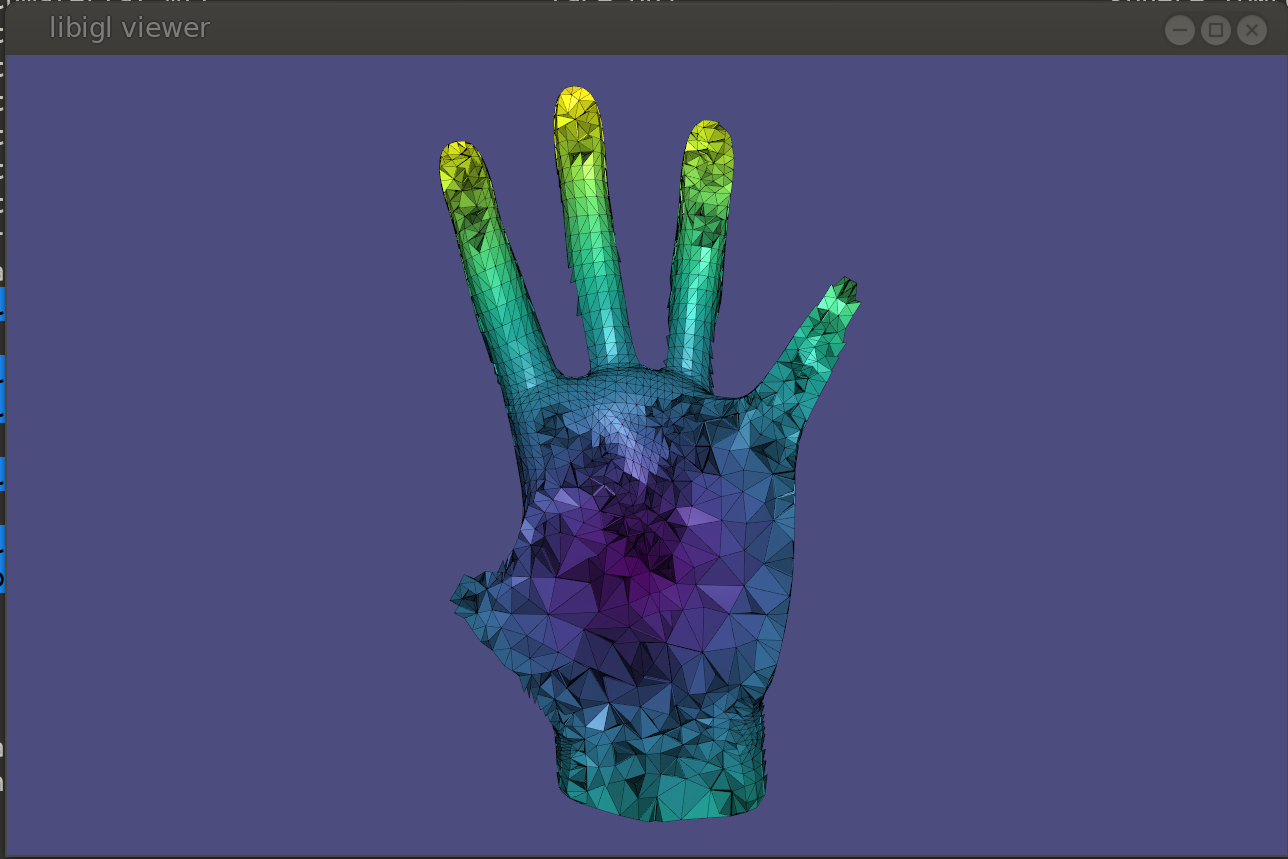

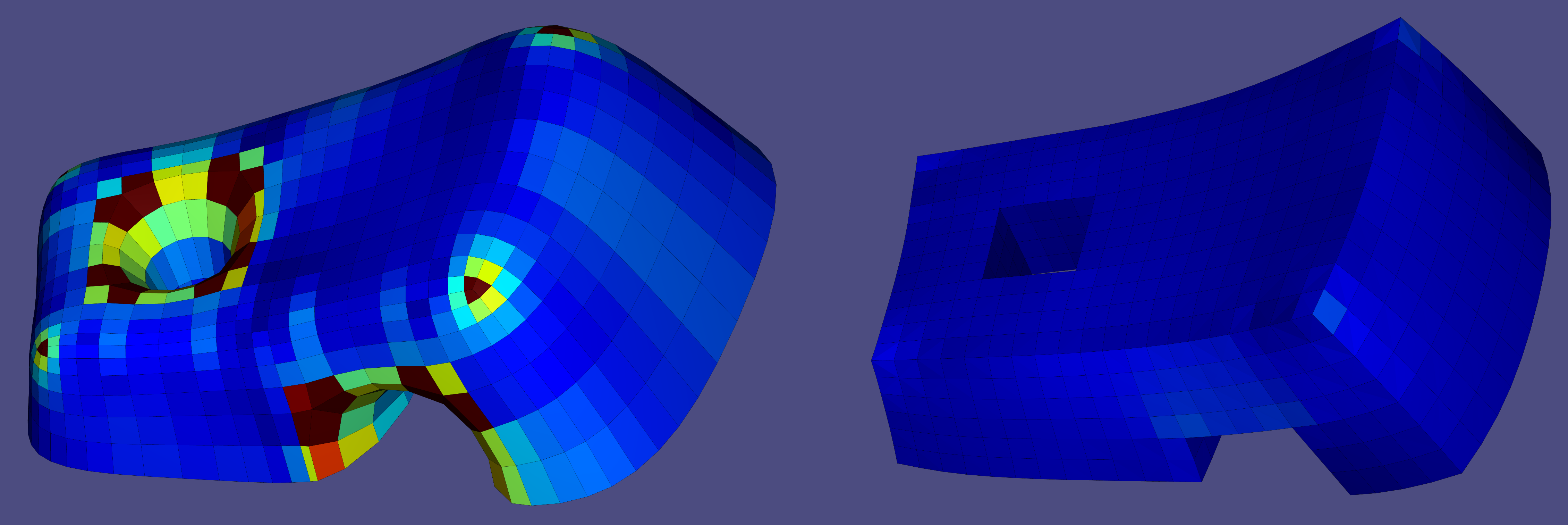

Libigl can read mixed meshes stored in Gmsh .msh version 2 file format.

These files can contain mixture of different meshes, as well as additional scalar and vector fields defined on element level and vertex level.

Eigen::MatrixXd X; // Vertex coorinates (Xx3)

Eigen::MatrixXi Tri; // Triangular elements (Yx3)

Eigen::MatrixXi Tet; // Tetrahedral elements (Zx4)

Eigen::VectorXi TriTag; // Integer tags defining triangular submeshes

Eigen::VectorXi TetTag; // Integer tags defining tetrahedral submeshes

std::vector<std::string> XFields; // headers (names) of fields defined on vertex level

std::vector<std::string> EFields; // headers (names) of fields defined on element level

std::vector<Eigen::MatrixXd> XF; // fields defined on vertex

std::vector<Eigen::MatrixXd> TriF; // fields defined on triangular elements

std::vector<Eigen::MatrixXd> TetF; // fields defined on tetrahedral elements

// loading mixed mesh from Gmsh file

igl::readMSH("hand.msh", X, Tri, Tet, TriTag, TetTag, XFields, XF, EFields, TriF, TetF);

The interactive viewer is unable to directly draw tetrahedra though. So for visualization purposes each tetrahedron can be converted to four triangles.

MatCaps¶

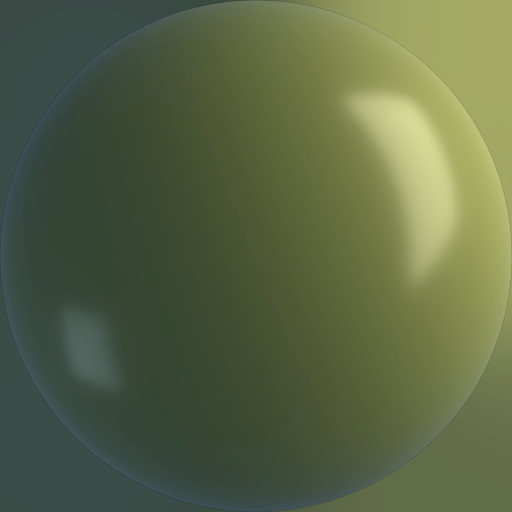

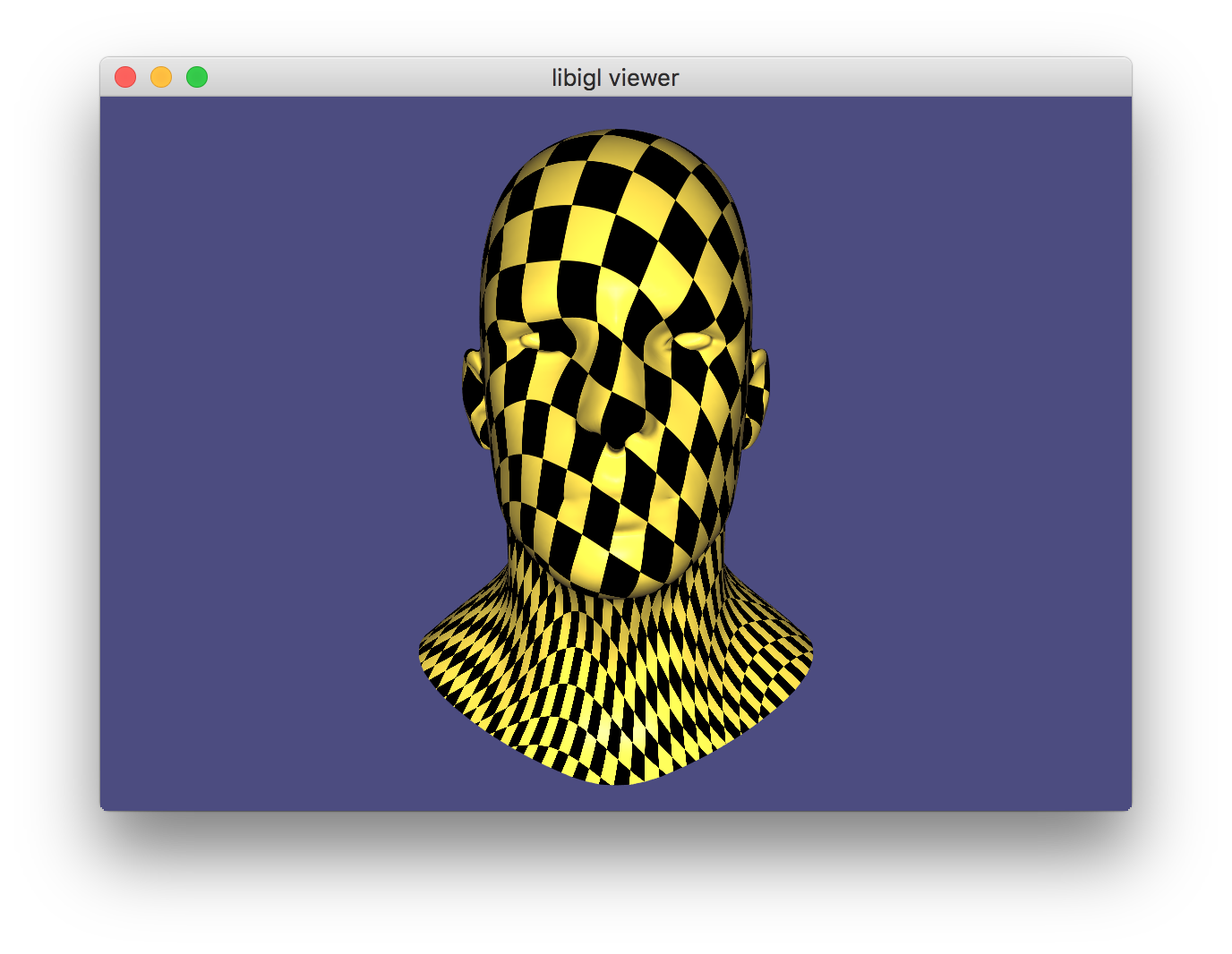

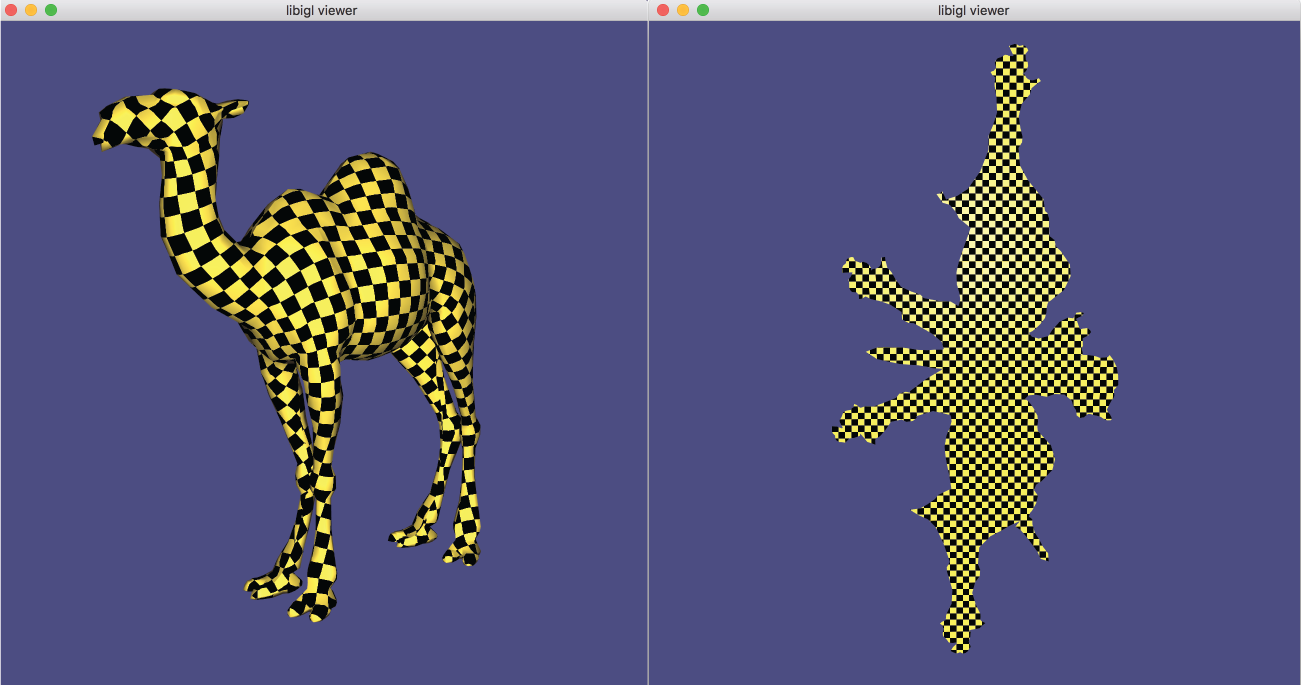

MatCaps (material captures), also known as environment maps, are a simple image-based rendering technique to achieve complex lighting without a complex shader program.

Using offline rendering or even a painting program, an image of a rendered unit sphere is created, such as this image of a sphere with a jade material viewed under studio lighting:

The position \mathbf{p} of each point on the sphere is also its unit normal vector \hat{\mathbf{n}} = \mathbf{p}. The idea of matcaps is to use this image of the sphere as a lookup table keyed on an input normal value and outputting the rgb color: I(\hat{\mathbf{n}}) \rightarrow (r,g,b).

When rendering a non-spherical shape, in the fragment shader we compute the normal vector \hat{\mathbf{n}} and then use its x- and y- components as texture coordinates to look up the corresponding point in the matcap image. In this way, there is no lighting model or lighting computation done in the fragment shader, it is simply a texture lookup, but rather than requiring a UV-mapping (parameterization) of the model, we use the per-fragment normals. By using the normal relative to the camera’s coordinate system we get view dependent complex lighting “for free”:

In libigl, if the rgba data for a matcap image is stored in R,G,B, and A

(as output, e.g., by igl::png::readPNG) then this can be attached to the

igl::opengl::ViewerData by setting it as the texture data and then turning on

matcap rendering:

viewer.data().set_texture(R,G,B,A);

viewer.data().use_matcap = true;

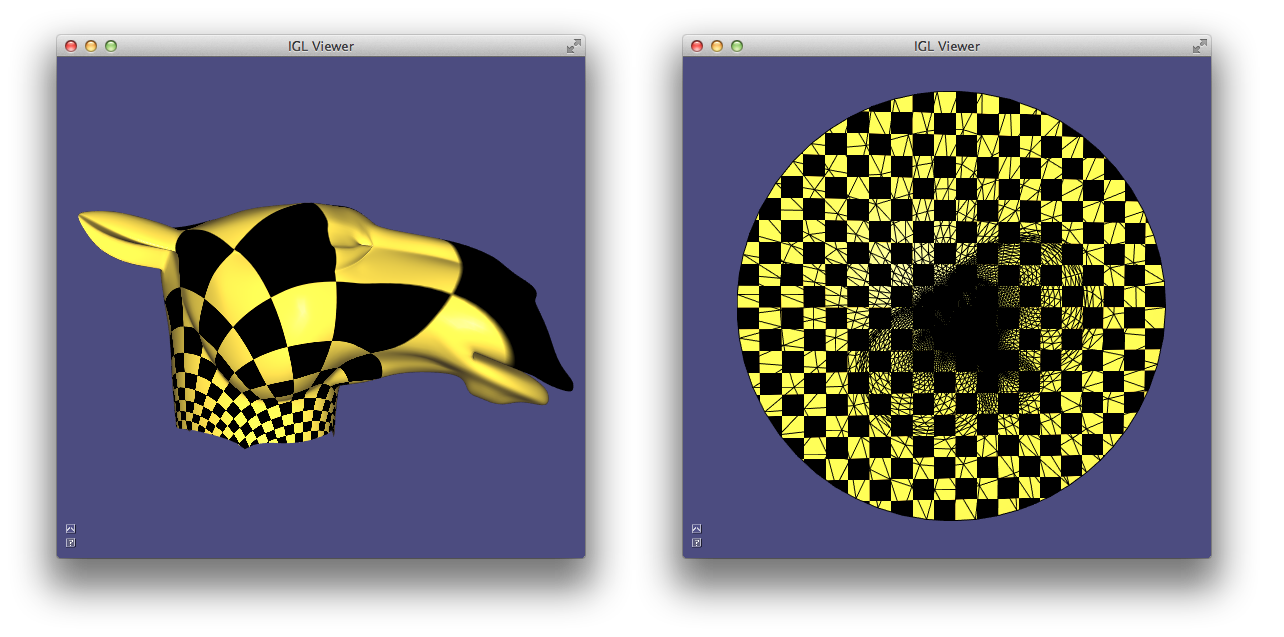

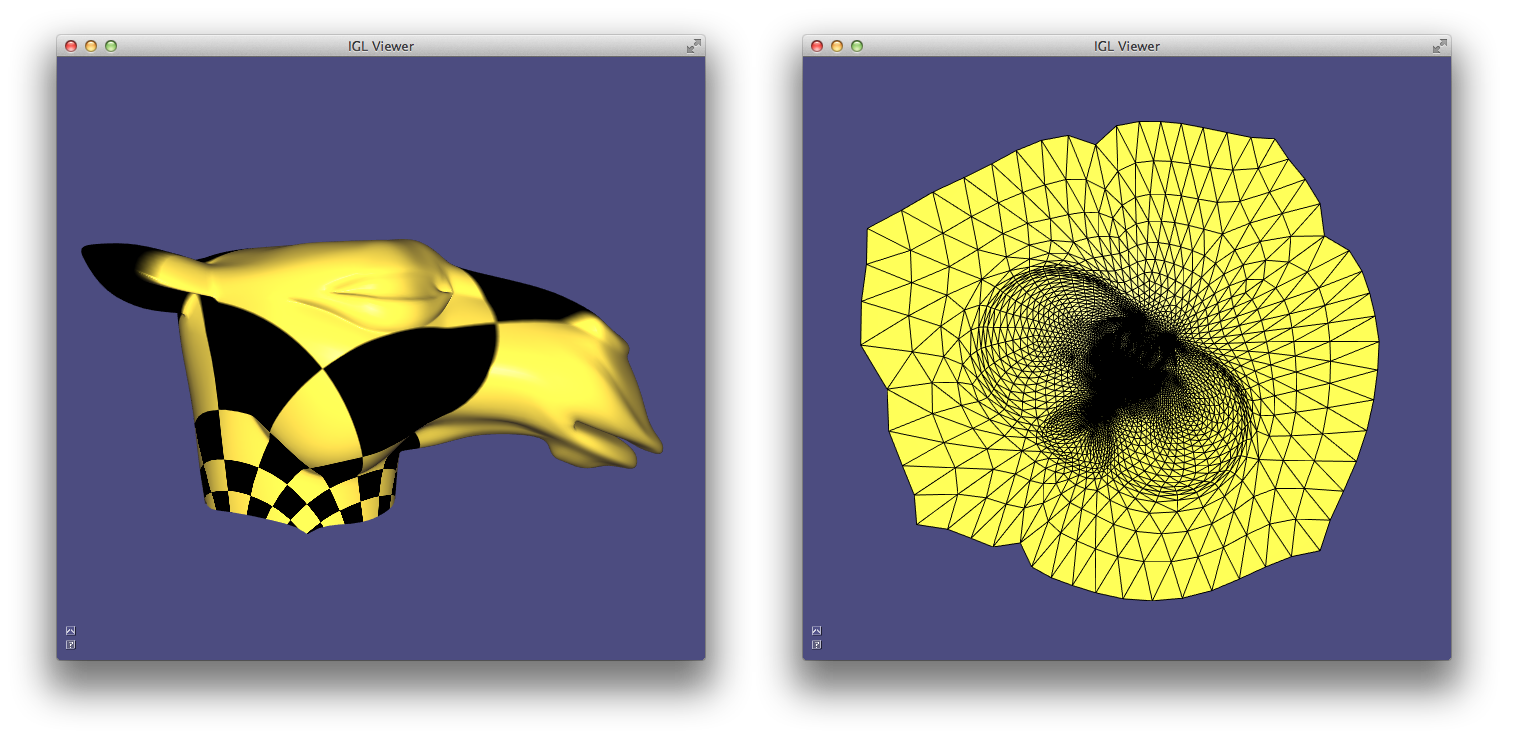

Chapter 2: Discrete Geometric Quantities And Operators¶

This chapter illustrates a few discrete quantities that libigl can compute on a mesh and the libigl functions that construct popular discrete differential geometry operators. It also provides an introduction to basic drawing and coloring routines of our viewer.

Normals¶

Surface normals are a basic quantity necessary for rendering a surface. There are a variety of ways to compute and store normals on a triangle mesh. Example 201 demonstrates how to compute and visualize normals with libigl.

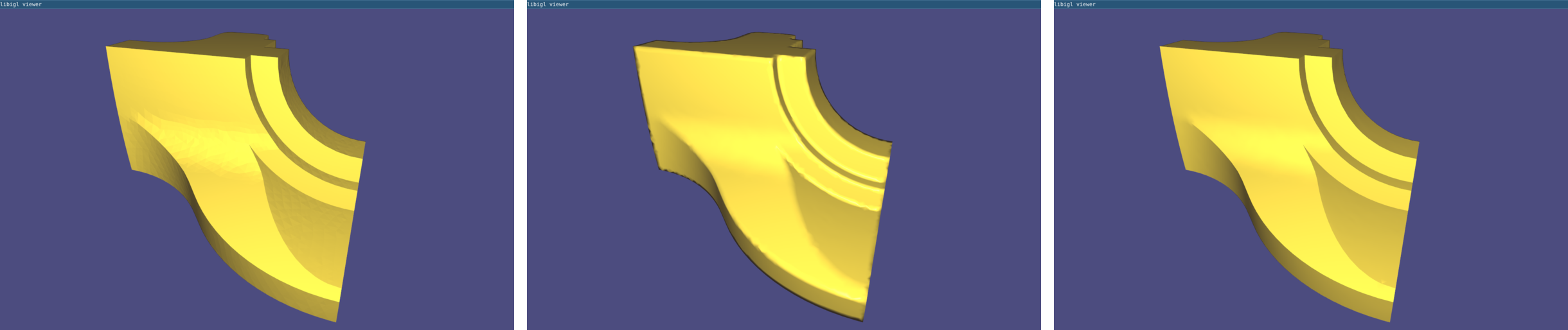

Per-face¶

Normals are well defined on each triangle of a mesh as the vector orthogonal to triangle’s plane. These piecewise-constant normals produce piecewise-flat renderings: the surface appears non-smooth and reveals its underlying discretization.

Per-vertex¶

Normals can be computed and stored on vertices, and interpolated in the interior of the triangles to produce smooth renderings (Phong shading). Most techniques for computing per-vertex normals take an average of incident face normals. The main difference between these techniques is their weighting scheme: Uniform weighting is heavily biased by the discretization choice, whereas area-based or angle-based weighting is more forgiving.

The typical half-edge style computation of area-based weights has this structure:

N.setZero(V.rows(),3);

for(int i : vertices)

{

for(face : incident_faces(i))

{

N.row(i) += face.area * face.normal;

}

}

N.rowwise().normalize();

At first glance, it might seem inefficient to loop over incident faces—and thus constructing the per-vertex normals— without using an half-edge data structure. However, per-vertex normals may be throwing each face normal to running sums on its corner vertices:

N.setZero(V.rows(),3);

for(int f = 0; f < F.rows();f++)

{

for(int c = 0; c < 3;c++)

{

N.row(F(f,c)) += area(f) * face_normal.row(f);

}

}

N.rowwise().normalize();

Per-corner¶

Storing normals per-corner is an efficient and convenient way of supporting both smooth and sharp (e.g. creases and corners) rendering. This format is common to OpenGL and the .obj mesh file format. Often such normals are tuned by the mesh designer, but creases and corners can also be computed automatically. Libigl implements a simple scheme which computes corner normals as averages of normals of faces incident on the corresponding vertex which do not deviate by more than a specified dihedral angle (e.g. 20°).

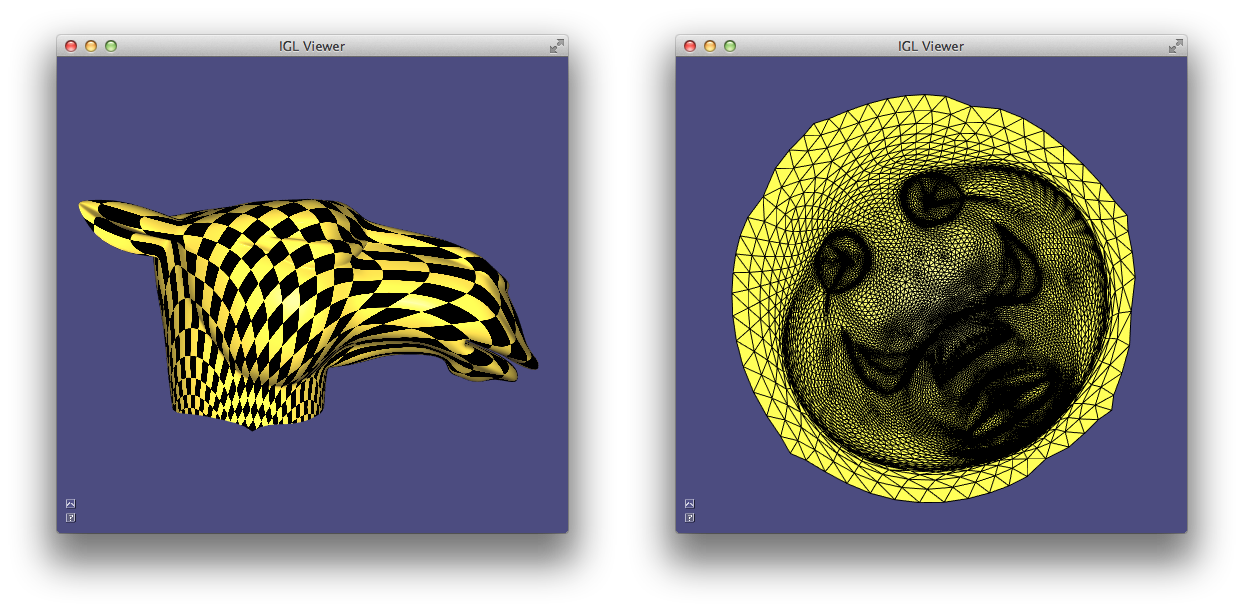

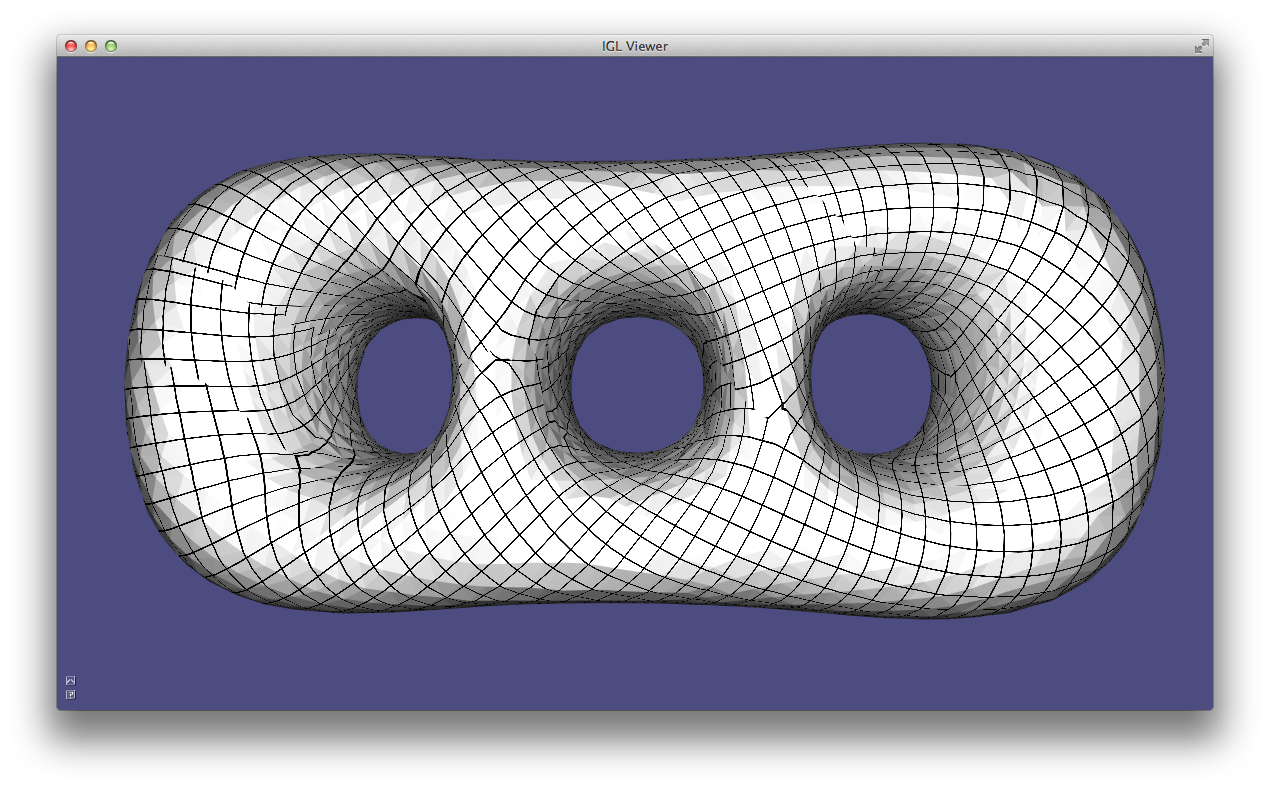

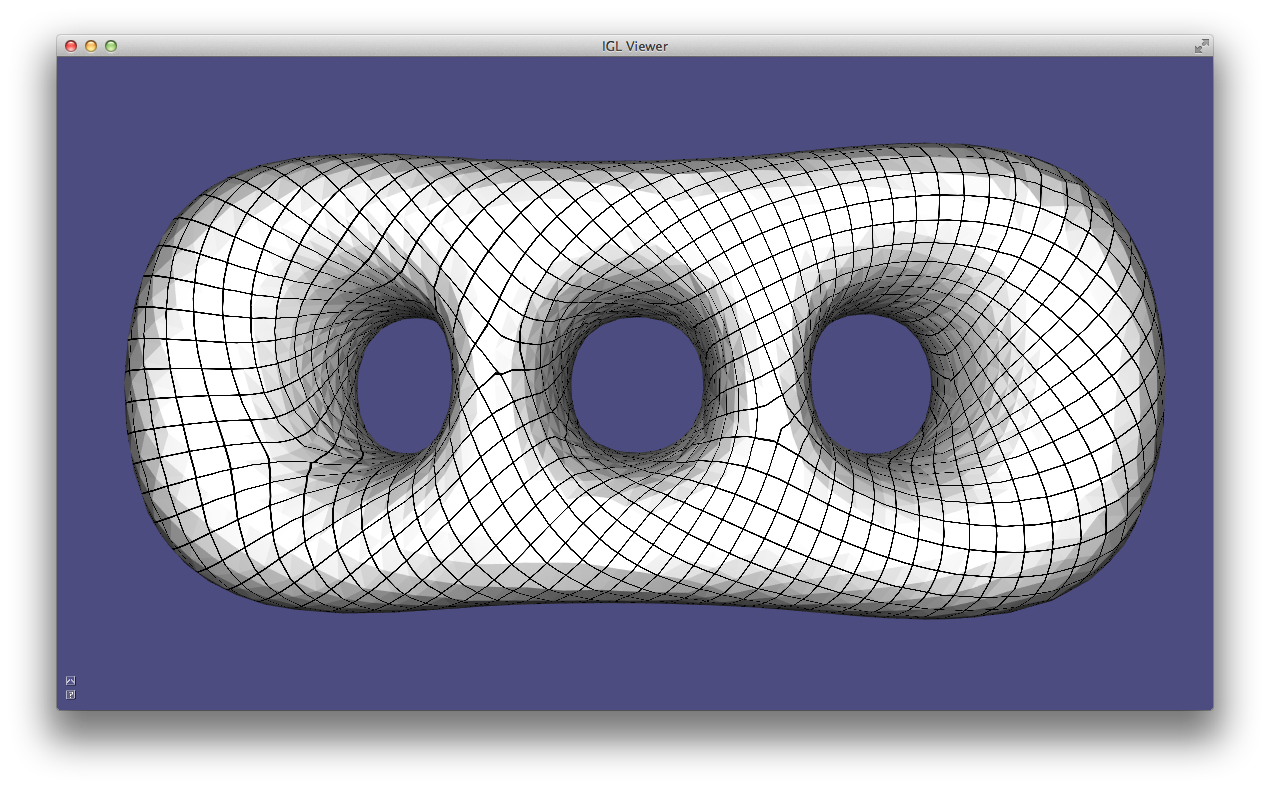

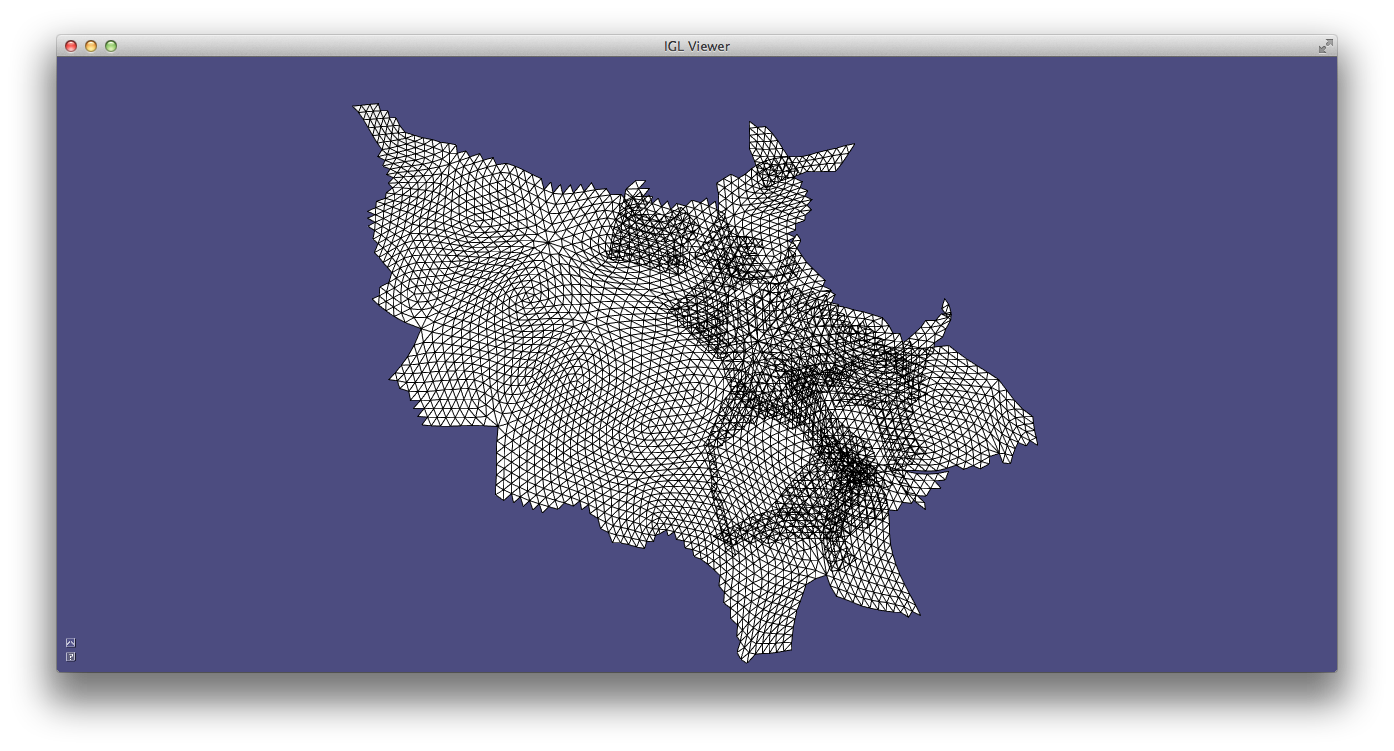

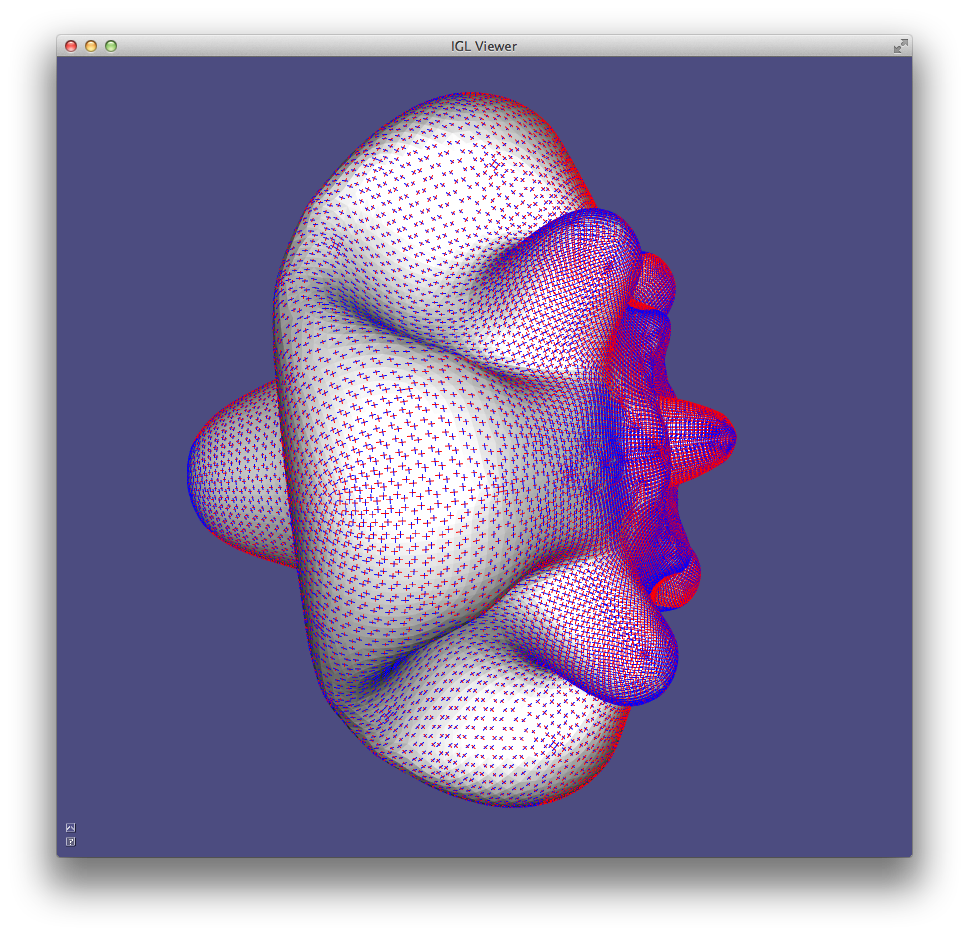

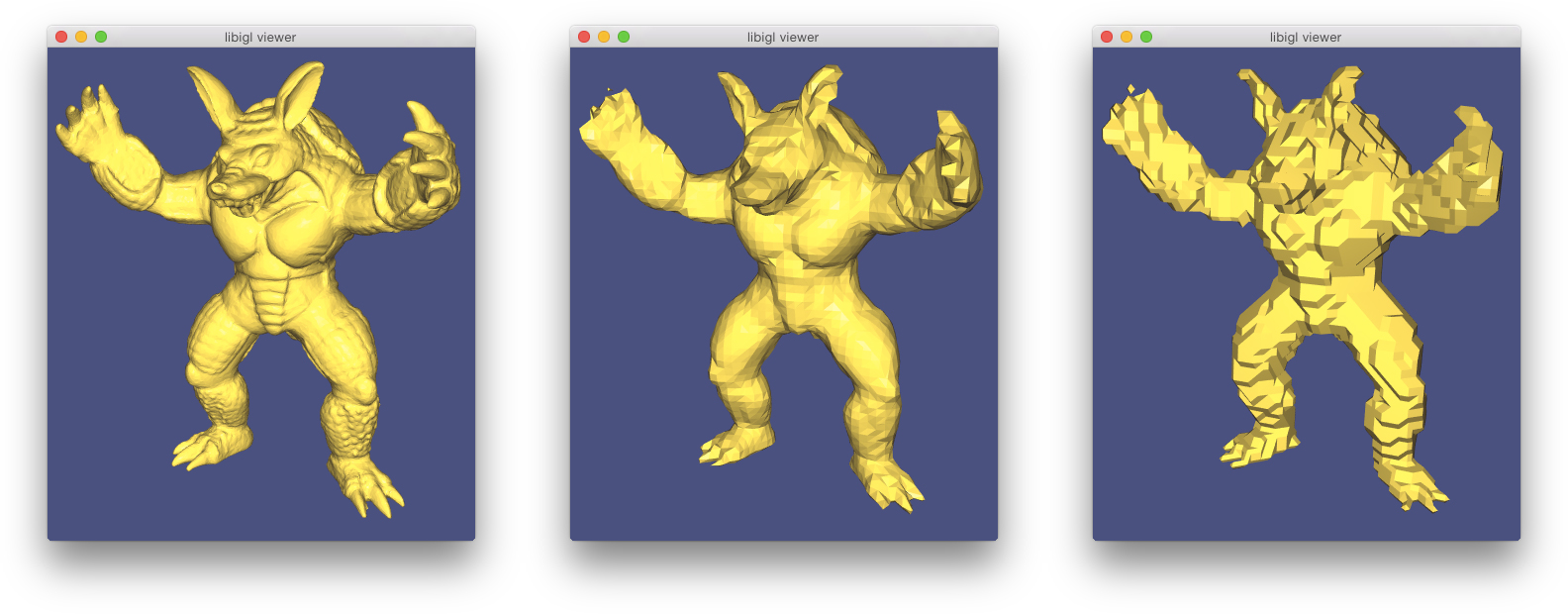

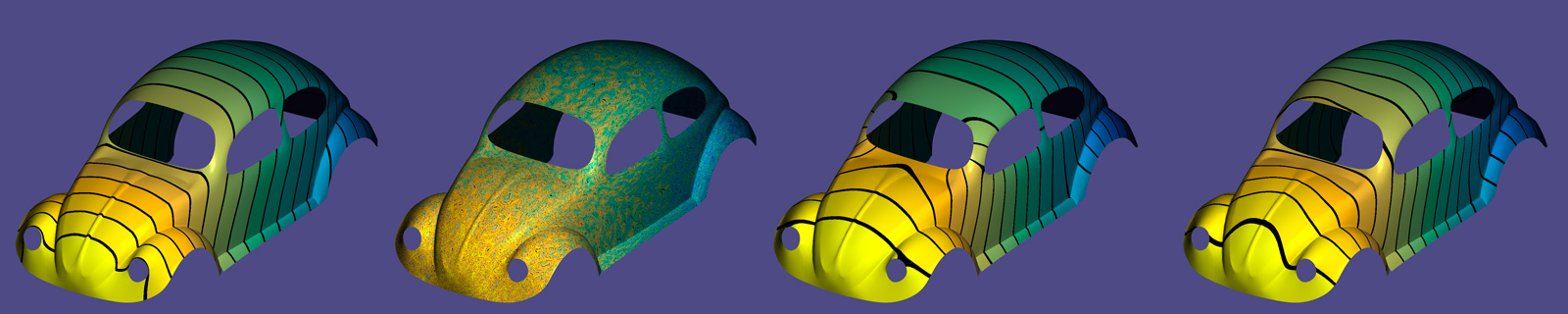

Normals example computes per-face (left), per-vertex (middle) and per-corner (right) normals

Gaussian Curvature¶

Gaussian curvature on a continuous surface is defined as the product of the principal curvatures:

k_G = k_1 k_2.

As an intrinsic measure, it depends on the metric and not the surface’s embedding.

Intuitively, Gaussian curvature tells how locally spherical or elliptic the surface is ( k_G>0 ), how locally saddle-shaped or hyperbolic the surface is ( k_G<0 ), or how locally cylindrical or parabolic ( k_G=0 ) the surface is.

In the discrete setting, one definition for a “discrete Gaussian curvature” on a triangle mesh is via a vertex’s angular deficit:

k_G(v_i) = 2π - \sum\limits_{j\in N(i)}θ_{ij},

where N(i) are the triangles incident on vertex i and θ_{ij} is the angle at vertex i in triangle j 3.

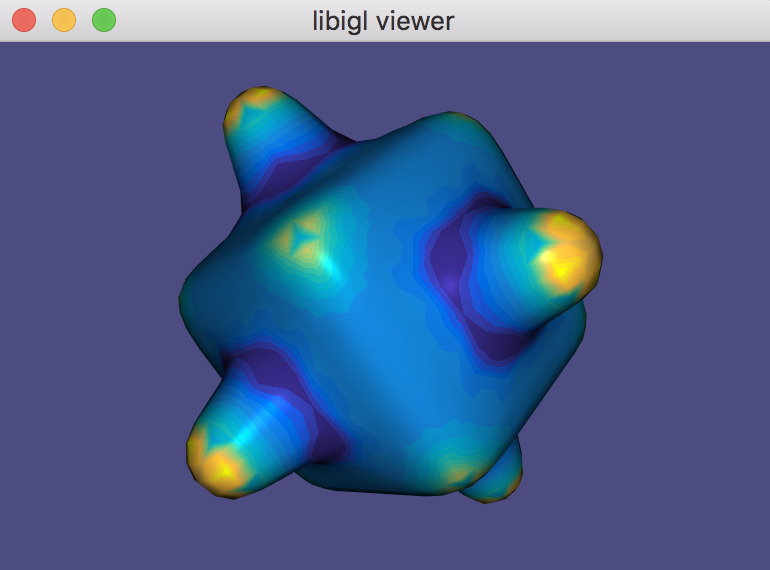

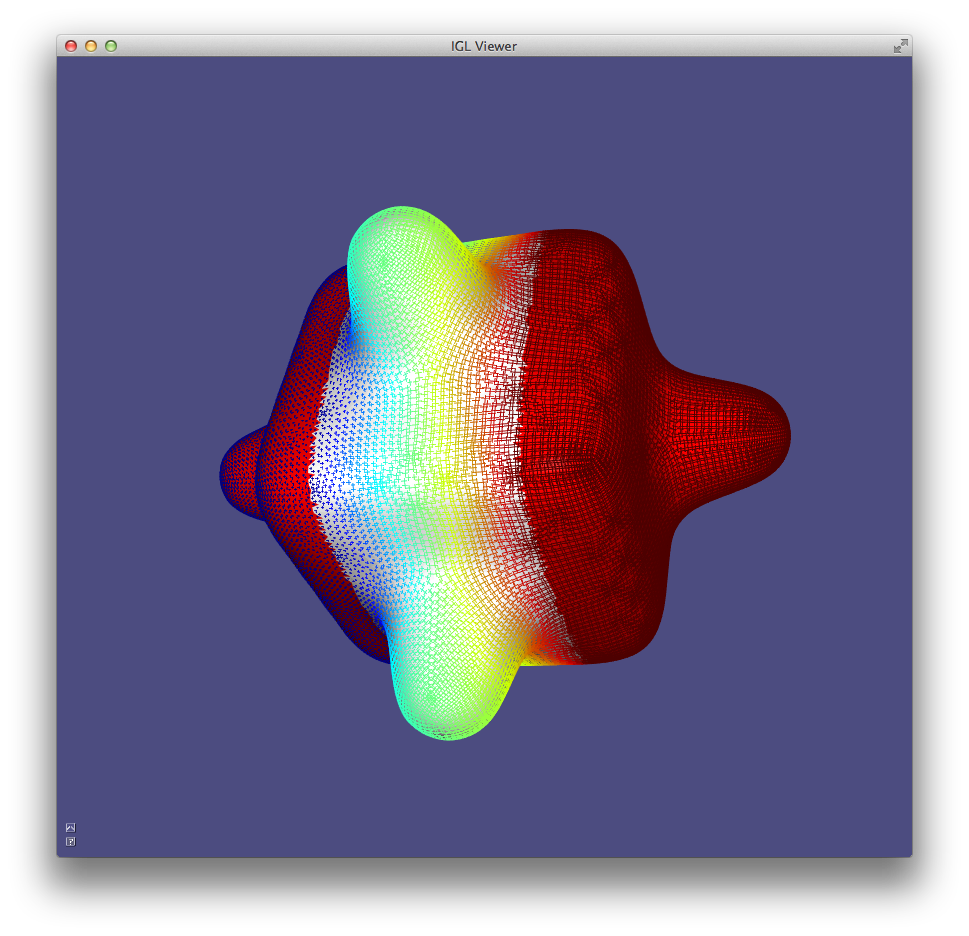

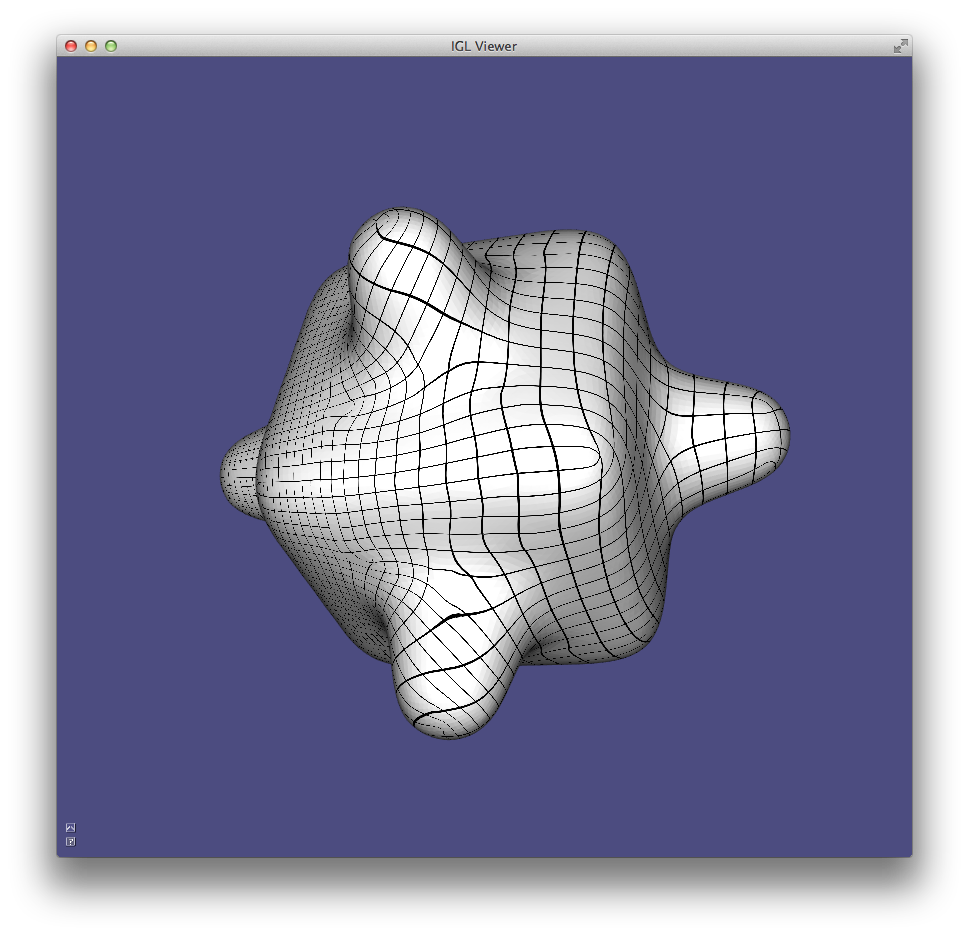

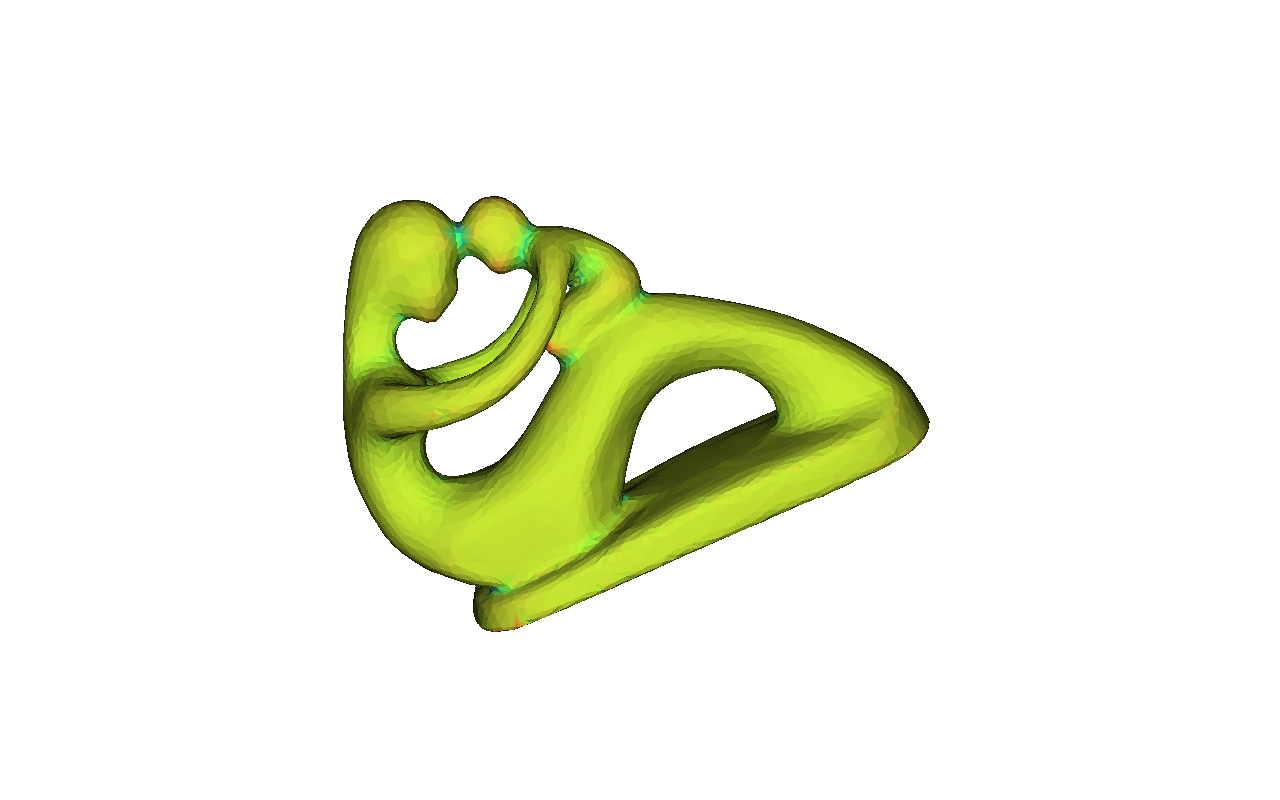

Just like the continuous analog, our discrete Gaussian curvature reveals elliptic, hyperbolic and parabolic vertices on the domain, as demonstrated in Example 202.

GaussianCurvature example computes discrete Gaussian curvature and visualizes it in pseudocolor.

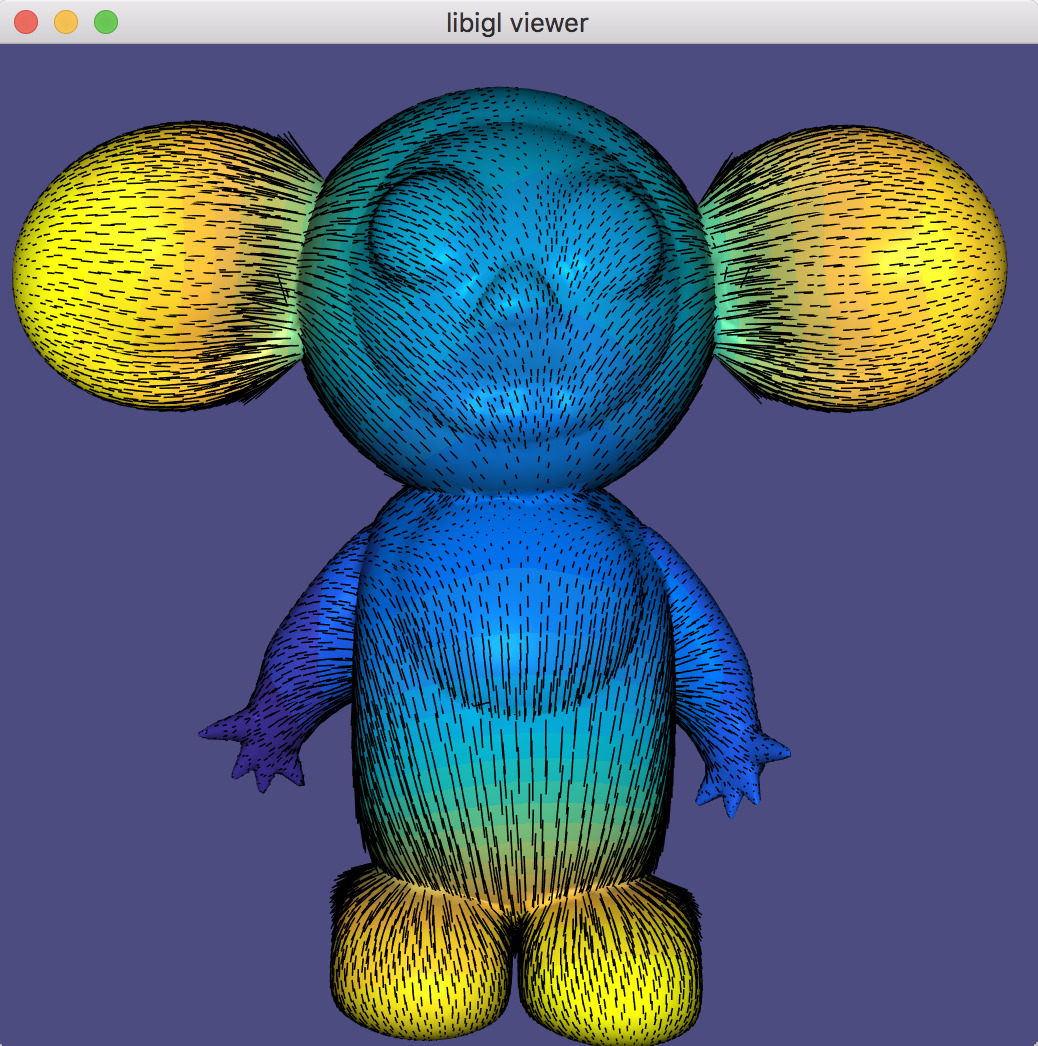

Curvature Directions¶

The two principal curvatures (k_1,k_2) at a point on a surface measure how much the surface bends in different directions. The directions of maximum and minimum (signed) bending are called principal directions and are always orthogonal.

Mean curvature is defined as the average of principal curvatures:

H = \frac{1}{2}(k_1 + k_2).

One way to extract mean curvature is by examining the Laplace-Beltrami operator applied to the surface positions. The result is a so-called mean-curvature normal:

-\Delta \mathbf{x} = H \mathbf{n}.

It is easy to compute this on a discrete triangle mesh in libigl using the cotangent Laplace-Beltrami operator 3.

#include <igl/cotmatrix.h>

#include <igl/massmatrix.h>

#include <igl/invert_diag.h>

...

MatrixXd HN;

SparseMatrix<double> L,M,Minv;

igl::cotmatrix(V,F,L);

igl::massmatrix(V,F,igl::MASSMATRIX_TYPE_VORONOI,M);

igl::invert_diag(M,Minv);

HN = -Minv*(L*V);

H = HN.rowwise().norm(); //up to sign

Combined with the angle defect definition of discrete Gaussian curvature, one can define principal curvatures and use least squares fitting to find directions 3.

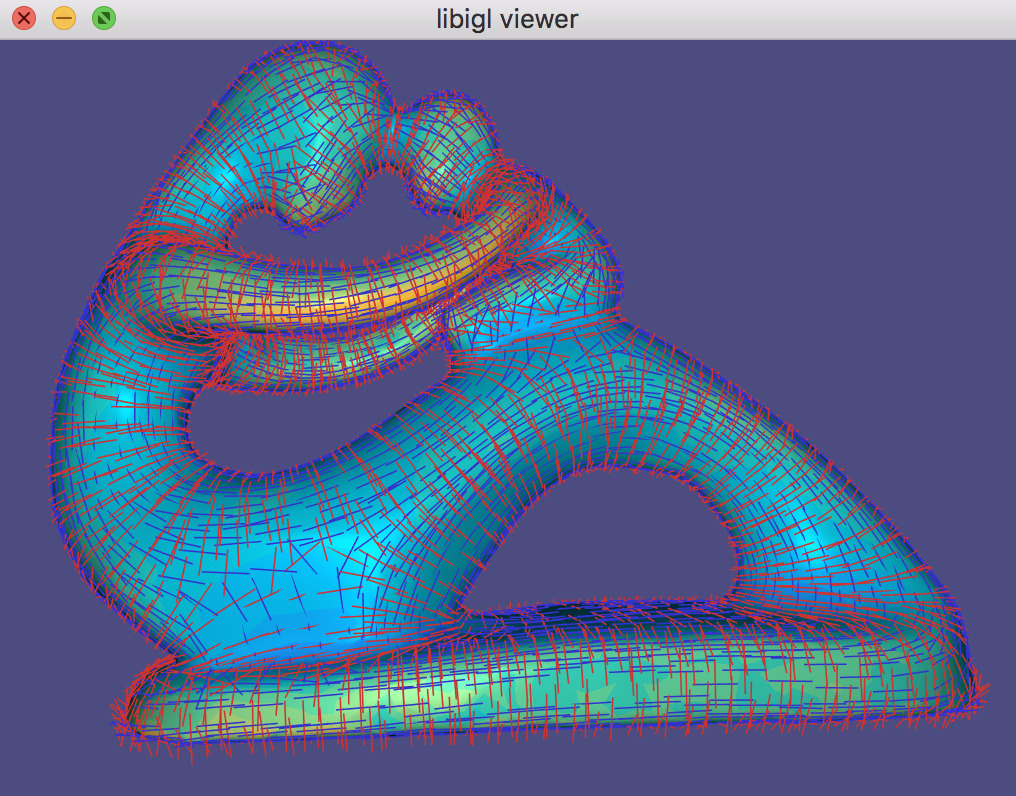

Alternatively, a robust method for determining principal curvatures is via quadric fitting 5. In the neighborhood around every vertex, a best-fit quadric is found and principal curvature values and directions are analytically computed on this quadric (Example 203).

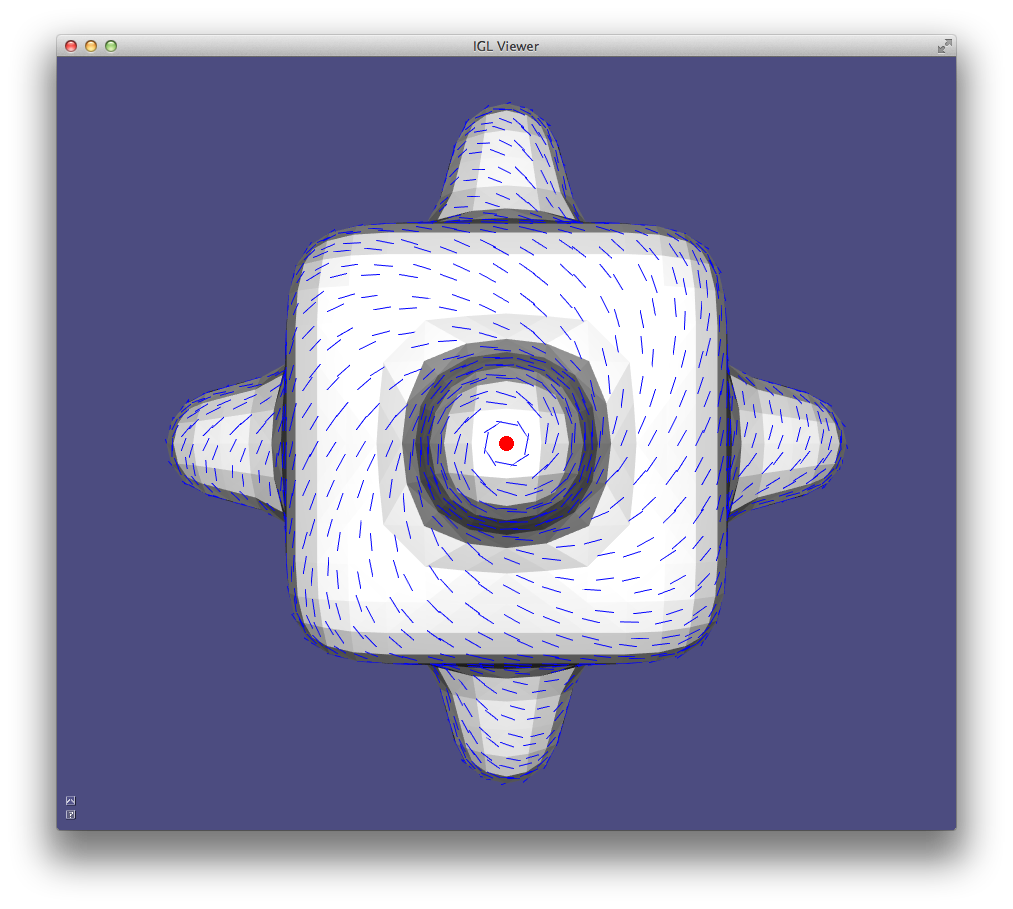

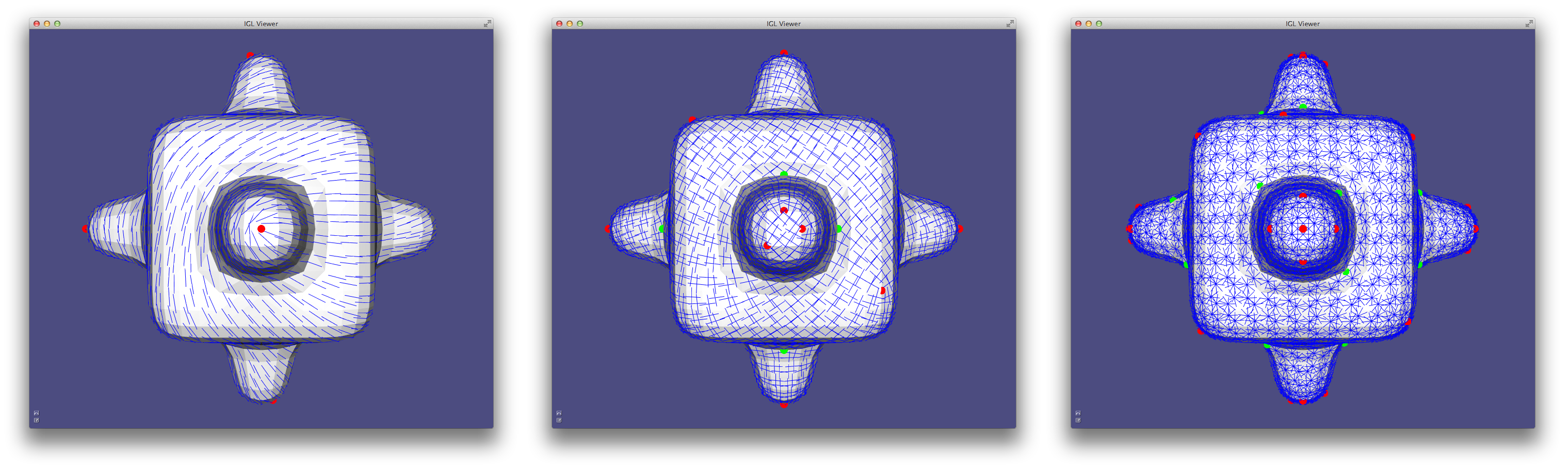

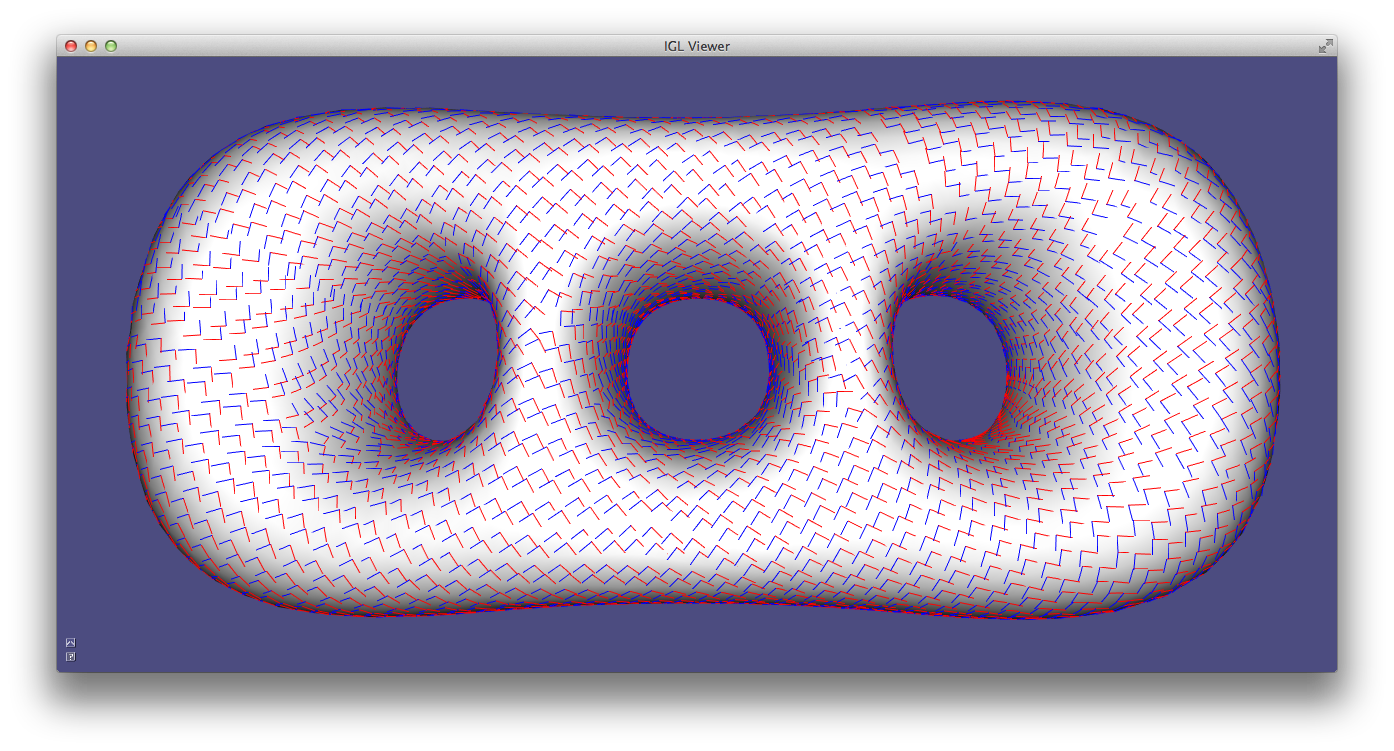

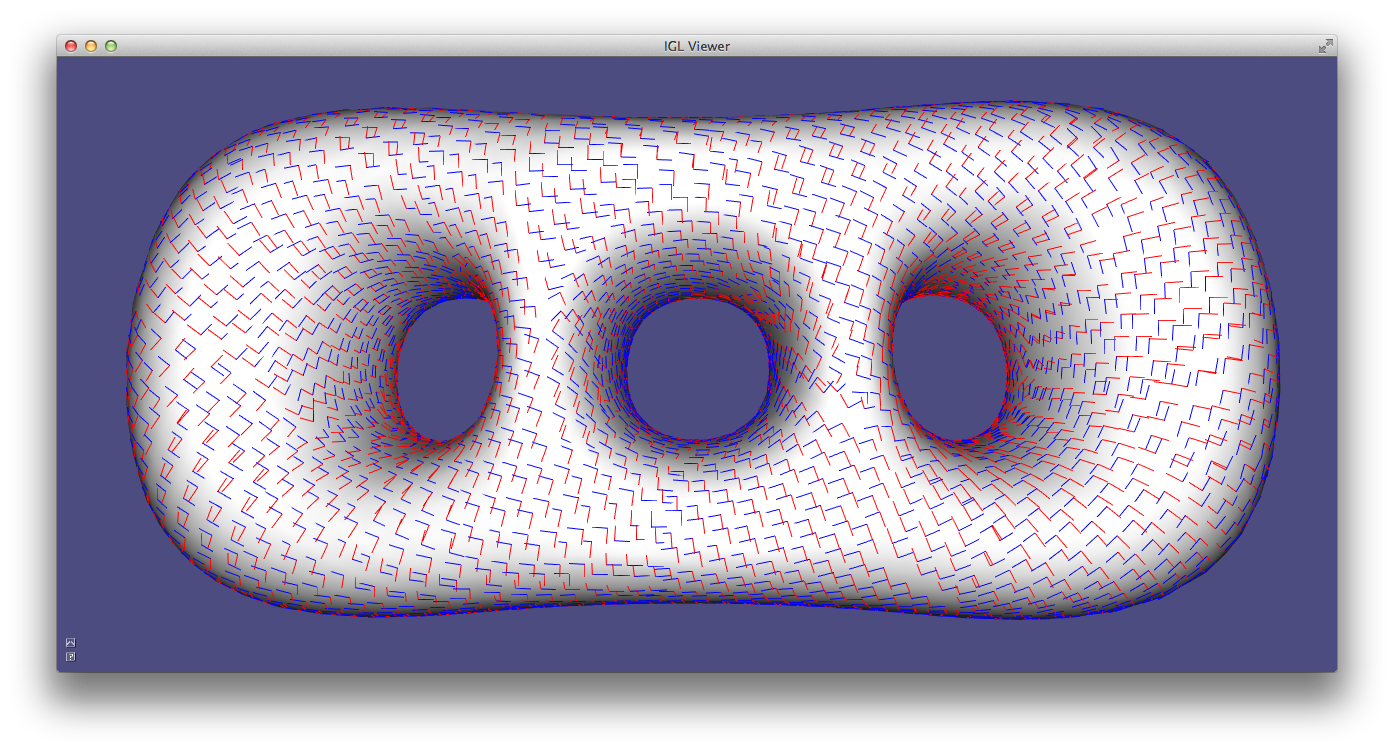

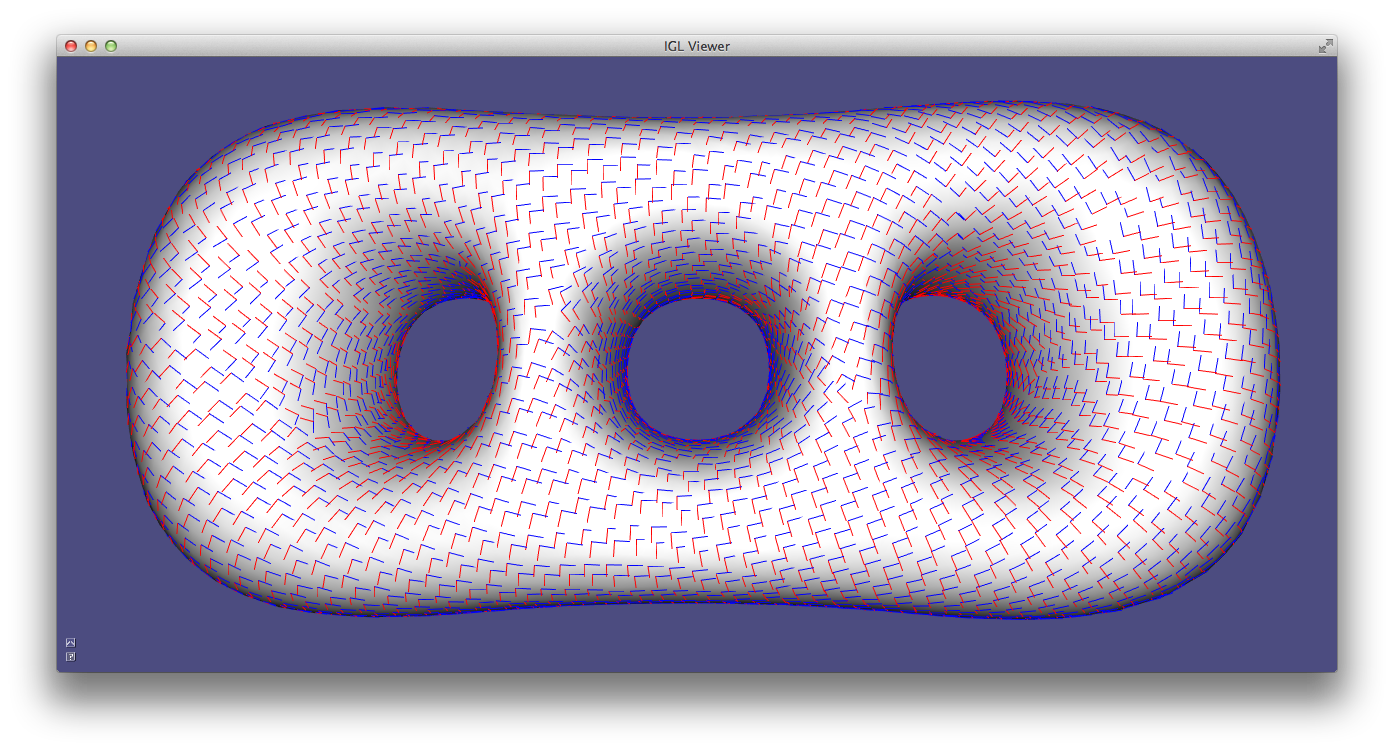

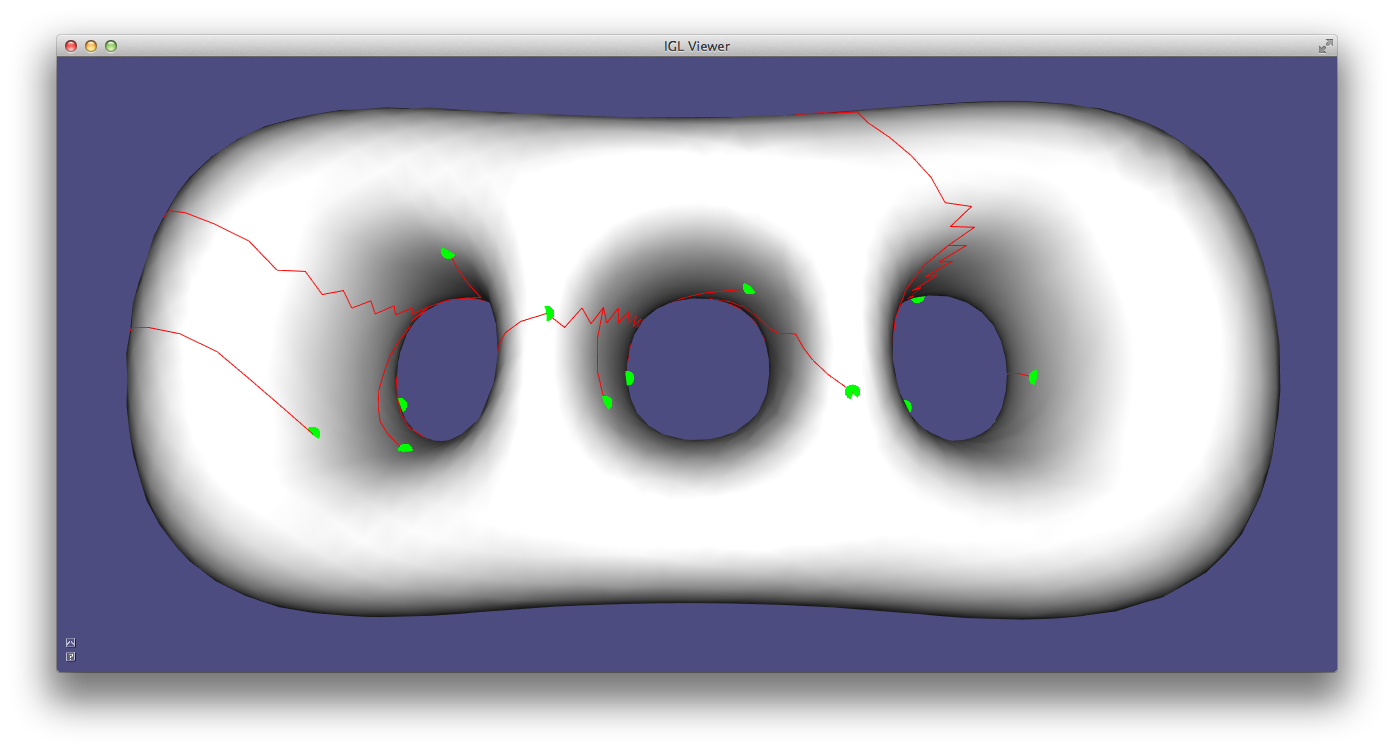

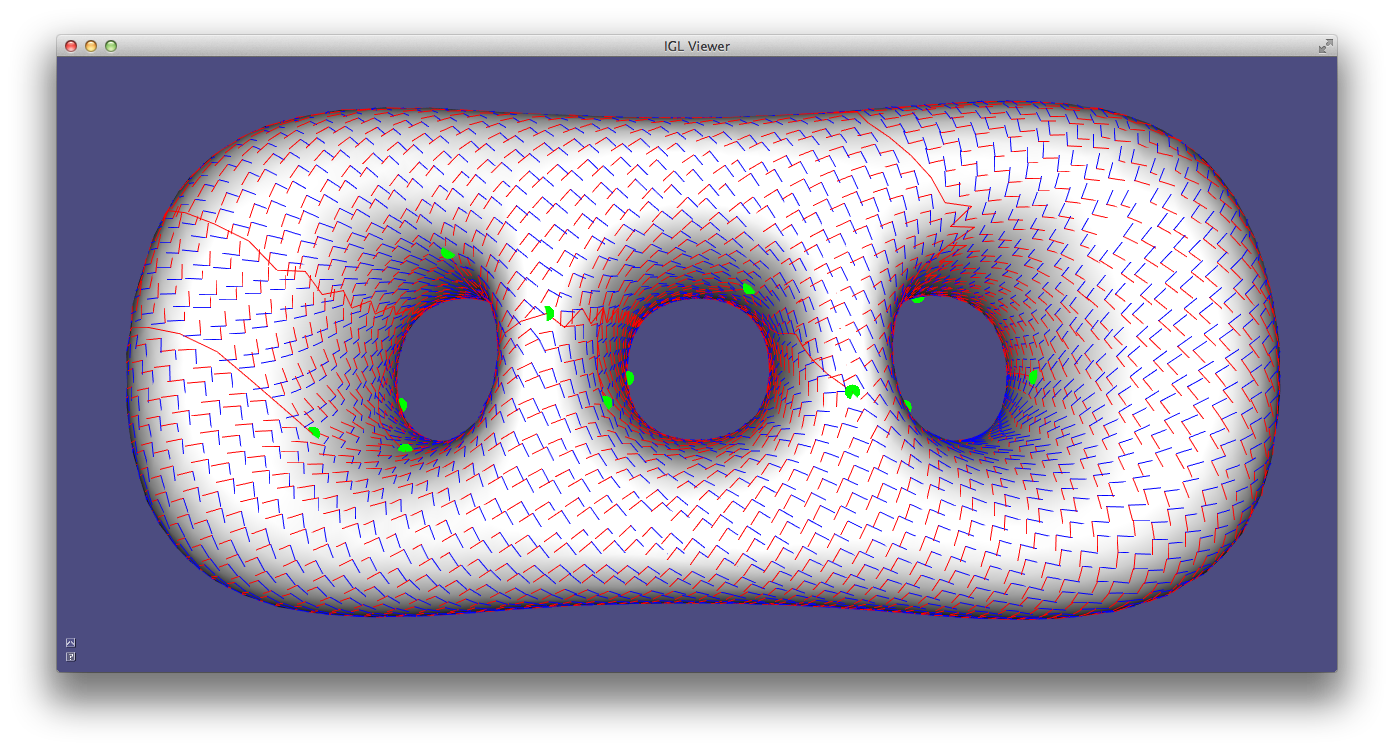

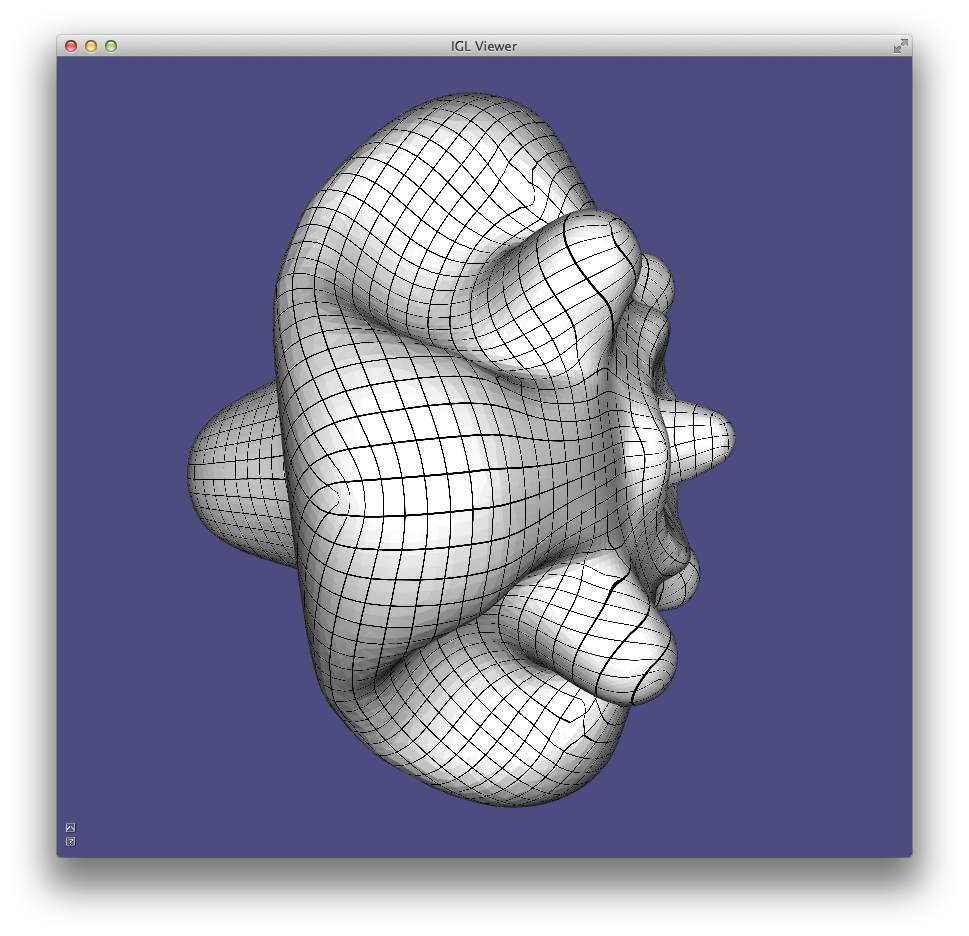

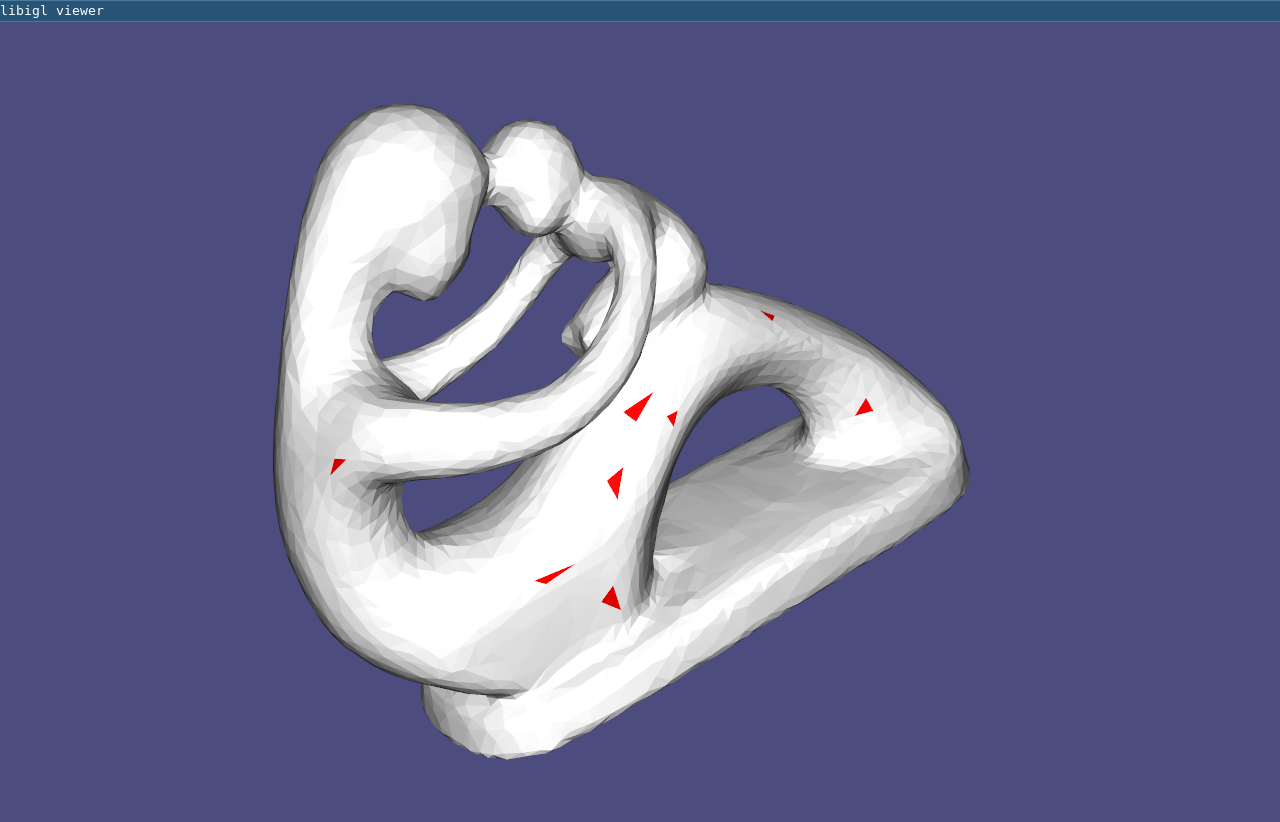

CurvatureDirections example computes principal curvatures via quadric fitting and visualizes mean curvature in pseudocolor and principal directions with a cross field.

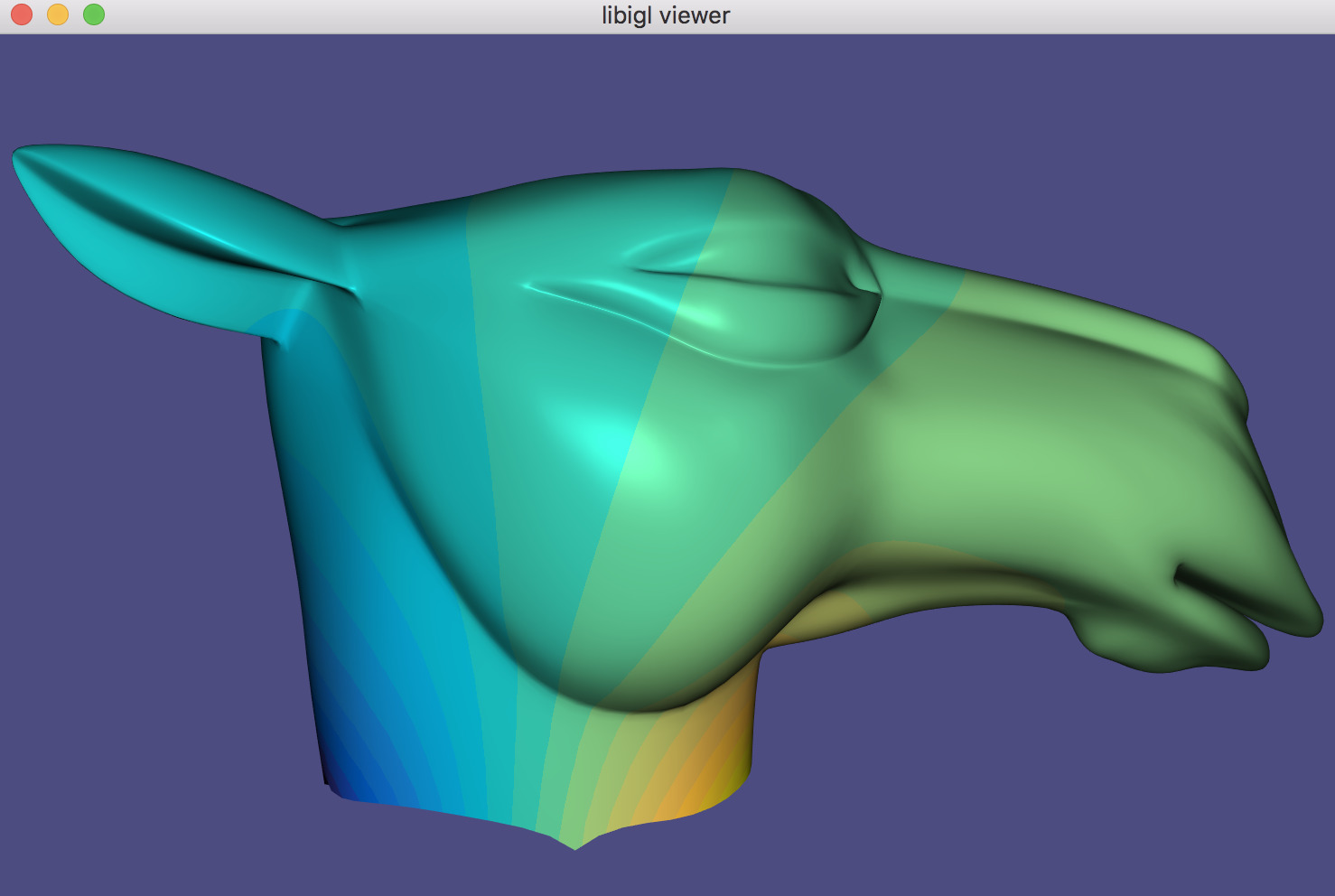

Gradient¶

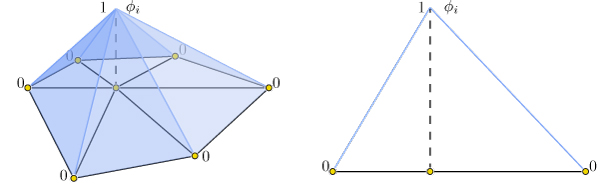

Scalar functions on a surface can be discretized as a piecewise linear function with values defined at each mesh vertex:

f(\mathbf{x}) \approx \sum\limits_{i=1}^n \phi_i(\mathbf{x})\, f_i,

where \phi_i is a piecewise linear hat function defined by the mesh so that for each triangle \phi_i is the linear function which is one only at vertex i and zero at the other corners.

Thus gradients of such piecewise linear functions are simply sums of gradients of the hat functions:

\nabla f(\mathbf{x}) \approx \nabla \sum\limits_{i=1}^n \phi_i(\mathbf{x})\, f_i = \sum\limits_{i=1}^n \nabla \phi_i(\mathbf{x})\, f_i.

This reveals that the gradient is a linear function of the vector of f_i values. Because the \phi_i are linear in each triangle, their gradients are constant in each triangle. Thus our discrete gradient operator can be written as a matrix multiplication taking vertex values to triangle values:

\nabla f \approx \mathbf{G}\,\mathbf{f},

where \mathbf{f} is n\times 1 and \mathbf{G} is an md\times n sparse

matrix. This matrix \mathbf{G} can be derived geometrically, e.g.

ch. 21.

Libigl’s grad function computes \mathbf{G} for

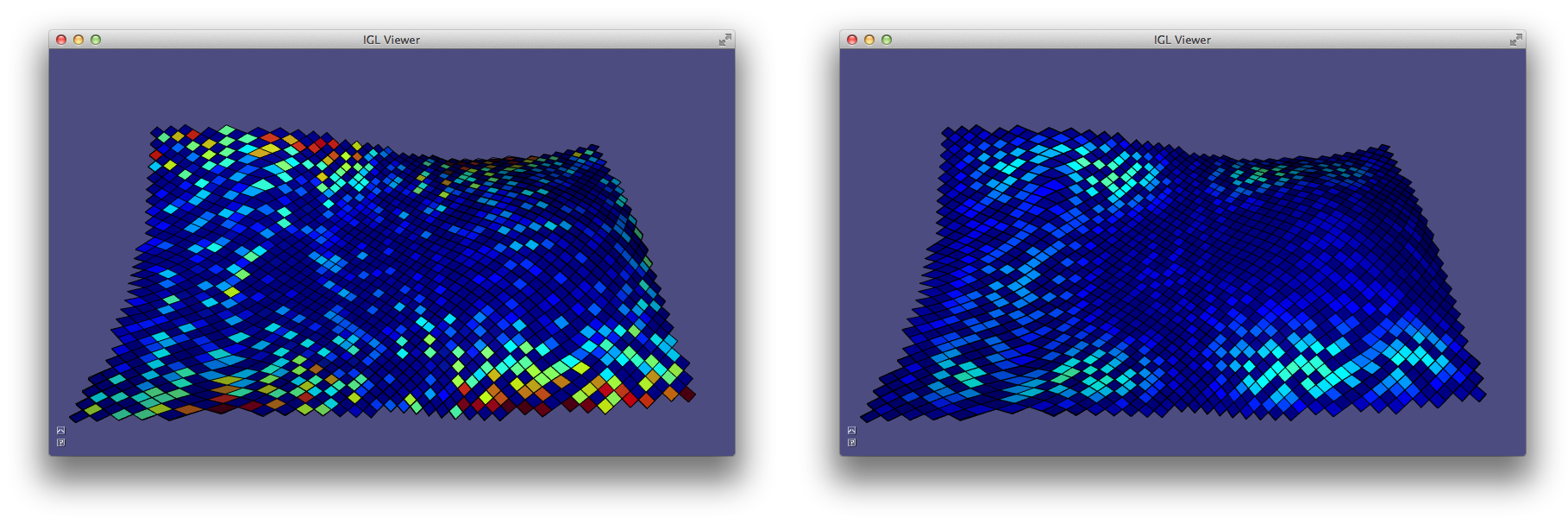

triangle and tetrahedral meshes (Example 204):

Gradient example computes gradients of an input function on a mesh and visualizes the vector field.

Laplacian¶

The discrete Laplacian is an essential geometry processing tool. Many interpretations and flavors of the Laplace and Laplace-Beltrami operator exist.

In open Euclidean space, the Laplace operator is the usual divergence of gradient (or equivalently the Laplacian of a function is the trace of its Hessian):

\Delta f = \frac{\partial^2 f}{\partial x^2} + \frac{\partial^2 f}{\partial y^2} + \frac{\partial^2 f}{\partial z^2}.

The Laplace-Beltrami operator generalizes this to surfaces.

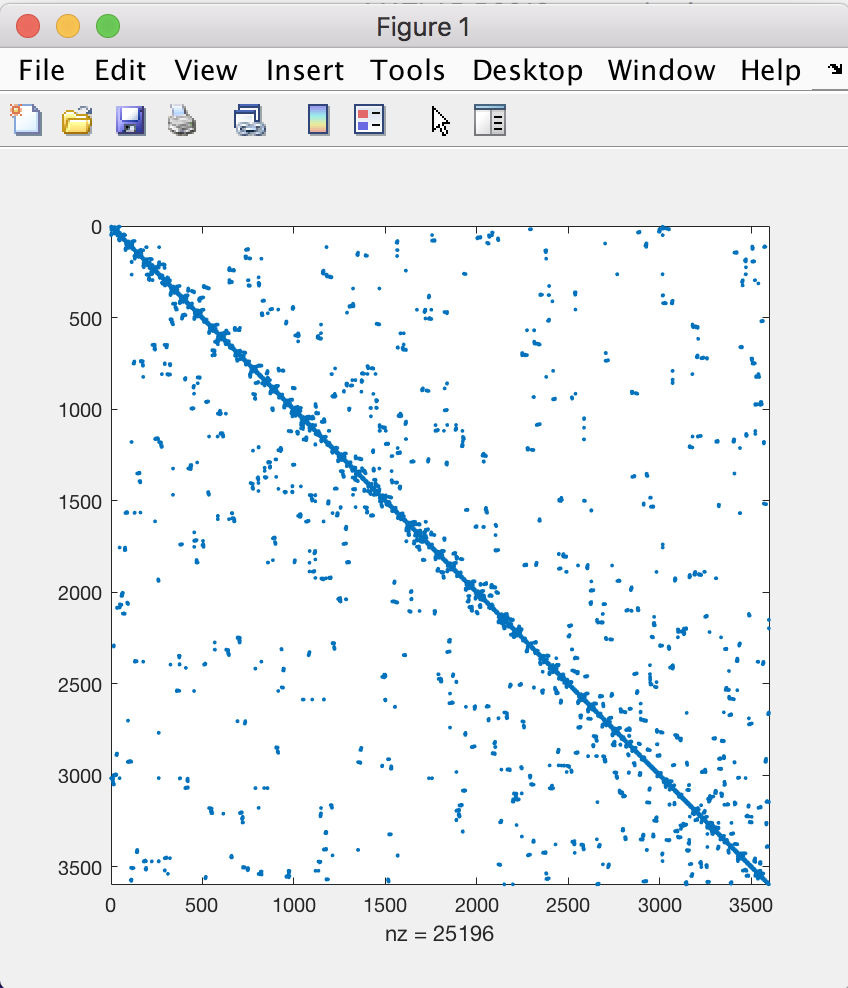

When considering piecewise-linear functions on a triangle mesh, a discrete Laplacian may be derived in a variety of ways. The most popular in geometry processing is the so-called ``cotangent Laplacian’’ \mathbf{L}, arising simultaneously from FEM, DEC and applying divergence theorem to vertex one-rings. As a linear operator taking vertex values to vertex values, the Laplacian \mathbf{L} is a n\times n matrix with elements:

L_{ij} = \begin{cases}j \in N(i) &\cot \alpha_{ij} + \cot \beta_{ij},\\ j \notin N(i) & 0,\\ i = j & -\sum\limits_{k\neq i} L_{ik}, \end{cases}

where N(i) are the vertices adjacent to (neighboring) vertex i, and \alpha_{ij},\beta_{ij} are the angles opposite to edge {ij}. This formula leads to a typical half-edge style implementation for constructing \mathbf{L}:

for(int i : vertices)

{

for(int j : one_ring(i))

{

for(int k : triangle_on_edge(i,j))

{

L(i,j) += cot(angle(i,j,k));

L(i,i) -= cot(angle(i,j,k));

}

}

}

Similarly as before, it may seem to loop over one-rings without having an half-edge data structure. However, this is not the case, since the Laplacian may be built by summing together contributions for each triangle, much in spirit with its FEM discretization of the Dirichlet energy (sum of squared gradients):

for(triangle t : triangles)

{

for(edge i,j : t)

{

L(i,j) += cot(angle(i,j,k));

L(j,i) += cot(angle(i,j,k));

L(i,i) -= cot(angle(i,j,k));

L(j,j) -= cot(angle(i,j,k));

}

}

Libigl implements discrete “cotangent” Laplacians for triangles meshes and tetrahedral meshes, building both with fast geometric rules rather than “by the book” FEM construction which involves many (small) matrix inversions, cf. 6.

The operator applied to mesh vertex positions amounts to smoothing by flowing the surface along the mean curvature normal direction (Example 205). Note that this is equivalent to minimizing surface area.

Laplacian example computes conformalized mean curvature flow using the cotangent Laplacian 2.

Mass Matrix¶

The mass matrix \mathbf{M} is another n \times n matrix which takes vertex values to vertex values. From an FEM point of view, it is a discretization of the inner-product: it accounts for the area around each vertex. Consequently, \mathbf{M} is often a diagonal matrix, such that M_{ii} is the barycentric or voronoi area around vertex i in the mesh 3. The inverse of this matrix is also very useful as it transforms integrated quantities into point-wise quantities, e.g.:

\Delta f \approx \mathbf{M}^{-1} \mathbf{L} \mathbf{f}.

In general, when encountering squared quantities integrated over the surface, the mass matrix will be used as the discretization of the inner product when sampling function values at vertices:

\int_S x\, y\ dA \approx \mathbf{x}^T\mathbf{M}\,\mathbf{y}.

An alternative mass matrix \mathbf{T} is a md \times md matrix which takes triangle vector values to triangle vector values. This matrix represents an inner-product accounting for the area associated with each triangle (i.e. the triangles true area).

Alternative Construction Of Laplacian¶

An alternative construction of the discrete cotangent Laplacian is by “squaring” the discrete gradient operator. This may be derived by applying Green’s identity (ignoring boundary conditions for the moment):

\int_S \|\nabla f\|^2 dA = \int_S f \Delta f dA

Or in matrix form which is immediately translatable to code:

\mathbf{f}^T \mathbf{G}^T \mathbf{T} \mathbf{G} \mathbf{f} = \mathbf{f}^T \mathbf{M} \mathbf{M}^{-1} \mathbf{L} \mathbf{f} = \mathbf{f}^T \mathbf{L} \mathbf{f}.

So we have that \mathbf{L} = \mathbf{G}^T \mathbf{T} \mathbf{G}. This also hints that we may consider \mathbf{G}^T as a discrete divergence operator, since the Laplacian is the divergence of the gradient. Naturally, \mathbf{G}^T is a n \times md sparse matrix which takes vector values stored at triangle faces to scalar divergence values at vertices.

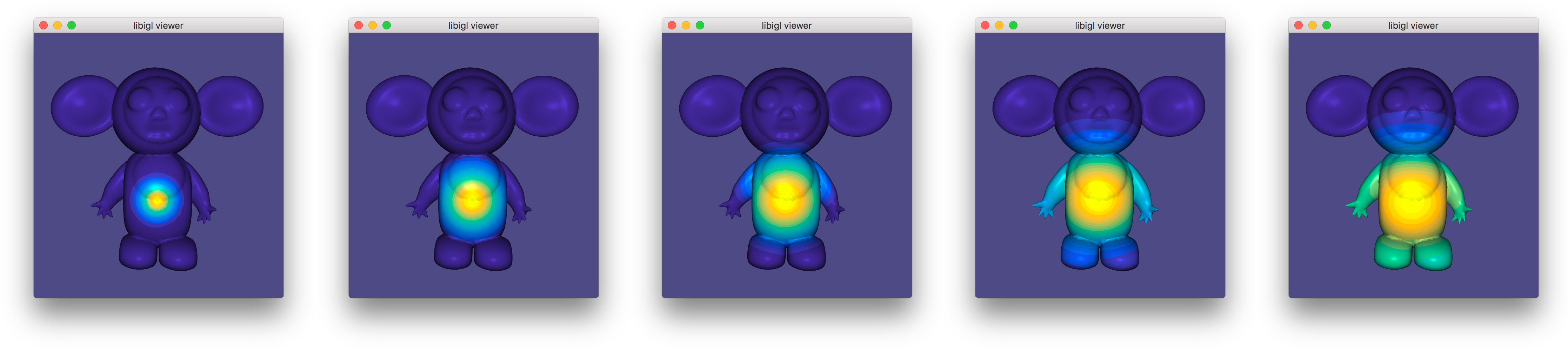

Exact Discrete Geodesic Distances¶

The discrete geodesic distance between two points is the length of the shortest path between then restricted to the surface. For triangle meshes, such a path is made of a set of segments which can be either edges of the mesh or crossing a triangle.

Libigl includes a wrapper for the exact geodesic algorithm 4 developed by Danil Kirsanov (https://code.google.com/archive/p/geodesic/), exposing it through an Eigen-based API. The function

igl::exact_geodesic(V,F,VS,FS,VT,FT,d);

vid, to all vertices of F you can use:

Eigen::VectorXi VS,FS,VT,FT;

// The selected vertex is the source

VS.resize(1);

VS << vid;

// All vertices are the targets

VT.setLinSpaced(V.rows(),0,V.rows()-1);

Eigen::VectorXd d;

igl::exact_geodesic(V,F,VS,FS,VT,FT,d);

allows to interactively pick the source vertex and displays the distance using a periodic color pattern.](images/geodesicdistance.jpg)

Chapter 3: Matrices And Linear Algebra¶

Libigl relies heavily on the Eigen library for dense and sparse linear algebra routines. Besides geometry processing routines, libigl has linear algebra routines which bootstrap Eigen and make it feel even more similar to a high-level algebra library such as Matlab.

Slice¶

A very familiar and powerful routine in Matlab is array slicing. This allows reading from or writing to a possibly non-contiguous sub-matrix. Let’s consider the Matlab code:

B = A(R,C);

If A is a m \times n matrix and R is a j-long list of row-indices

(between 1 and m) and C is a k-long list of column-indices, then as a

result B will be a j \times k matrix drawing elements from A according to

R and C. In libigl, the same functionality is provided by the slice

function (Example 301):

VectorXi R,C;

MatrixXd A,B;

...

igl::slice(A,R,C,B);

Note that A and B could also be sparse matrices.

Similarly, consider the Matlab code:

A(R,C) = B;

Now, the selection is on the left-hand side so the j \times k matrix B is

being written into the submatrix of A determined by R and C. This

functionality is provided in libigl using slice_into:

igl::slice_into(B,R,C,A);

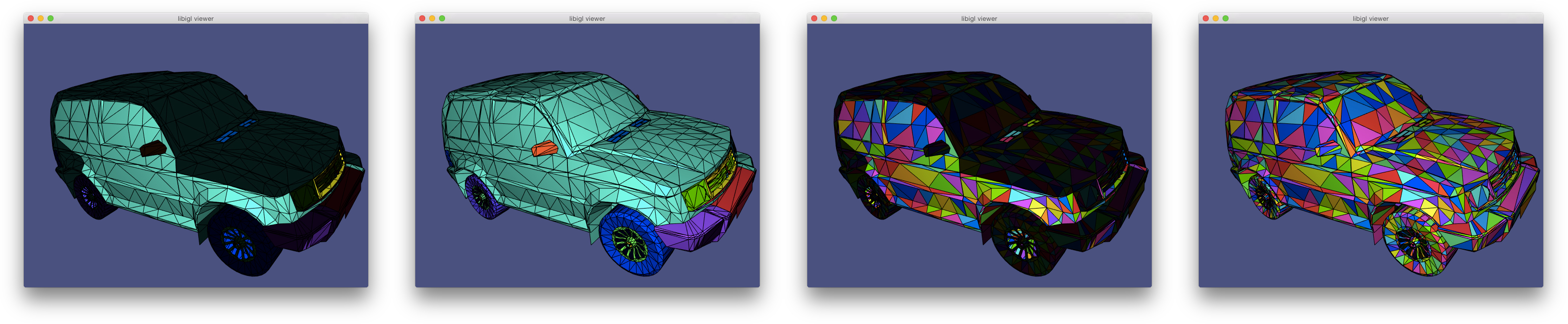

Slice shows how to use igl::slice to change the colors for triangles on a mesh.

Sort¶

Matlab and other higher-level languages make it very easy to extract indices of sorting and comparison routines. For example in Matlab, one can write:

[Y,I] = sort(X,1,'ascend');

so if X is a m \times n matrix then Y will also be an m \times n matrix

with entries sorted along dimension 1 in 'ascend'ing order. The second

output I is a m \times n matrix of indices such that Y(i,j) =

X(I(i,j),j);. That is, I reveals how X is sorted into Y.

This same functionality is supported in libigl:

igl::sort(X,1,true,Y,I);

Similarly, sorting entire rows can be accomplished in Matlab using:

[Y,I] = sortrows(X,'ascend');

where now I is a m vector of indices such that Y = X(I,:).

In libigl, this is supported with

igl::sortrows(X,true,Y,I);

I reveals the index of sort so that it can be reproduced with

igl::slice(X,I,1,Y).

Analogous functions are available in libigl for: max, min, and unique.

Sort shows how to use igl::sortrows to pseudocolor triangles according to their barycenters’ sorted order (Example 302).

Other Matlab-style Functions¶

Libigl implements a variety of other routines with the same api and functionality as common Matlab functions.

| Name | Description |

|---|---|

igl::all |

Whether all elements are non-zero (true) |

igl::any |

Whether any elements are non-zero (true) |

igl::cat |

Concatenate two matrices (especially useful for dealing with Eigen sparse matrices) |

igl::ceil |

Round entries up to nearest integer |

igl::cumsum |

Cumulative sum of matrix elements |

igl::colon |

Act like Matlab’s :, similar to Eigen’s LinSpaced |

igl::components |

Connected components of graph (cf. Matlab’s graphconncomp) |

igl::count |

Count non-zeros in rows or columns |

igl::cross |

Cross product per-row |

igl::cumsum |

Cumulative summation |

igl::dot |

dot product per-row |

igl::eigs |

Solve sparse eigen value problem |

igl::find |

Find subscripts of non-zero entries |

igl::floor |

Round entries down to nearest integer |

igl::histc |

Counting occurrences for building a histogram |

igl::hsv_to_rgb |

Convert HSV colors to RGB (cf. Matlab’s hsv2rgb) |

igl::intersect |

Set intersection of matrix elements. |

igl::isdiag |

Determine whether matrix is diagonal |

igl::ismember |

Determine whether elements in A occur in B |

igl::jet |

Quantized colors along the rainbow. |

igl::max |

Compute maximum entry per row or column |

igl::median |

Compute the median per column |

igl::min |

Compute minimum entry per row or column |

igl::mod |

Compute per element modulo |

igl::mode |

Compute the mode per column |

igl::null |

Compute the null space basis of a matrix |

igl::nchoosek |

Compute all k-size combinations of n-long vector |

igl::orth |

Orthogonalization of a basis |

igl::parula |

Generate a quantized colormap from blue to yellow |

igl::pinv |

Compute Moore-Penrose pseudoinverse |

igl::randperm |

Generate a random permutation of [0,…,n-1] |

igl::rgb_to_hsv |

Convert RGB colors to HSV (cf. Matlab’s rgb2hsv) |

igl::repmat |

Repeat a matrix along columns and rows |

igl::round |

Per-element round to whole number |

igl::setdiff |

Set difference of matrix elements |

igl::setunion |

Set union of matrix elements |

igl::setxor |

Set exclusive “or” of matrix elements |

igl::slice |

Slice parts of matrix using index lists: (cf. Matlab’s B = A(I,J)) |

igl::slice_mask |

Slice parts of matrix using boolean masks: (cf. Matlab’s B = A(M,N)) |

igl::slice_into |

Slice left-hand side of matrix assignment using index lists (cf. Matlab’s B(I,J) = A) |

igl::sort |

Sort elements or rows of matrix |

igl::speye |

Identity as sparse matrix |

igl::sum |

Sum along columns or rows (of sparse matrix) |

igl::unique |

Extract unique elements or rows of matrix |

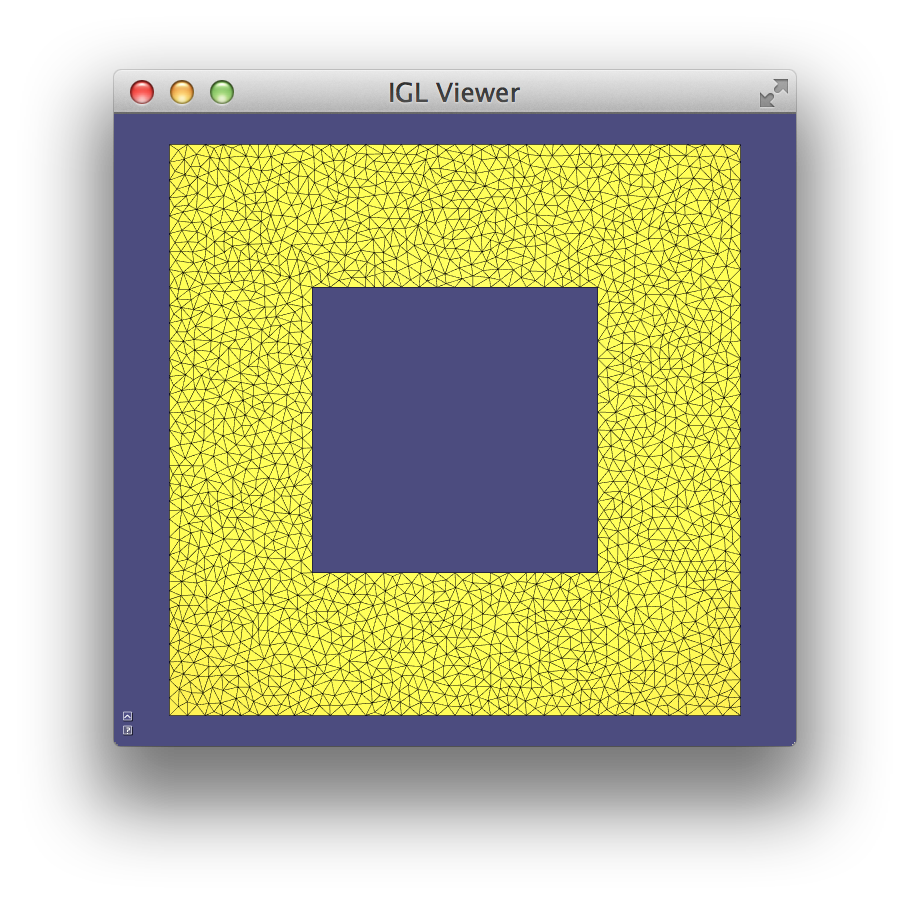

Laplace Equation¶

A common linear system in geometry processing is the Laplace equation:

∆z = 0

subject to some boundary conditions, for example Dirichlet boundary conditions (fixed value):

\left.z\right|_{\partial{S}} = z_{bc}

In the discrete setting, the linear system can be written as:

\mathbf{L} \mathbf{z} = \mathbf{0}

where \mathbf{L} is the n \times n discrete Laplacian and \mathbf{z} is a vector of per-vertex values. Most of \mathbf{z} correspond to interior vertices and are unknown, but some of \mathbf{z} represent values at boundary vertices. Their values are known so we may move their corresponding terms to the right-hand side.

Conceptually, this is very easy if we have sorted \mathbf{z} so that interior vertices come first and then boundary vertices:

The bottom block of equations is no longer meaningful so we’ll only consider the top block:

We can move the known values to the right-hand side:

Finally we can solve this equation for the unknown values at interior vertices \mathbf{z}_{in}.

However, our vertices will often not be sorted in this way. One option would be to sort V,

then proceed as above and then unsort the solution Z to match V. However,

this solution is not very general.

With array slicing no explicit sort is needed. Instead we can slice-out

submatrix blocks (\mathbf{L}_{in,in}, \mathbf{L}_{in,b}, etc.) and follow

the linear algebra above directly. Then we can slice the solution into the

rows of Z corresponding to the interior vertices (Example 303).

LaplaceEquation example solves a Laplace equation with Dirichlet boundary conditions.

Quadratic Energy Minimization¶

The same Laplace equation may be equivalently derived by minimizing Dirichlet energy subject to the same boundary conditions:

\mathop{\text{minimize }}_z \frac{1}{2}\int\limits_S \|\nabla z\|^2 dA

On our discrete mesh, recall that this becomes

\mathop{\text{minimize }}_\mathbf{z} \frac{1}{2}\mathbf{z}^T \mathbf{G}^T \mathbf{D} \mathbf{G} \mathbf{z} \rightarrow \mathop{\text{minimize }}_\mathbf{z} \mathbf{z}^T \mathbf{L} \mathbf{z}

The general problem of minimizing some energy over a mesh subject to fixed value boundary conditions is so wide spread that libigl has a dedicated api for solving such systems.

Let us consider a general quadratic minimization problem subject to different common constraints:

subject to

where

- \mathbf{Q} is a (usually sparse) n \times n positive semi-definite matrix of quadratic coefficients (Hessian),

- \mathbf{B} is a n \times 1 vector of linear coefficients,

- \mathbf{z}_b is a |b| \times 1 portion of \mathbf{z} corresponding to boundary or fixed vertices,

- \mathbf{z}_{bc} is a |b| \times 1 vector of known values corresponding to \mathbf{z}_b,

- \mathbf{A}_{eq} is a (usually sparse) m \times n matrix of linear equality constraint coefficients (one row per constraint), and

- \mathbf{B}_{eq} is a m \times 1 vector of linear equality constraint right-hand side values.

This specification is overly general as we could write \mathbf{z}_b = \mathbf{z}_{bc} as rows of \mathbf{A}_{eq} \mathbf{z} = \mathbf{B}_{eq}, but these fixed value constraints appear so often that they merit a dedicated place in the API.

In libigl, solving such quadratic optimization problems is split into two routines: precomputation and solve. Precomputation only depends on the quadratic coefficients, known value indices and linear constraint coefficients:

igl::min_quad_with_fixed_data mqwf;

igl::min_quad_with_fixed_precompute(Q,b,Aeq,true,mqwf);

The output is a struct mqwf which contains the system matrix factorization

and is used during solving with arbitrary linear terms, known values, and

constraint in the right-hand sides:

igl::min_quad_with_fixed_solve(mqwf,B,bc,Beq,Z);

The output Z is a n \times 1 vector of solutions with fixed values

correctly placed to match the mesh vertices V.

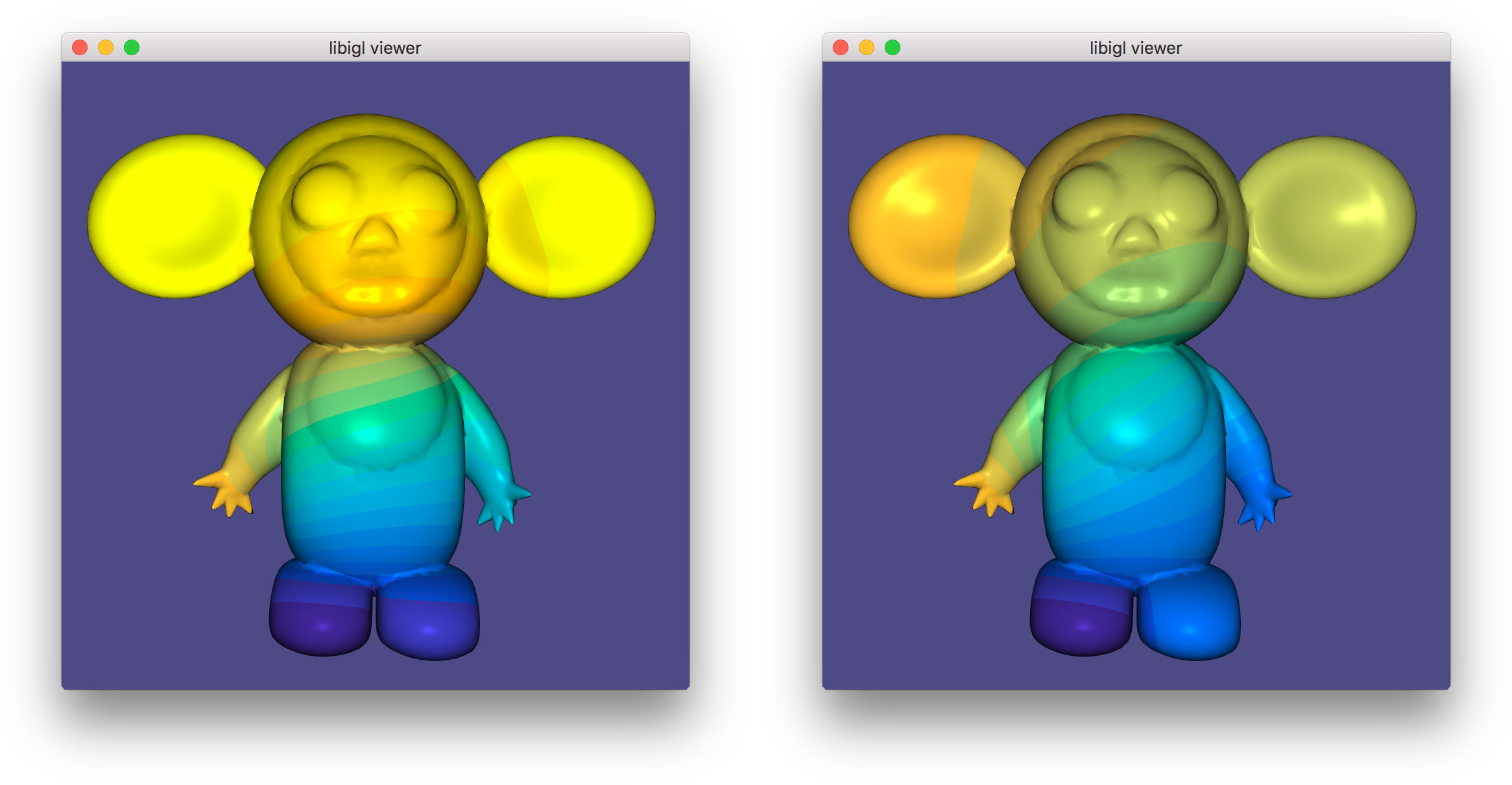

Linear Equality Constraints¶

We saw above that min_quad_with_fixed_* in libigl provides a compact way to

solve general quadratic programs. Let’s consider another example, this time

with active linear equality constraints. Specifically let’s solve the

bi-Laplace equation or equivalently minimize the Laplace energy:

subject to fixed value constraints and a linear equality constraint:

z_{a} = 1, z_{b} = -1 and z_{c} = z_{d}.

Notice that we can rewrite the last constraint in the familiar form from above:

z_{c} - z_{d} = 0.

Now we can assembly Aeq as a 1 \times n sparse matrix with a coefficient

1 in the column corresponding to vertex c and a -1 at d. The right-hand

side Beq is simply zero.

Internally, min_quad_with_fixed_* solves using the Lagrange Multiplier

method. This method adds additional variables for each linear constraint (in

general a m \times 1 vector of variables \lambda) and then solves the

saddle problem:

This can be rewritten in a more familiar form by stacking \mathbf{z} and \lambda into one (m+n) \times 1 vector of unknowns:

Differentiating with respect to \left( \mathbf{z}^T \lambda^T \right) reveals

a linear system and we can solve for \mathbf{z} and \lambda. The only

difference from the straight quadratic minimization system, is that this

saddle problem system will not be positive definite. Thus, we must use a

different factorization technique (LDLT rather than LLT): libigl’s

min_quad_with_fixed_precompute automatically chooses the correct solver in

the presence of linear equality constraints (Example 304).

LinearEqualityConstraints first solves with just fixed value constraints (left: 1 and -1 on the left hand and foot respectively), then solves with an additional linear equality constraint (right: points on right hand and foot constrained to be equal).

Quadratic Programming¶

We can generalize the quadratic optimization in the previous section even more by allowing inequality constraints. Specifically box constraints (lower and upper bounds):

\mathbf{l} \le \mathbf{z} \le \mathbf{u},

where \mathbf{l},\mathbf{u} are n \times 1 vectors of lower and upper bounds and general linear inequality constraints:

\mathbf{A}_{ieq} \mathbf{z} \le \mathbf{B}_{ieq},

where \mathbf{A}_{ieq} is a k \times n matrix of linear coefficients and \mathbf{B}_{ieq} is a k \times 1 matrix of constraint right-hand sides.

Again, we are overly general as the box constraints could be written as rows of the linear inequality constraints, but bounds appear frequently enough to merit a dedicated api.

Libigl implements its own active set routine for solving quadratric programs (QPs). This algorithm works by iteratively “activating” violated inequality constraints by enforcing them as equalities and “deactivating” constraints which are no longer needed.

After deciding which constraints are active at each iteration, the problem reduces to a quadratic minimization subject to linear equality constraints, and the method from the previous section is invoked. This is repeated until convergence.

Currently the implementation is efficient for box constraints and sparse non-overlapping linear inequality constraints.

Unlike alternative interior-point methods, the active set method benefits from a warm-start (initial guess for the solution vector \mathbf{z}).

igl::active_set_params as;

// Z is optional initial guess and output

igl::active_set(Q,B,b,bc,Aeq,Beq,Aieq,Bieq,lx,ux,as,Z);

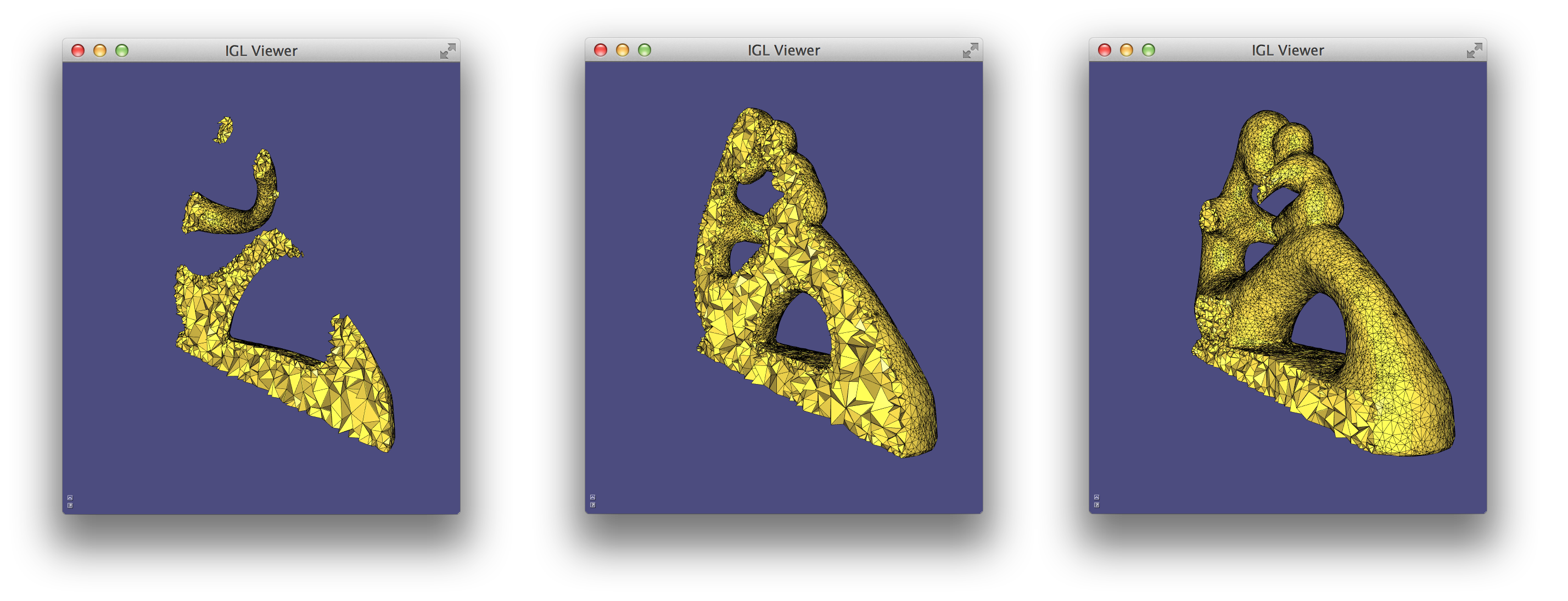

Eigen Decomposition¶

Libigl has rudimentary support for extracting eigen pairs of a generalized eigen value problem:

Ax = \lambda B x

where A is a sparse symmetric matrix and B is a sparse positive definite matrix. Most commonly in geometry processing, we let A=L the cotangent Laplacian and B=M the per-vertex mass matrix (e.g. 10). Typically applications will make use of the low frequency eigen modes. Analogous to the Fourier decomposition, a function f on a surface can be represented via its spectral decomposition of the eigen modes of the Laplace-Beltrami:

f = \sum\limits_{i=1}^\infty a_i \phi_i

where each \phi_i is an eigen function satisfying: \Delta \phi_i = \lambda_i \phi_i and a_i are scalar coefficients. For a discrete triangle mesh, a completely analogous decomposition exists, albeit with finite sum:

\mathbf{f} = \sum\limits_{i=1}^n a_i \phi_i

where now a column vector of values at vertices \mathbf{f} \in \mathcal{R}^n specifies a piecewise linear function and \phi_i \in \mathcal{R}^n is an eigen vector satisfying:

\mathbf{L} \phi_i = \lambda_i \mathbf{M} \phi_i.

Note that Vallet & Levy 10 propose solving a symmetrized standard eigen problem \mathbf{M}^{-1/2}\mathbf{L}\mathbf{M}^{-1/2} \phi_i = \lambda_i \phi_i. Libigl implements a generalized eigen problem solver so this unnecessary symmetrization can be avoided.

Often the sum above is truncated to the first k eigen vectors. If the low frequency modes are chosen, i.e. those corresponding to small \lambda_i values, then this truncation effectively regularizes \mathbf{f} to smooth, slowly changing functions over the mesh (e.g. 8). Modal analysis and model subspaces have been used frequently in real-time deformation (e.g. 7).

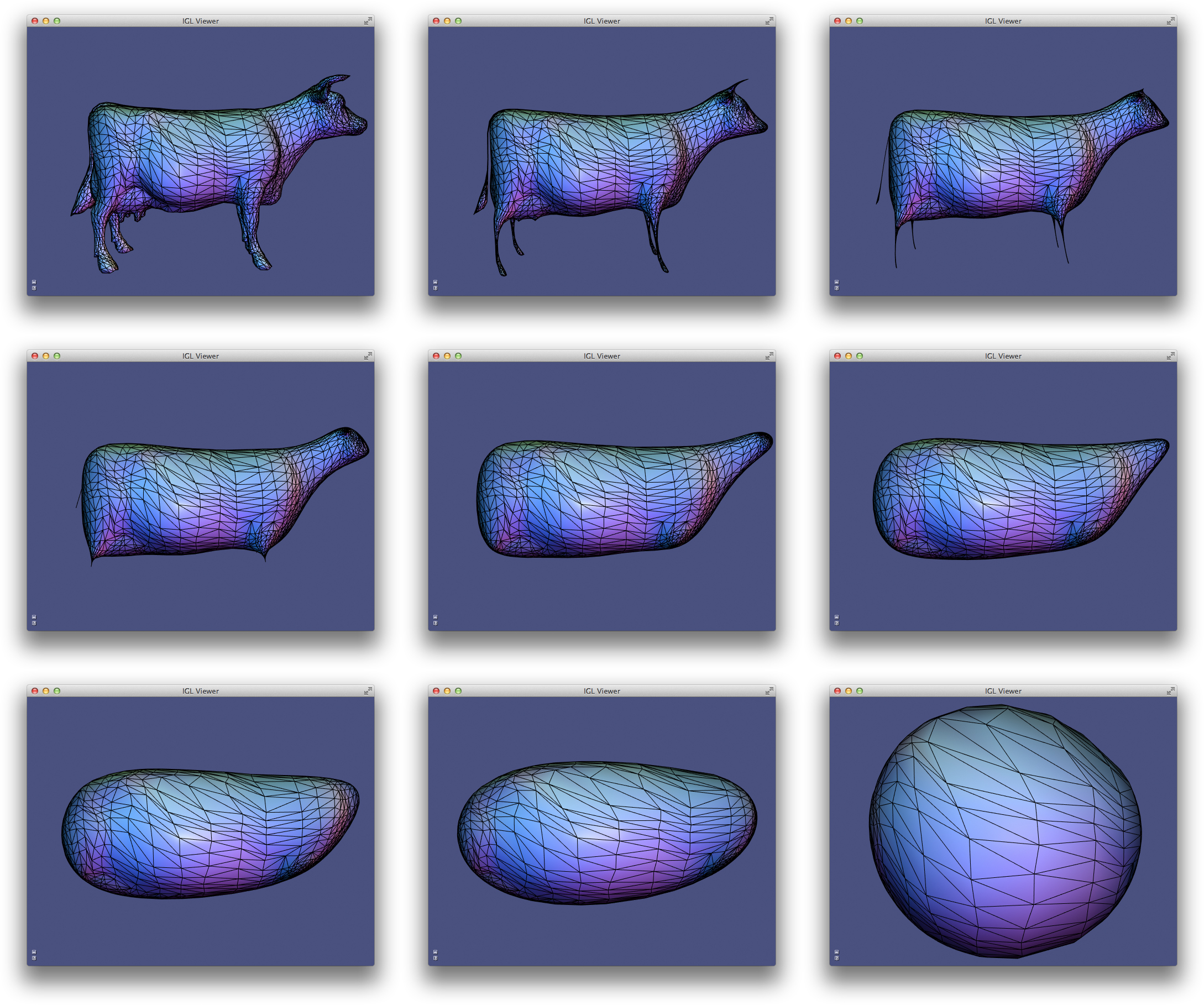

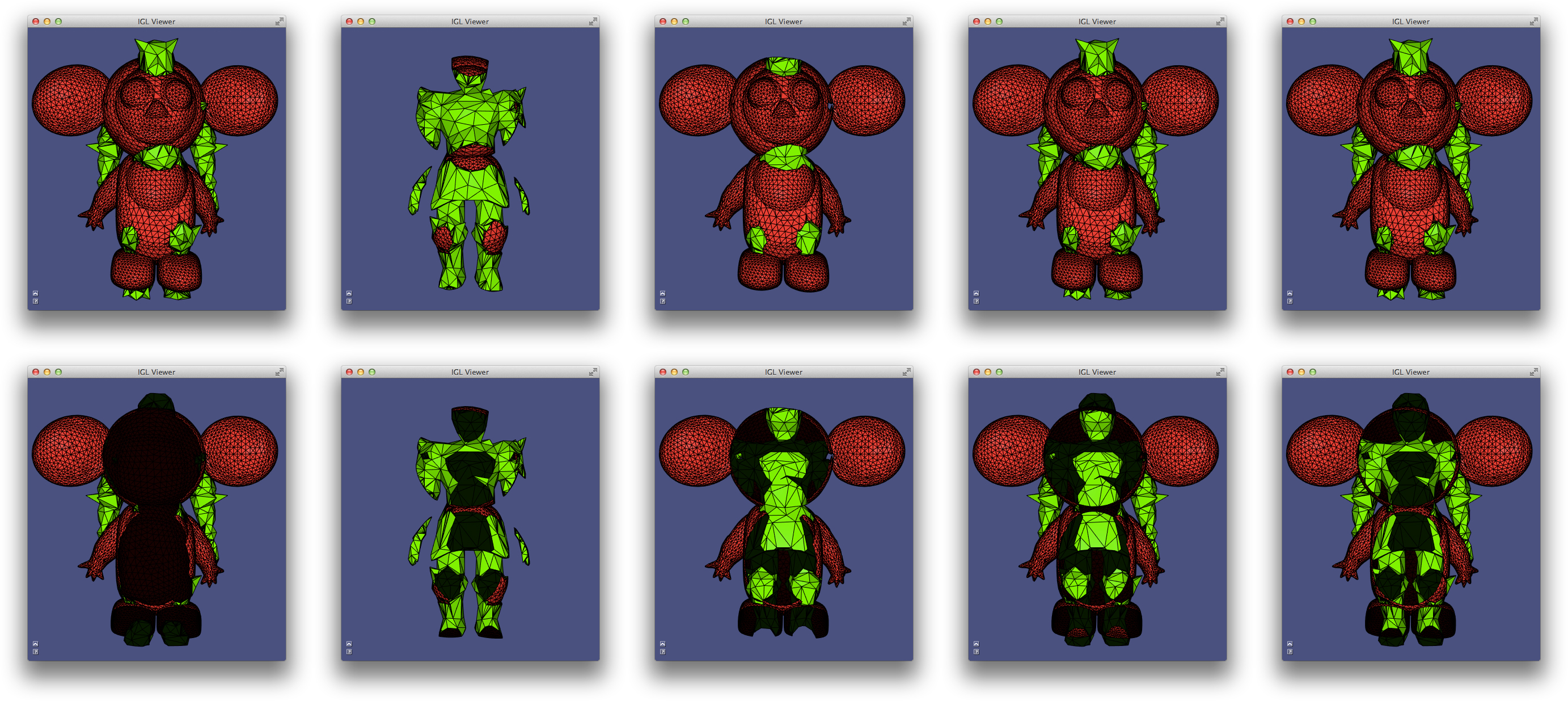

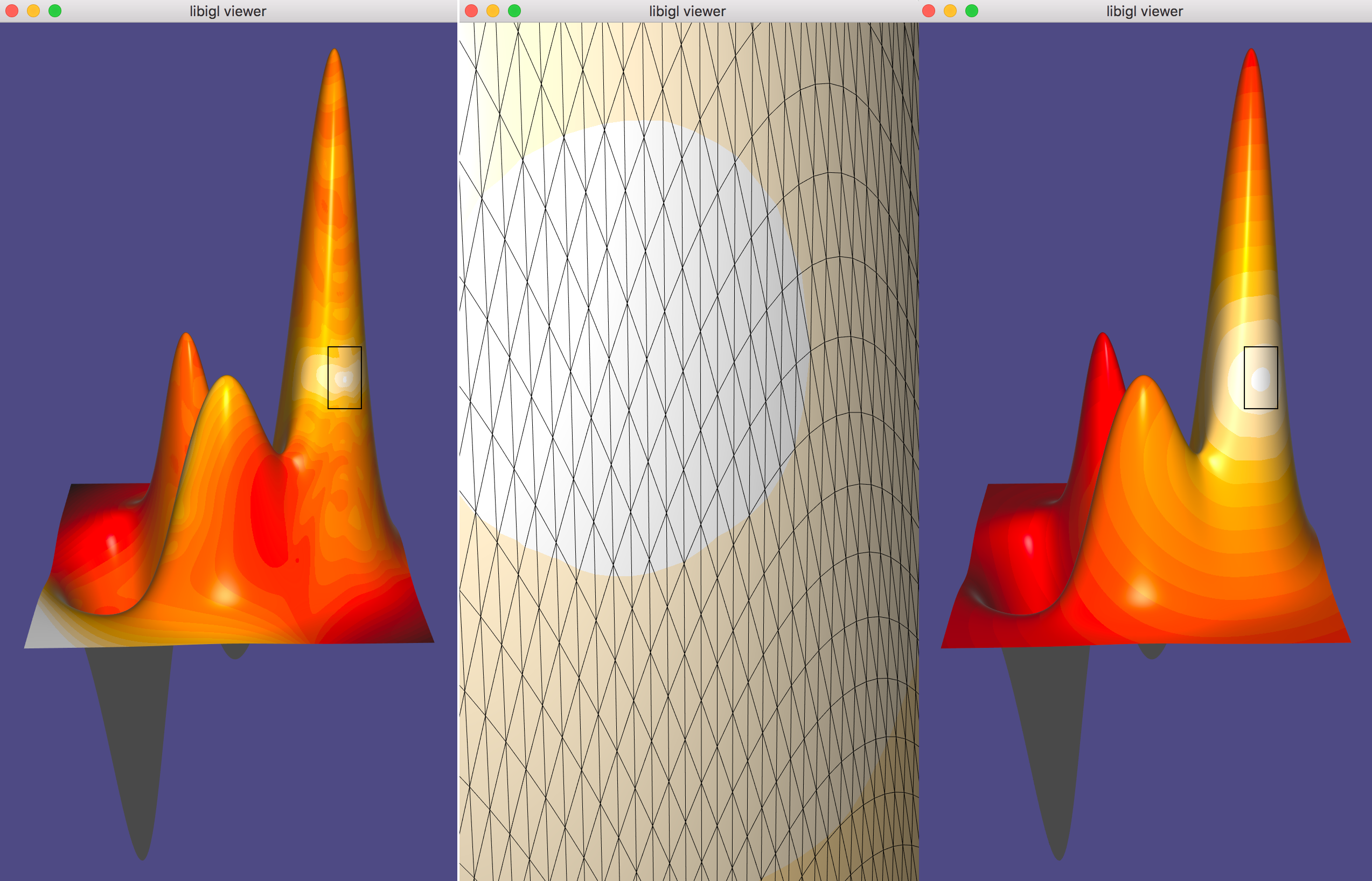

In Example 306), the first 5 eigen vectors

of the discrete Laplace-Beltrami operator are computed and displayed in

pseudo-color atop the beetle. Eigen vectors are computed using igl::eigs

(mirroring MATLAB’s eigs). The 5 eigen vectors are placed into the columns

of U and the eigen values are placed into the entries of S:

SparseMatrix<double> L,M;

igl::cotmatrix(V,F,L);

igl::massmatrix(V,F,igl::MASSMATRIX_TYPE_DEFAULT,M);

Eigen::MatrixXd U;

Eigen::VectorXd S;

igl::eigs(L,M,5,igl::EIGS_TYPE_SM,U,S);

Chapter 4: Shape Deformation¶

Modern mesh-based shape deformation methods satisfy user deformation constraints at handles (selected vertices or regions on the mesh) and propagate these handle deformations to the rest of shape smoothly and without removing or distorting details. Libigl provides implementations of a variety of state-of-the-art deformation techniques, ranging from quadratic mesh-based energy minimizers, to skinning methods, to non-linear elasticity-inspired techniques.

Biharmonic Deformation¶

The period of research between 2000 and 2010 produced a collection of techniques that cast the problem of handle-based shape deformation as a quadratic energy minimization problem or equivalently the solution to a linear partial differential equation.

There are many flavors of these techniques, but a prototypical subset are those that consider solutions to the bi-Laplace equation, that is a biharmonic function 11. This fourth-order PDE provides sufficient flexibility in boundary conditions to ensure C^1 continuity at handle constraints (in the limit under refinement) 15.

Biharmonic Surfaces¶

Let us first begin our discussion of biharmonic deformation, by considering biharmonic surfaces. We will casually define biharmonic surfaces as surface whose position functions are biharmonic with respect to some initial parameterization:

\Delta^2 \mathbf{x}' = 0

and subject to some handle constraints, conceptualized as “boundary conditions”:

\mathbf{x}'_{b} = \mathbf{x}_{bc}.

where \mathbf{x}' is the unknown 3D position of a point on the surface. So we are asking that the bi-Laplacian of each of spatial coordinate function to be zero.

In libigl, one can solve a biharmonic problem with igl::harmonic

and setting k=2 (bi-harmonic):

// U_bc contains deformation of boundary vertices b

igl::harmonic(V,F,b,U_bc,2,U);

This produces a smooth surface that interpolates the handle constraints, but all original details on the surface will be smoothed away. Most obviously, if the original surface is not already biharmonic, then giving all handles the identity deformation (keeping them at their rest positions) will not reproduce the original surface. Rather, the result will be the biharmonic surface that does interpolate those handle positions.

Thus, we may conclude that this is not an intuitive technique for shape deformation.

Biharmonic Deformation Fields¶

Now we know that one useful property for a deformation technique is “rest pose reproduction”: applying no deformation to the handles should apply no deformation to the shape.

To guarantee this by construction we can work with deformation fields (ie. displacements) \mathbf{d} rather than directly with positions \mathbf{x}. Then the deformed positions can be recovered as

\mathbf{x}' = \mathbf{x}+\mathbf{d}.

A smooth deformation field \mathbf{d} which interpolates the deformation fields of the handle constraints will impose a smooth deformed shape \mathbf{x}'. Naturally, we consider biharmonic deformation fields:

\Delta^2 \mathbf{d} = 0

subject to the same handle constraints, but rewritten in terms of their implied deformation field at the boundary (handles):

\mathbf{d}_b = \mathbf{x}_{bc} - \mathbf{x}_b.

Again we can use igl::harmonic with k=2, but this time solve for the

deformation field and then recover the deformed positions:

// U_bc contains deformation of boundary vertices b

D_bc = U_bc - igl::slice(V,b,1);

igl::harmonic(V,F,b,D_bc,2,D);

U = V+D;

Relationship To “differential Coordinates” And Laplacian Surface Editing¶

Biharmonic functions (whether positions or displacements) are solutions to the bi-Laplace equation, but also minimizers of the “Laplacian energy”. For example, for displacements \mathbf{d}, the energy reads

\int\limits_S \|\Delta \mathbf{d}\|^2 dA,

where we define \Delta \mathbf{d} to simply apply the Laplacian coordinate-wise.

By linearity of the Laplace(-Beltrami) operator we can reexpress this energy in terms of the original positions \mathbf{x} and the unknown positions \mathbf{x}' = \mathbf{x} - \mathbf{d}:

\int\limits_S \|\Delta (\mathbf{x}' - \mathbf{x})\|^2 dA = \int\limits_S \|\Delta \mathbf{x}' - \Delta \mathbf{x})\|^2 dA.

In the early work of Sorkine et al., the quantities \Delta \mathbf{x}' and \Delta \mathbf{x} were dubbed “differential coordinates” 21. Their deformations (without linearized rotations) is thus equivalent to biharmonic deformation fields.

Polyharmonic Deformation¶

We can generalize biharmonic deformation by considering different powers of the Laplacian, resulting in a series of PDEs of the form:

\Delta^k \mathbf{d} = 0.

with k\in{1,2,3,\dots}. The choice of k determines the level of continuity at the handles. In particular, k=1 implies C^0 at the boundary, k=2 implies C^1, k=3 implies C^2 and in general k implies C^{k-1}.

int k = 2;// or 1,3,4,...

igl::harmonic(V,F,b,bc,k,Z);

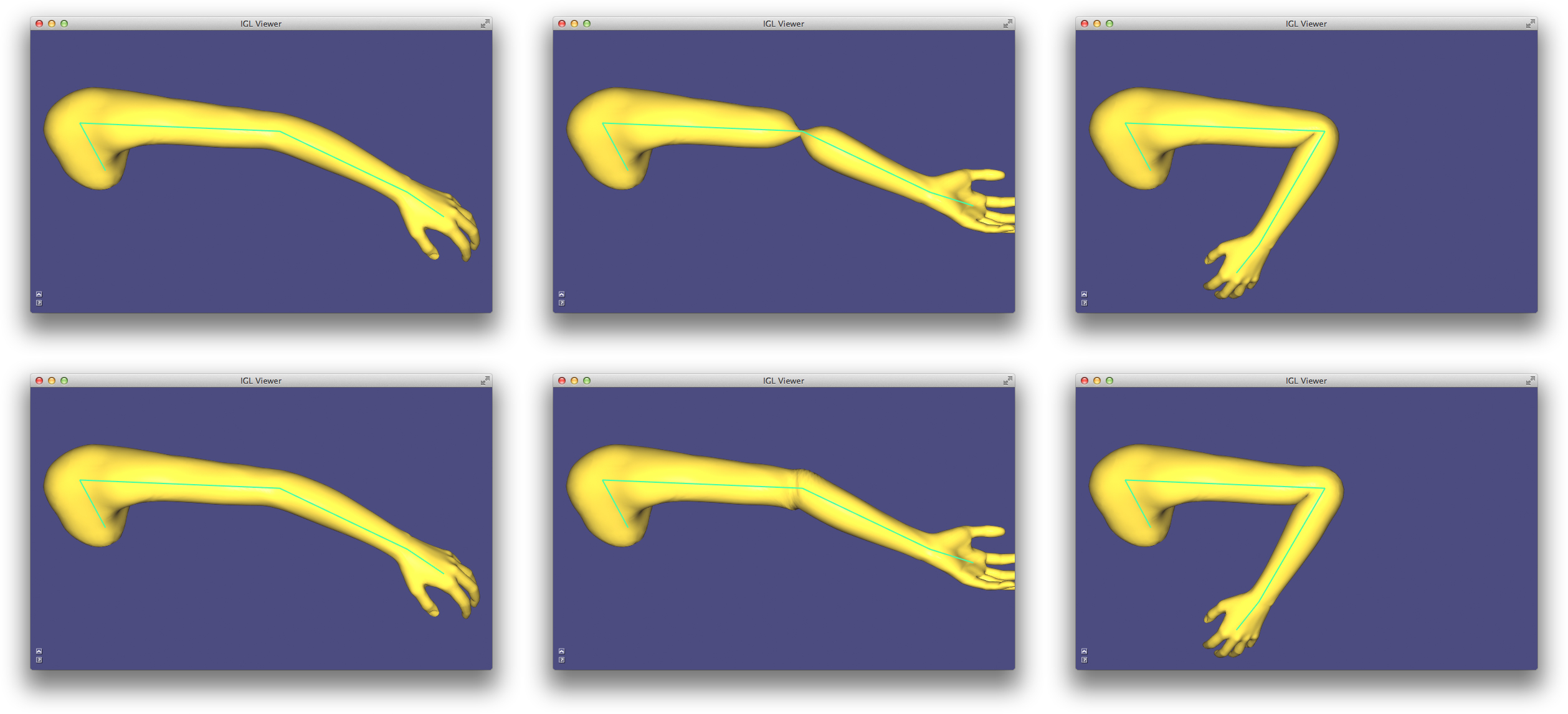

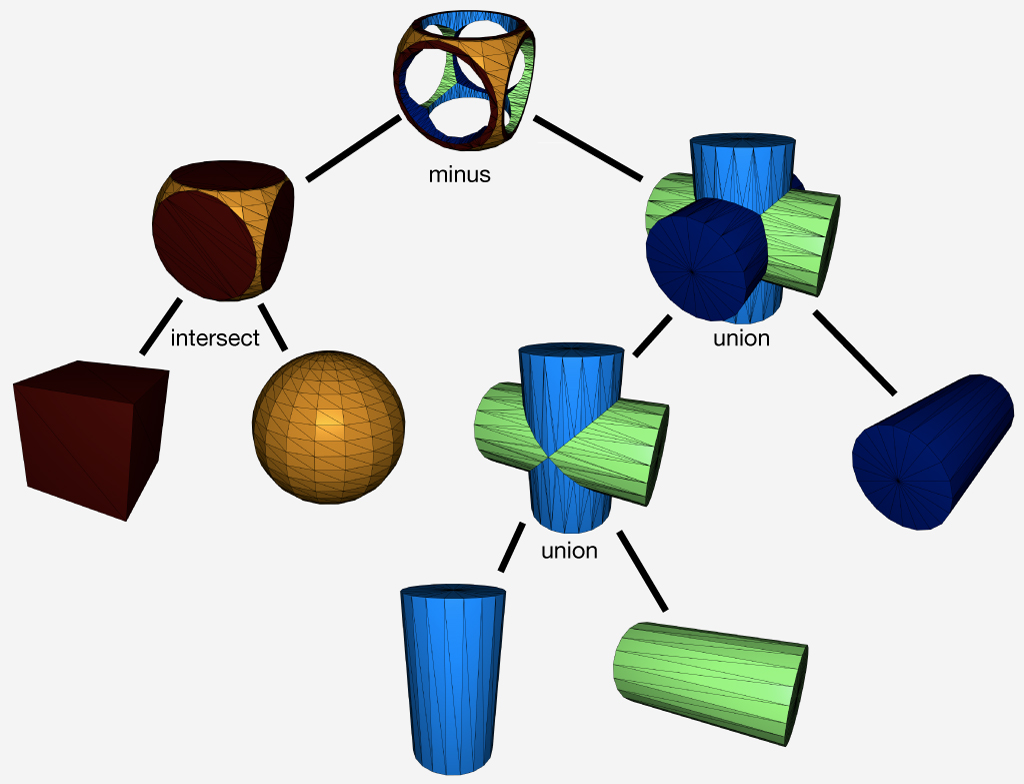

Bounded Biharmonic Weights¶

In computer animation, shape deformation is often referred to as “skinning”. Constraints are posed as relative rotations of internal rigid “bones” inside a character. The deformation method, or skinning method, determines how the surface of the character (i.e. its skin) should move as a function of the bone rotations.

The most popular technique is linear blend skinning. Each point on the shape computes its new location as a linear combination of bone transformations:

\mathbf{x}' = \sum\limits_{i = 1}^m w_i(\mathbf{x}) \mathbf{T}_i \left(\begin{array}{c}\mathbf{x}_i\\1\end{array}\right),

where w_i(\mathbf{x}) is the scalar weight function of the ith bone evaluated at \mathbf{x} and \mathbf{T}_i is the bone transformation as a 4 \times 3 matrix.

This formula is embarassingly parallel (computation at one point does not depend on shared data need by computation at another point). It is often implemented as a vertex shader. The weights and rest positions for each vertex are sent as vertex shader attributes and bone transformations are sent as uniforms. Then vertices are transformed within the vertex shader, just in time for rendering.

As the skinning formula is linear (hence its name), we can write it as matrix multiplication:

\mathbf{X}' = \mathbf{M} \mathbf{T},

where \mathbf{X}' is n \times 3 stack of deformed positions as row vectors, \mathbf{M} is a n \times m\cdot dim matrix containing weights and rest positions and \mathbf{T} is a m\cdot (dim+1) \times dim stack of transposed bone transformations.

Traditionally, the weight functions w_j are painted manually by skilled rigging professionals. Modern techniques now exist to compute weight functions automatically given the shape and a description of the skeleton (or in general any handle structure such as a cage, collection of points, selected regions, etc.).

Bounded biharmonic weights are one such technique that casts weight computation as a constrained optimization problem 13. The weights enforce smoothness by minimizing the familiar Laplacian energy:

\sum\limits_{i = 1}^m \int_S (\Delta w_i)^2 dA

subject to constraints which enforce interpolation of handle constraints:

w_i(\mathbf{x}) = \begin{cases} 1 & \text{ if } \mathbf{x} \in H_i\\ 0 & \text{ otherwise } \end{cases},

where H_i is the ith handle, and constraints which enforce non-negativity, parition of unity and encourage sparsity:

0\le w_i \le 1 and \sum\limits_{i=1}^m w_i = 1.

This is a quadratic programming problem and libigl solves it using its active set solver or by calling out to Mosek.

Dual Quaternion Skinning¶

Even with high quality weights, linear blend skinning is limited. In particular, it suffers from known artifacts stemming from blending rotations as matrices: a weight combination of rotation matrices is not necessarily a rotation. Consider an equal blend between rotating by -\pi/2 and by \pi/2 about the z-axis. Intuitively one might expect to get the identity matrix, but instead the blend is a degenerate matrix scaling the x and y coordinates by zero:

0.5\left(\begin{array}{ccc}0&-1&0\\1&0&0\\0&0&1\end{array}\right)+ 0.5\left(\begin{array}{ccc}0&1&0\\-1&0&0\\0&0&1\end{array}\right)= \left(\begin{array}{ccc}0&0&0\\0&0&0\\0&0&1\end{array}\right)

In practice, this means the shape shrinks and collapses in regions where bone weights overlap: near joints.

Dual quaternion skinning presents a solution 17. This method represents rigid transformations as a pair of unit quaternions, \hat{\mathbf{q}}. The linear blend skinning formula is replaced with a linear blend of dual quaternions:

\mathbf{x}' = \cfrac{\sum\limits_{i=1}^m w_i(\mathbf{x})\hat{\mathbf{q}_i}} {\left\|\sum\limits_{i=1}^m w_i(\mathbf{x})\hat{\mathbf{q}_i}\right\|} \mathbf{x},

where \hat{\mathbf{q}_i} is the dual quaternion representation of the rigid transformation of bone i. The normalization forces the result of the linear blending to again be a unit dual quaternion and thus also a rigid transformation.

Like linear blend skinning, dual quaternion skinning is best performed in the

vertex shader. The only difference being that bone transformations are sent as

dual quaternions rather than affine transformation matrices. Libigl supports

CPU-side dual quaternion skinning with the igl::dqs function, which takes a

more traditional representation of rigid transformations as input and

internally converts to the dual quaternion representation before blending:

// vQ is a list of rotations as quaternions

// vT is a list of translations

igl::dqs(V,W,vQ,vT,U);

As-rigid-as-possible¶

Skinning and other linear methods for deformation are inherently limited. Difficult arises especially when large rotations are imposed by the handle constraints.

In the context of energy-minimization approaches, the problem stems from comparing positions (our displacements) in the coordinate frame of the undeformed shape. These quadratic energies are at best invariant to global rotations of the entire shape, but not smoothly varying local rotations. Thus linear techniques will not produce non-trivial bending and twisting.

Furthermore, when considering solid shapes (e.g. discretized with tetrahedral meshes) linear methods struggle to maintain local volume, and they often suffer from shrinking and bulging artifacts.

Non-linear deformation techniques present a solution to these problems. They work by comparing the deformation of a mesh vertex to its rest position rotated to a new coordinate frame which best matches the deformation. The non-linearity stems from the mutual dependence of the deformation and the best-fit rotation. These techniques are often labeled “as-rigid-as-possible” as they penalize the sum of all local deformations’ deviations from rotations.

To arrive at such an energy, let’s consider a simple per-triangle energy:

E_\text{linear}(\mathbf{X}') = \sum\limits_{t \in T} a_t \sum\limits_{\{i,j\} \in t} w_{ij} \left\| \left(\mathbf{x}'_i - \mathbf{x}'_j\right) - \left(\mathbf{x}_i - \mathbf{x}_j\right)\right\|^2

where \mathbf{X}' are the mesh’s unknown deformed vertex positions, t is a triangle in a list of triangles T, a_t is the area of triangle t and \{i,j\} is an edge in triangle t. Thus, this energy measures the norm of change between an edge vector in the original mesh \left(\mathbf{x}_i - \mathbf{x}_j\right) and the unknown mesh \left(\mathbf{x}'_i - \mathbf{x}'_j\right).

This energy is not rotation invariant. If we rotate the mesh by 90 degrees the change in edge vectors not aligned with the axis of rotation will be large, despite the overall deformation being perfectly rigid.

So, the “as-rigid-as-possible” solution is to append auxiliary variables \mathbf{R}_t for each triangle t which are constrained to be rotations. Then the energy is rewritten, this time comparing deformed edge vectors to their rotated rest counterparts:

E_\text{arap}(\mathbf{X}',\{\mathbf{R}_1,\dots,\mathbf{R}_{|T|}\}) = \sum\limits_{t \in T} a_t \sum\limits_{\{i,j\} \in t} w_{ij} \left\| \left(\mathbf{x}'_i - \mathbf{x}'_j\right)- \mathbf{R}_t\left(\mathbf{x}_i - \mathbf{x}_j\right)\right\|^2.

The separation into the primary vertex position variables \mathbf{X}' and the rotations \{\mathbf{R}_1,\dots,\mathbf{R}_{|T|}\} lead to strategy for optimization, too. If the rotations \{\mathbf{R}_1,\dots,\mathbf{R}_{|T|}\} are held fixed then the energy is quadratic in the remaining variables \mathbf{X}' and can be optimized by solving a (sparse) global linear system. Alternatively, if \mathbf{X}' are held fixed then each rotation is the solution to a localized Procrustes problem (found via 3 \times 3 SVD or polar decompostion). These two steps—local and global—each weakly decrease the energy, thus we may safely iterate them until convergence.

The different flavors of “as-rigid-as-possible” depend on the dimension and codimension of the domain and the edge-sets T. The proposed surface manipulation technique by Sorkine and Alexa 22, considers T to be the set of sets of edges emanating from each vertex (spokes). Later, Chao et al. derived the relationship between “as-rigid-as-possible” mesh energies and co-rotational elasticity considering 0-codimension elements as edge-sets: triangles in 2D and tetrahedra in 3D 12. They also showed how Sorkine and Alexa’s edge-sets are not a discretization of a continuous energy, proposing instead edge-sets for surfaces containing all edges of elements incident on a vertex (spokes and rims). They show that this amounts to measuring bending, albeit in a discretization-dependent way.

Libigl, supports these common flavors. Selecting one is a matter of setting the energy type before the precompuation phase:

igl::ARAPData arap_data;

arap_data.energy = igl::ARAP_ENERGY_TYPE_SPOKES;

//arap_data.energy = igl::ARAP_ENERGY_TYPE_SPOKES_AND_RIMS;

//arap_data.energy = igl::ARAP_ENERGY_TYPE_ELEMENTS; //triangles or tets

igl::arap_precomputation(V,F,dim,b,arap_data);

Just like igl::min_quad_with_fixed_*, this precomputation phase only depends

on the mesh, fixed vertex indices b and the energy parameters. To solve with

certain constraints on the positions of vertices in b, we may call:

igl::arap_solve(bc,arap_data,U);

which uses U as an initial guess and then computes the solution into it.

Libigl’s implementation of as-rigid-as-possible deformation takes advantage of the highly optimized singular value decomposition code from McAdams et al. 20 which leverages SSE intrinsics.

The concept of local rigidity will be revisited shortly in the context of surface parameterization.

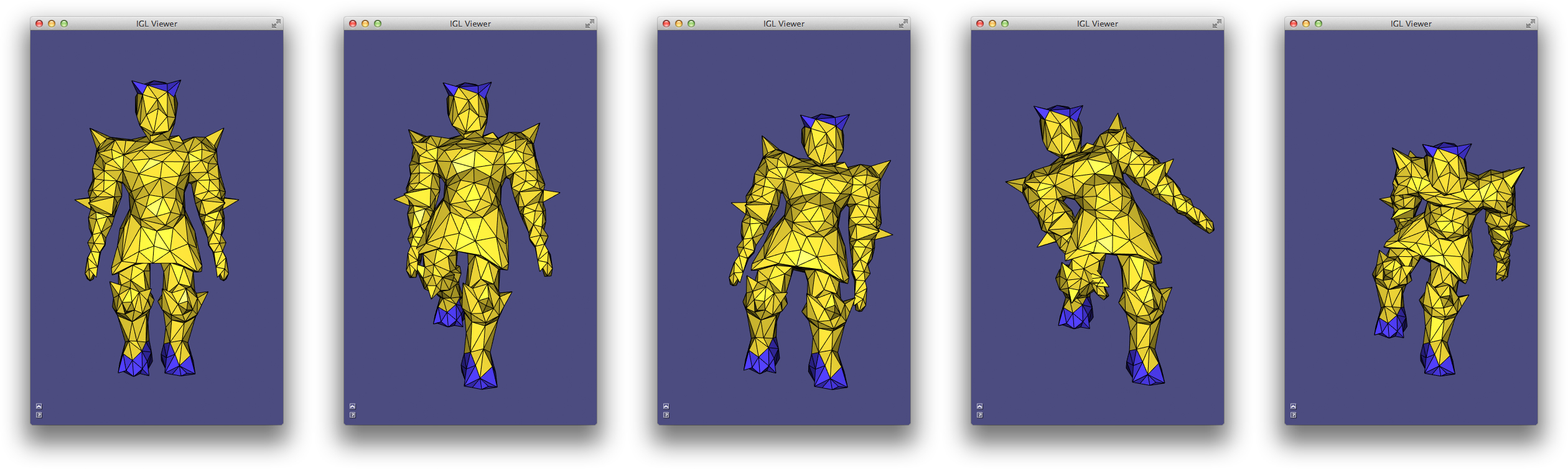

Fast Automatic Skinning Transformations¶

Non-linear optimization is, unsurprisingly, slower than its linear cousins. In the case of the as-rigid-as-possible optimization, the bottleneck is typically the large number of polar decompositions necessary to recover best fit rotations for each edge-set (i.e. for each triangle, tetrahedron, or vertex cell). Even if this code is optimized, the number of primary degrees of freedom is tied to the discretization level, despite the deformations’ low frequency behavior.

This invites two routes toward fast non-linear optimization. First, is it necessary (or even advantageous) to find so many best-fit rotations? Second, can we reduce the degrees of freedom to better reflect the frequency of the desired deformations.

Taken in turn, these optimizations culminate in a method which optimizes over the space of linear blend skinning deformations spanned by high-quality weights (i.e. manually painted ones or bounded biharmonic weights). This space is a low-dimensional subspace of all possible mesh deformations, captured by writing linear blend skinning in matrix form:

\mathbf{X}' = \mathbf{M}\mathbf{T}

where the mesh vertex positions in the n \times 3 matrix \mathbf{X}' are replaced by a linear combination of a small number of degrees of freedom in the (3+1)m \times 3 stack of transposed “handle” transformations. Swapping in \mathbf{M}\mathbf{T} for \mathbf{X}' in the ARAP energies above immediately sees performance gains during the global solve step as m << n.

The complexity of the local step—fitting rotations—is still bound to the original mesh discretization. However, if the skinning is well behaved, we can make the assumption that places on the shape with similar skinning weights will deform similarly and thus imply similar best-fit rotations. Therefore, we cluster edge-sets according to their representation in weight-space: where a vertex \mathbf{x} takes the coordinates [w_1(\mathbf{x}),w_2(\mathbf{x}),\dots,w_m(\mathbf{x})]. The number of clustered edge-sets show diminishing returns on the deformation quality so we may choose a small number of clusters, proportional to the number of skinning weight functions (rather than the number of discrete mesh vertices).

This proposed deformation model 14, can simultaneously be seen as a fast, subspace optimization for ARAP and as an automatic method for finding the best skinning transformation degrees of freedom.

A variety of user interfaces are supported via linear equality constraints on the skinning transformations associated with handles. To fix a transformation entirely we simply add the constraint:

\left(\begin{array}{cccc} 1 & 0 & 0 & 0\\ 0 & 1 & 0 & 0\\ 0 & 0 & 1 & 0\\ 0 & 0 & 0 & 1\end{array}\right) \mathbf{T}_i^T = \hat{\mathbf{T}}_i^T,

where \hat{\mathbf{T}}_i^T is the (3+1) \times 3 transposed fixed transformation for handle i.

To fix only the origin of a handle, we add a constraint requiring the transformation to interpolate a point in space (typically the centroid of all points with w_i = 1:

\mathbf{c}'^T\mathbf{T}_i^T = \mathbf{c}^T,

where \mathbf{c}^T is the 1 \times (3+1) position of the point at rest in transposed homogeneous coordinates, and \mathbf{c}'^T the point given by the user.

We can similarly fix just the linear part of the transformation at a handle, freeing the translation component (producing a “chickenhead” effect):

\left(\begin{array}{cccc} 1&0&0&0\\ 0&1&0&0\\ 0&0&1&0\end{array}\right) \mathbf{T}_i^T = \hat{\mathbf{L}}_i^T,

where \hat{\mathbf{L}}_i^T is the fixed 3 \times 3 linear part of the transformation at handle i.

And lastly we can allow the user to entirely free the transformation’s degrees of freedom, delegating the optimization to find the best possible values for all elements. To do this, we simply abstain from adding a corresponding constraint.

Arap With Grouped Edge-sets¶

Being a subspace method, an immediate disadvantage is the reduced degrees of freedom. This brings performance, but in some situations limits behavior too much. In such cases one can use the skinning subspace to build an effective clustering of rotation edge-sets for a traditional ARAP optimization: forgoing the subspace substitution. This has a two-fold effect. The cost of the rotation fitting, local step drastically reduces, and the deformations are “regularized” according the clusters. From a high level point of view, if the clusters are derived from skinning weights, then they will discourage bending, especially along isolines of the weight functions. If handles are not known in advance, one could also cluster according to a “geodesic embedding” like the biharmonic distance embedding.

In this light, we can think of the “spokes+rims” style surface ARAP as a (slight and redundant) clustering of the per-triangle edge-sets.

Biharmonic Coordinates¶

Linear blend skinning (as above) deforms a mesh by propagating full affine transformations at handles (bones, points, regions, etc.) to the rest of the shape via weights. Another deformation framework, called “generalized barycentric coordinates”, is a special case of linear blend skinning 16: transformations are restricted to pure translations and weights are required to retain affine precision. This latter requirement means that we can write the rest-position of any vertex in the mesh as the weighted combination of the control handle locations:

\mathbf{x} = \sum\limits_{i=1}^m w_i(\mathbf{x}) * \mathbf{c}_i,

where \mathbf{c}_i is the rest position of the ith control point. This simplifies the deformation formula at run-time. We can simply take the new position of each point of the shape to be the weighted combination of the translated control point positions:

\mathbf{x}' = \sum\limits_{i=1}^m w_i(\mathbf{x}) * \mathbf{c}_i'.

There are many different flavors of “generalized barycentric coordinates” (see table in “Automatic Methods” section, 16). The vague goal of “generalized barycentric coordinates” is to capture as many properties of simplicial barycentric coordinates (e.g. for triangles in 2D and tetrahedral in 3D) for larger sets of points or polyhedra. Some generalized barycentric coordinates can be computed in closed form; others require optimization-based precomputation. Nearly all flavors require connectivity information describing how the control points form a external polyhedron around the input shape: a cage. However, a recent techinique does not require a cage 23. This method ensures affine precision during optimization over weights of a smoothness energy with affine functions in its kernel:

\mathop{\text{min}}_\mathbf{W}\,\, \text{trace}(\frac{1}{2}\mathbf{W}^T \mathbf{A} \mathbf{W}), \text{subject to: } \mathbf{C} = \mathbf{W}\mathbf{C}

subject to interpolation constraints at selected vertices. If \mathbf{A} has affine functions in its kernel—that is, if \mathbf{A}\mathbf{V} = 0—then the weights \mathbf{W} will retain affine precision and we’ll have that:

\mathbf{V} = \mathbf{W}\mathbf{C}

the matrix form of the equality above. The proposed way to define \mathbf{A}

is to construct a matrix \mathbf{K} that measures the Laplacian at all

interior vertices and at all boundary vertices. The usual definition of the

discrete Laplacian (e.g. what libigl returns from igl::cotmatrix), measures

the Laplacian of a function for interior vertices, but measures the Laplacian

of a function minus the normal derivative of a function for boundary

vertices. Thus, we can let:

\mathbf{K} = \mathbf{L} + \mathbf{N}

where \mathbf{L} is the usual Laplacian and \mathbf{N} is matrix that computes normal derivatives of a piecewise-linear function at boundary vertices of a mesh. Then \mathbf{A} is taken as quadratic form computing the square of the integral-average of \mathbf{K} applied to a function and integrated over the mesh:

\mathbf{A} = (\mathbf{M}^{-1}\mathbf{K})^2_\mathbf{M} = \mathbf{K}^T \mathbf{M}^{-1} \mathbf{K}.

Since the Laplacian \mathbf{K} is a second-order derivative it measures zero on affine functions, thus \mathbf{A} has affine functions in its null space. A short derivation proves that this implies \mathbf{W} will be affine precise (see 23).

Minimizers of this “squared Laplacian” energy are in some sense discrete biharmonic functions. Thus they’re dubbed “biharmonic coordinates” (not the same as bounded biharmonic weights, which are not generalized barycentric coordinates).

In libigl, one can compute biharmonic coordinates given a mesh (V,F) and a

list S of selected control points or control regions (which act like skinning

handles):

igl::biharmonic_coordinates(V,F,S,W);

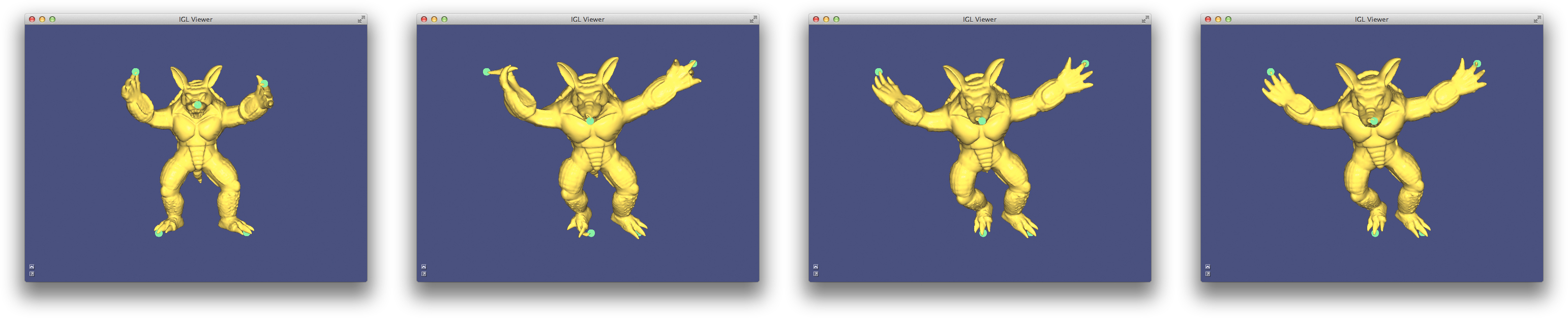

Direct Delta Mush¶

To produce a smooth deformation, linear blend skinning requires smooth skinning weights. These could be painted manually or computed automatically (e.g., using Bounded Biharmonic Weights 13). Even still, linear blend skinning suffers from shrinkage and collapse artifacts due to its inherent linearity (see earlier). “Direct Delta Mush” 18 skinning attempts to solve both of these issues by providing a direct skinning method that takes as input a rig with piecewise-constant weight functions (weights are either =0 or =1 everywhere). Direct delta mush is an adaptation of a less performant method called simply “Delta Mush” 19. The computation of Delta Mush separates into “bind pose” precomputation and runtime evaluation.

At bind time, Laplacian smoothing is conducted on the bind pose, moving each vertex from its rest position \mathbf{v}_i to a new position \tilde{\mathbf{v}}_i. The “delta” describing undoing this smoothing procedure, is computed and stored in a local coordinate frame associated with the vertex:

\delta_i = \mathbf{T}_i^{-1} (\mathbf{v}_i - \tilde{\mathbf{v}}_i).

At run time, the mesh is deformed using linear blend skinning and piecewise-constant weights. Near bones, the deformation is perfectly rigid, while near joints where bones meet, the mesh tears apart with a sudden change to the next rigid transformation. The same amount of Laplacian smoothing is applied at run time to this posed mesh. Moving each vertex to a location \tilde{\mathbf{u}}_i. A local frame \mathbf{S}_i is computed at this location and the cached deltas are adding in this resolved frame to restore the shape’s original details:

\mathbf{u}_i = \tilde{\mathbf{u}}_i + \mathbf{S}_i \delta_i.

The key insight of “Delta Mush” is that Laplacian smoothing acts similarly on the rest and posed models.

The key insight of “Direct Delta Mush” is that this process of Laplacian smoothing at runtime is nearly linear and local frames can be computed in a embarrassingly parallel fashion using SVD (cf. ARAP).

Direct delta mush moves the smoothing step into precomputation, resulting in

“vector-valued” skinning weights per-vertex per-bone, stored in a matrix

\Omega. In libigl, for a mesh (V,F) and (e.g., piecewise-constant) weights

W this precomputation is computed using:

igl::direct_delta_mush_precomputation(V, F,Wsparse, p, lambda, kappa, alpha, Omega);

the parameters p, lambda, kappa, alpha control the smoothness and compactness

of the resulting deformation. The precomputation’s output is the matrix Omega.

At runtime, \Omega is used to deform the mesh to its final locations. In libigl, this is computed using:

igl::direct_delta_mush(V, T_list, Omega, U);

where T_list is the input pose (affine) transformations associated with each

bone and the final locations are stored in U.

Mesh Deformation with Kelvinlet¶

Kelvinlets24 is a technique for real-time physically based volume sculpting of virtual elastic materials. The technique treats meshes as fluids made of compressible materials and deforms them by advecting points along a displacement field. It relies on analytical solutions to the equations of elasticity.

A quick primer on linear elastostatics 25¶

The equilibrium state of linear elasticity is determined by a displacement field \mathbf{u} : R^3 \rightarrow R^3 that minimizes the elastic potential energy

E(\mathbf{u}) = \frac{\mu}{2}\left\|\nabla\mathbf{u}\right\|^2 + \frac{\mu}{2(1-2\nu)}\left\|\nabla \cdot \mathbf{u}\right\|^2 - \langle\mathbf{b}, \mathbf{u}\rangle

where \mu is the elastic shear modulus, \nu is the Poisson ratio, and \mathbf{b} represents the external body forces.

The first term controls the smoothness of the displacement field, the second term penalizes infinitesimal volume change, and the last term indicates the external body forces to be counteracted.

One can associate the optimal displacement field with the solution to the critical point of the above equation, also known as the Navier-Cauchy equation:

\mu\Delta\mathbf{u} + \frac{\mu}{(1 - 2\nu)}\nabla(\nabla \cdot \mathbf{u}) + \mathbf{b} = 0

The Kelvinlet is the solution to the Navier-Cauchy equation in the case of a concentrated body load due to a force vector \mathbf{f} at a point \mathbf{x}_{0}, i.e., where \mathbf{b}(\mathbf{x}) = \mathbf{f} \delta(\mathbf{x} − \mathbf{x}_{0}) and can be written as:

\mathbf{u}(\mathbf{r}) = \left[ \frac{(a - b)}{r}I + \frac{\mathbf{b}}{\mathit{r}^3}\mathbf{r}\mathbf{r}^{t}\right] \mathbf{f} \equiv \mathbf{K}(\mathbf{r})\mathbf{f}

where \mathbf{K} is the Kelvinlet function, \mathbf{r} = \mathbf{x} − \mathbf{x}_{0} is the relative position vector from the load location \mathbf{x}_{0} to an observation point \mathbf{x}, \mathit{r} is the norm of \mathbf{r}, a = \frac{1}{4\pi\mu} and b = \frac{a}{(1-\nu)}

The displacement field \mathbf{u}(\mathbf{x} − \mathbf{x}_{0}) deforms a point \mathbf{x} in a linear elastic material to \mathbf{x} + \mathbf{u}(\mathbf{x} − \mathbf{x}_{0}). The associated deformation gradient is then defined by a 3×3 matrix of the form \mathbf{G}(\mathbf{x} − \mathbf{x}_{0}) = \mathbf{I} + \nabla\mathbf{u}(\mathbf{x} − \mathbf{x}_{0}).

This gradient \mathbf{G}(\mathbf{r}) determines the different properties of the displacement field \mathbf{u(\mathbf{r})}. For instance, the skew-symmetric part of \nabla\mathbf{u}(\mathbf{r}) indicates the rotation induced by \mathbf{u(\mathbf{r})}, while its symmetric part corresponds to the elastic strain and determines the stretching. The strain tensor can also be decomposed into a trace term that represents the scaling of the volume of the elastic medium, and a traceless term that represents the pinching deformation.

This forms the fundamentals of the Kelvinlet brushes.

Regularized kelvinlets¶

The concentrated body load at a single point \mathbf{x}_{0} introduces a singularity to the Kelvinlet solution at \mathbf{x}_{0}. For this reason, the kelvinlet equation is modified to:

\mathbf{u_{\epsilon}}(\mathbf{r}) = \left[ \frac{(a - b)}{r_{\epsilon}}I + \frac{\mathbf{b}}{\mathit{r_{\epsilon}}^3}\mathbf{r_{\epsilon}}\mathbf{r_{\epsilon}}^{t} + \frac{a}{2}\frac{\epsilon^2}{r_{\epsilon}^3}I\right] \equiv \mathbf{K_{\epsilon}}(\mathbf{r})\mathbf{f}

where \mathbf{r}_{\epsilon} = \sqrt{r^2 + \epsilon^2} is the regularized distance, \epsilon > 0 is the radius of the sculpting brush.